Machine learning is a unique field that is rapidly evolving as the years progress; once optimal algorithms are often replaced within ten to twenty years to make way for more efficient methods. The Histogram of Oriented Gradients detection method (HOG for short) is one of these antique algorithms, being almost a decade old; however, one thing that differentiates it from others is that it is still heavily used today with fantastic results.

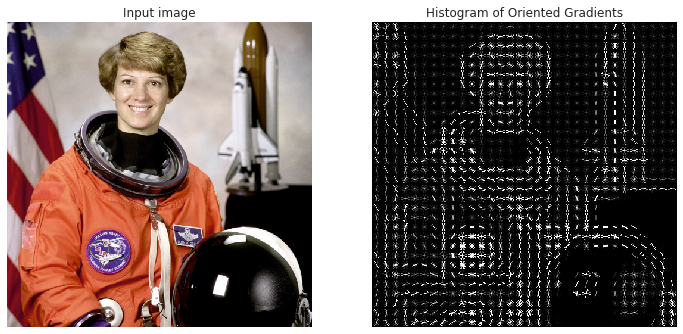

The Histogram of Oriented Gradients method is mainly utilized for face and image detection to classify images, like the one that you see above. This field has a numerous amount of applications ranging from autonomous vehicles to surveillance techniques to smarter advertising. This article will do a deep dive on the implementation behind the HOG detection method and understand why it is still so commonly used today. I hope you all are as interested as I am to get into the nitty-gritty of HOG, so let’s get started!

Note: This article does require a high level of understanding about machine learning and mathematical concepts like normalization, gradients, and polar coordinates, which can be a bit confusing once we get into it.

Feature Descriptors

You may hear this name pop a lot throughout this article, but feature descriptors simply mean the representation of an image that simply extracts the useful information and disregards the unnecessary information from the image.

In the case of HOG feature descriptors, we also convert the image (width x height x channels) into a feature vector of length n chosen by the user. Although it may be hard to view these images, these images will be perfect for image classification algorithms like SVMs in order to produce good results.

Now, you guys might be wondering how the HOG feature descriptor will actually sort through this unnecessary information. It simply does this by using the histogram of gradients which are used as the features of an image. Gradients are extremely important for checking for edges and corners in an image (through regions of intensity changes) since they often will pack much more information than flat regions.

Preprocessing

A key mistake that individuals often perform when doing HOG object detection is that they forget to preprocess the image so that it has a fixed aspect ratio. A common aspect ratio (width:height) is 1:2, so your images can be 100×200, 500×1000, etc.

For a particular image that you choose, make sure that you identify the section that you want so that it correctly fits the aspect ratio and allows for easier accessibility in the long run.

To make the HOG feature descriptor, as discussed above, we need to calculate the respective horizontal and vertical gradients to actually provide the histogram that can be used later in the algorithm. This can be done by simply filtering the image through these kernels:

Kernels like these are often used in image classification mainly in convolutional neural networks in order to find the edges and important points in a particular image. If you are interested in diving deeper into understanding kernels, here is an article that describes these kernels visually.

Then, the magnitude and the direction of the gradients can simply be found by using the following formulas (note that this is simply converting from Cartesian to Polar coordinates in a sense):

Now, while these formulas above may seem a bit daunting, just remember that much of this implementation will be handled by the computer, so there is no need for actually computing these gradients by yourself. If you are more interested in delving deeper into the mathematics behind calculating these gradients, I suggest that you check out this article here.

If you did not understand how the gradients were calculated, that’s perfectly fine. The main takeaway that you should get from gradients are that the magnitude of the gradient increases wherever there is a sharp change in intensity. The picture below highlights an optimal example of gradients do this as they fire around the edges of the images through these gradients. The unnecessary information is removed like the background and only the essential parts remain.

To summarize, understand that gradients have a magnitude and direction where the magnitude is calculated through the maximum of the magnitude of the gradients from the three color channels and the angle is calculated from the angle corresponding to the maximum gradient out of the three channels evaluated. These can produce images like the ones above where it can detect important information and disregard the unnecessary parts.

To move on to the next step of the HOG algorithm, make sure that the image is divided into cells so that the histogram of gradients can be calculated for each cell. For example, if you have a 64×128 image, divide your image into 8×8 cells (this will involve a bit of tweaking and guessing!).

Feature descriptors will allow for a concise and succinct representation of particular patches of the images; taking our example from above, an 8×8 cell can simply be explained using 128 numbers (8x8x2 where the last 2 are from the gradient magnitude and directional values). By further converting these numbers to calculate histograms, we allow for an image patch that is much more robust to noise and more compact.

For the histogram, make sure to split it up into nine separate bins, each corresponding to angles from 0–160 in increments of 20. Here’s an example of how an image with the respective gradient magnitudes and directions can look like (notice the arrows get larger depending on the magnitude).

Now, how do we decide where each pixel goes inside of the histogram? It’s simple. A bin is selected depending on the direction chosen, and the value that is subsequently placed inside of the bin is dependent on the magnitude. Note that if a pixel is halfway between two bins, then it splits up the magnitudes accordingly depending on their distance away from each respective bin. After performing this process, a histogram can be formed, and the bins that have the most weight can easily be seen.

Lighting variations are another major factor that can mess up how these gradients are calculated. For example, if the picture was darker by 1/2 of the current brightness, then the gradient magnitudes and subsequently the histogram magnitudes would all decrease by half. Therefore, we want our descriptor to be devoid of lighting variations so that it is unbiased and effective.

The typical process of normalization occurs by simply calculating the length of a vector through its magnitude and then simply dividing all elements of that vector with the length. For example, if you had a vector of [1,2, 3], then the length of the vector, using basic mathematical principles, would be the square root of 14. By dividing the vector by this length, you arrive at your new normalized vector of [0.27, 0.53, 0.80].

This process of normalization can be performed depending on your preference (whether you want to perform on the 8×8 block or even a larger 16×16 block). Just remember to first turn these blocks into element vectors so that the normalization highlighted above can be performed.

Image Visualization

In many instances, the HOG descriptors are often visualized with the image on the right in order to get an accurate representation of the shape of the person. This visualization can be extremely useful in understanding where the gradients shift and knowing where the objects are inside of the image.

The Histogram of Oriented Gradients object detection method can undoubtedly lead to great advancements in the future in the field of image recognition and face detection. From boosting AR tools to improving vision for blind individuals, this field can have a large impact on multiple industries.

The application that I’m the most excited for is in the field of autonomous vehicles. There are many different approaches to computer vision to detect vehicles and objects, so it makes sense that there aren’t many companies implementing HOG in their self-driving vehicles. I hope that some companies see the viability of HOG and utilize this in the future.

There aren’t many resources online explaining how to use HOG for image detection, so comment below if you all would be interested in getting a future article on the coding implementation of HOG!

- The Histogram of Oriented Gradients method (or HOG for short) is used for object detection and image recognition.

- HOG is based off of feature descriptors, which extract the useful information and discard the unnecessary parts.

- HOG calculates the horizontal and vertical component of the gradient’s magnitude and direction of each individual pixel and then organizes the information into a 9-bin histogram to determine shifts in the data.

- Block normalization can be further utilized to make the model more optimal and less biased.

- HOGs can be used everywhere from the field of autonomous vehicles to the field of AR and VR (mostly anything involving image detection).