Attaching meaning to digital data. A deep learning model will only perform as good as your training data.

One of the most time-consuming steps in the life cycle of an artificial intelligence algorithm is gathering data and preparing it. A deep learning model will only perform as good as your training data. And by that, I mean data that is appropriate to our task and annotated. Data annotation, also frequently referred to as data labeling, is for sure the hardest and longest step that will make your application fail or succeed.

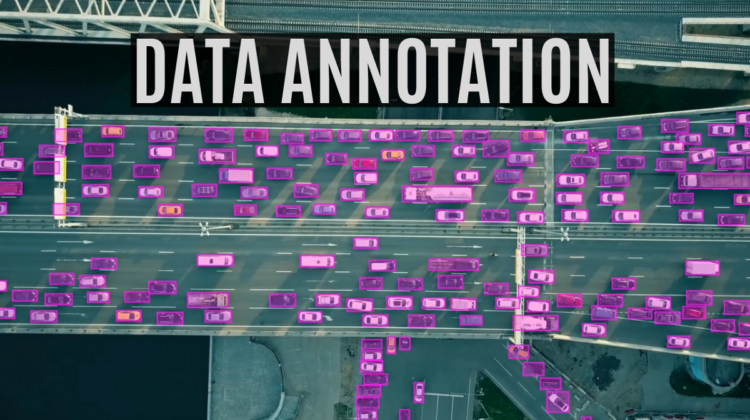

Data labeling is the process of attaching meaning to digital data. This process can be manual but is usually performed or assisted by software and requires a human touch. Data labeling is the most important part of data preprocessing for machine learning algorithms, particularly for supervised learning, in which both input and output data are labeled for classification to provide a learning basis for future data processing. These labels can take many forms, such as image annotations, text annotations, video annotations, and even audio annotations. In short, it is basically any additional information about the data fed to our algorithm that tunes it to achieve precise and desired results.

For example, if we take a road safety system, and we train it to identify whether someone is looking at the road, or not while driving. We could be provided with multiple videos of various drivers and cars annotated, looking like this one, where it would have more information about where the face of the person is, and more specifically where his eyes are. From these video examples, the algorithm would learn the common features of each, which in this case would be to understand where the person is looking, enabling it to correctly identify if the person is looking at the road in unseen and unlabeled images.

We know that deep learning systems often require massive amounts of data to establish a foundation for reliable learning patterns. We need thousands of training images, even for a simple application, like a model able to differentiate a dog from a cat. The data they use to inform learning must be labeled or annotated, which means that either everything or only the most important things, must be identified or localized in the image. This is often called the “ground truth” for our deep learning algorithm. Which is what we want it to be able to find about the images fed. More, it must be labeled based on data features that help the model organize the data into patterns that produce the desired answer. Such as the name of the class in this basic example, which is a “cat” or “dog”. A properly labeled dataset provides a ground truth that the machine learning model uses to check its predictions for accuracy and to continue refining its algorithm. Which is crucial to make our overall model better.

Errors in data labeling impair the quality of the training dataset and the performance of any predictive models it is used for. To mitigate this, many companies, such as Keymakr, take a human-in-the-Loop (HITL) approach. This role is often called a “Data Labeler”, maintaining human involvement in training and testing data models throughout their iterative growth. This humanized approach is essential to create a good dataset and ensure there are no such errors that can penalize our algorithm.

Just look at the fine details and attention required to create such a great dataset from satellite photos and GPS data, annotating each element with the highest precision possible. A good algorithm with a poorly annotated dataset will perform poorly with low recognition rates. Therefore, we need a well-labeled dataset, with the least number of artifacts possible, which can be extremely hard to create when you are not an expert at labeling data.

Manual data labeling is the most time-consuming and expensive method, but it is needed for many important applications, such as autonomous vehicles, brain tumor recognition, facial recognition, and more. Fortunately for us, some companies are specialized in this service, like Keymakr. Such a company is able to provide you the data annotations you are looking for to create your ideal algorithm. Just look at how many details are required in the annotations here made by the team at Keymakr. Creating such a complete and precisely annotated dataset yourself would take hundreds of hours of work that you could put into your research and testing of your application.

Different kinds of data labeling

The simplest and most common data labeling type is classification, where we classify the images by the object’s it contains. Then, there are more complex and complete annotations for harder problems, such as semantic segmentation, bounding boxes, skeletal (mesh) annotations, and so on.