After watching Boston Dynamics’ viral new video, in which a troupe of dancing robots shows off better moves than I could ever manage, it’s hard not to envision a world where lifelike supercomputers take over. That was my first thought, and much of the world seems to share it. Tech leaders such as Elon Musk, a co-founder of OpenAI, and Sundar Pichai, the CEO of Google and its parent company Alphabet (which owns DeepMind), have publicly called for regulating artificial intelligence. But what’s behind such precautions?

AI anxiety stems from three fears: mass unemployment, an absent ethical code, and a general misunderstanding of new technology.

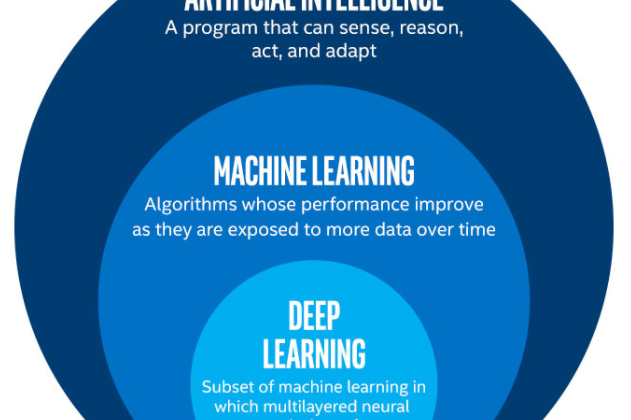

Many terms are commonly but incorrectly used interchangeably with artificial intelligence. You’ve probably heard of machine learning, neural networks, and deep learning. To work out just how capable artificial intelligence is and will be, we need to distinguish clearly between these terms.

Artificial intelligence is an umbrella term for computers performing tasks that humans are well suited for, such as speech and image recognition, problem-solving, learning, and planning. Within this umbrella term sits machine learning, which describes algorithms whose performance improves as they are exposed to more data over time. This definition is pretty reassuring, because an algorithm is just a set of rules to be followed in order to solve a problem.
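To make "performance improves as they are exposed to more data" concrete, here is a minimal sketch in Python. It is only an illustration under assumptions of my own: the hidden relationship (`target`), the evenly spaced examples, and the nearest-neighbour rule are all invented for this demo and are not taken from any particular product or library. The rule itself never changes; only the number of examples it can consult does.

```python
def target(x):
    """Hidden relationship the learner must approximate (assumed for this demo)."""
    return x * x


def make_training_set(n):
    """Return n evenly spaced labelled examples (x, y) on the interval [0, 1]."""
    return [(i / (n - 1), target(i / (n - 1))) for i in range(n)]


def predict(training_set, x):
    """1-nearest-neighbour rule: copy the label of the closest known example."""
    _, nearest_y = min(training_set, key=lambda pair: abs(pair[0] - x))
    return nearest_y


def mean_abs_error(training_set, n_test=101):
    """Average prediction error over a fixed grid of test points on [0, 1]."""
    test_points = [i / (n_test - 1) for i in range(n_test)]
    total = sum(abs(predict(training_set, x) - target(x)) for x in test_points)
    return total / n_test


# Same rule, three different amounts of data.
sizes = [2, 5, 21]
errors = [mean_abs_error(make_training_set(n)) for n in sizes]

for n, err in zip(sizes, errors):
    print(f"{n:>2} examples -> mean error {err:.3f}")
```

Running it should show the error shrinking as the training set grows, even though the program is still following one simple, fixed rule.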

But the human brain, unlike a computer, is hardly a rule-following engine: our thoughts arise from complex electrical signals that propagate through billions of neurons. This is where deep learning enters. In order for a computer to truly reach human capabilities, we must model our brains’ neural networks. Deep learning is a subset of machine learning in which multilayered neural networks learn from vast amounts of data.
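A rough sketch of what "multilayered" means, in Python. Everything here is an assumption made for illustration: the weights are set by hand rather than learned from data (real deep learning finds them through training), and the network is far smaller than anything used in practice. Each artificial "neuron" adds up its weighted inputs and fires if the total is above zero. Stacking two layers lets the network compute XOR ("one or the other, but not both"), which a single neuron of this kind cannot do.

```python
def step(z):
    """A neuron 'fires' (outputs 1) only when its total input is positive."""
    return 1 if z > 0 else 0


def layer(inputs, weights, biases):
    """Compute one layer: each row of weights, with its bias, is one neuron."""
    return [
        step(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


# Hand-picked weights (an assumption for this demo, not learned values).
HIDDEN_W = [[1.0, 1.0],   # neuron 1 fires if at least one input is on (OR)
            [1.0, 1.0]]   # neuron 2 fires only if both inputs are on (AND)
HIDDEN_B = [-0.5, -1.5]
OUTPUT_W = [[1.0, -1.0]]  # fires when OR is on but AND is off
OUTPUT_B = [-0.5]


def xor_network(x1, x2):
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = layer([x1, x2], HIDDEN_W, HIDDEN_B)
    return layer(hidden, OUTPUT_W, OUTPUT_B)[0]


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", xor_network(a, b))
```

The point of the layers is that the output neuron works on what the hidden neurons have already figured out, not on the raw inputs. Deep networks repeat this idea across many layers and millions of learned weights.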

At this point in history, deep learning is the closest we have come to recreating the human brain, but it still belongs to the first of three stages on the path to human-like capabilities. Deep learning, along with almost every other AI algorithm in use today, falls under the term artificial narrow intelligence (ANI). This just means that the program is engineered to perform only one task well. Examples include voice assistants like Siri and Alexa, Netflix’s movie recommendation engine, and Tesla’s self-driving software. If you’ve used any of these technologies, you know that they are far from replicating what humans are capable of.

The next stage in the future of AI is artificial general intelligence (AGI), sometimes called deep AI (not to be confused with deep learning, the subset of machine learning described above), followed by artificial superintelligence (ASI). While AGI aims to solve any problem in a way that’s indistinguishable from a human, ASI refers to a machine that “greatly exceeds the cognitive performance of humans,” according to philosopher Nick Bostrom, who popularized the term. Both AGI and ASI are far from reality, and Hubert Dreyfus, a philosopher and well-known AI critic who taught at MIT, argued that the subconscious processes in our minds can never be captured in formal rules. So if deep learning is the closest scientists have come to recreating the human brain, how exactly does it work?

As with any broad term, deep learning comes in different types: generative adversarial networks (GANs), autoencoders, recurrent neural networks (RNNs), convolutional neural networks (CNNs), and the deep learning models used in reinforcement learning and natural language processing (NLP).