Welcome! In this blog, I will teach you how to design your first deep learning architecture. Although this blog is aimed at beginners, I am sure it will help you brush up on a few fundamentals even if you have been developing deep learning architectures lately. So, let’s get started!

Most of us started our journey into computer science and programming by writing a debut program that prints “Hello World” to STDOUT, but did you do the same when you started training deep neural networks? If not, no worries at all, as you will have created a “Hello World” deep learning model by the end of this blog. Deep learning models are similar to the structures we used to build from Lego blocks in our childhood.

The core Lego blocks of deep neural network structures can be grouped as follows: the Core layers block (Input, Dense, Embedding etc.), Convolutional block, Pooling block (Max Pooling, Average Pooling, Global Max Pooling, Global Average Pooling), Activation block (Softmax, Sigmoid, ReLU, Leaky-ReLU, PReLU etc.), Reshaping block (Flatten, Reshape, Cropping, Upsampling, ZeroPadding etc.), Merging block (Concatenate, Add, Multiply, Subtract, Dot, Average, Max, Min etc.), Normalisation block (Batch Normalisation, Layer Normalisation), Regularization block (Dropout, Spatial Dropout etc.), Recurrent block (LSTM, GRU etc.) and Attention block. I hope you are familiar with what these blocks do; you can refer to their usage in detail here.
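As a rough map from these blocks to code, here is a minimal sketch that instantiates representative layers for each block from Keras's `keras.layers` module. The grouping into blocks and the dictionary name `BLOCKS` are just this blog's way of organising things (hypothetical, for illustration), not part of Keras itself, and argument values such as `filters=16` are arbitrary placeholders.

```python
import keras
from keras import layers

# Hypothetical grouping: example Keras layers for each "Lego block" named above.
# Argument values are arbitrary placeholders chosen for illustration only.
# keras.Input creates a symbolic input tensor rather than a layer object, so it is not listed.
BLOCKS = {
    "core": [layers.Dense(64), layers.Embedding(input_dim=1000, output_dim=32)],
    "convolutional": [layers.Conv2D(filters=16, kernel_size=3)],
    "pooling": [layers.MaxPooling2D(), layers.AveragePooling2D(),
                layers.GlobalMaxPooling2D(), layers.GlobalAveragePooling2D()],
    "activation": [layers.Softmax(), layers.Activation("sigmoid"), layers.ReLU(),
                   layers.LeakyReLU(), layers.PReLU()],
    "reshaping": [layers.Flatten(), layers.Reshape((4, 4)), layers.Cropping2D(1),
                  layers.UpSampling2D(), layers.ZeroPadding2D(1)],
    "merging": [layers.Concatenate(), layers.Add(), layers.Multiply(),
                layers.Subtract(), layers.Dot(axes=1), layers.Average(),
                layers.Maximum(), layers.Minimum()],
    "normalisation": [layers.BatchNormalization(), layers.LayerNormalization()],
    "regularization": [layers.Dropout(0.5), layers.SpatialDropout2D(0.2)],
    "recurrent": [layers.LSTM(32), layers.GRU(32)],
    "attention": [layers.Attention(), layers.MultiHeadAttention(num_heads=2, key_dim=16)],
}

# Print the Keras class name behind each member of every block.
for block, members in BLOCKS.items():
    print(f"{block:>14}: " + ", ".join(type(layer).__name__ for layer in members))
```

Note that the Merging block's “Mult” and “Sub” correspond to the `Multiply` and `Subtract` layers in Keras.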

These blocks operate in various spatial domains (1D, 2D and 3D), and their dimensionality often depends on the dimensionality of the Input sub-block of the Core layers block, which is the container for the input to a deep neural network. The dimensionality of the input in turn varies with the nature of the input signal: 3D for an RGB image (height × width × channels), 2D for a grayscale image (height × width), and 4D up to nD for richer data such as RGB videos, CT scans or meshes.
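To make these dimensionalities concrete, the sketch below uses NumPy arrays of zeros as stand-ins for real signals. The particular sizes (96 × 96 pixels, 16 frames, 64 slices) are arbitrary assumptions. Keep in mind that Keras layers also expect a leading batch dimension, so a batch of RGB images is a 4D array.

```python
import numpy as np

# Placeholder signals filled with zeros; all sizes here are arbitrary assumptions.
rgb_image = np.zeros((96, 96, 3))          # height x width x colour channels -> 3D
gray_image = np.zeros((96, 96))            # height x width -> 2D
rgb_video = np.zeros((16, 96, 96, 3))      # frames x height x width x channels -> 4D
ct_volume = np.zeros((64, 96, 96, 1))      # slices x height x width x channel -> 4D

for name, signal in [("RGB image", rgb_image), ("grayscale image", gray_image),
                     ("RGB video", rgb_video), ("CT volume", ct_volume)]:
    print(f"{name}: shape={signal.shape}, ndim={signal.ndim}")

# Keras models consume batches, so a batch of 8 RGB images gains a leading axis.
rgb_batch = np.stack([rgb_image] * 8)
print("batch of RGB images:", rgb_batch.shape)   # (8, 96, 96, 3)
```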

The core ingredients for our “Hello World” deep neural network are the Core layers blocks (Input and Dense), Convolutional blocks, Activation blocks, Normalisation blocks, Pooling blocks and Reshaping blocks.

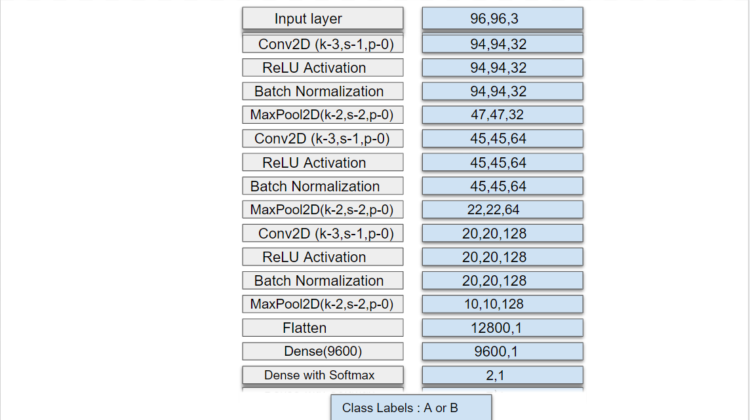

Let’s use these blocks to train a model on a dataset of n 3D input samples (assume each is 96 × 96 × 3) labelled into two classes, A and B. Our deep neural network will classify a given 3D input signal into one of these two classes. The structure of the deep learning model is shown below in Figure 1.
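Since the dataset itself is not part of this post, here is a minimal sketch that fabricates a random placeholder dataset with the same shape: n samples of 96 × 96 × 3, each labelled A or B. The value n = 32, the random pixel values and the label mapping (A → 0, B → 1) are assumptions made purely for illustration. The integer labels are one-hot encoded so that they match a two-unit softmax output.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

n = 32                                   # hypothetical number of samples
class_names = ["A", "B"]                 # assumed mapping: A -> 0, B -> 1

# Random pixel values in [0, 1) stand in for real 96 x 96 x 3 inputs.
x = rng.random((n, 96, 96, 3), dtype=np.float32)

# Random integer labels (0 or 1), then one-hot encoded by indexing an identity matrix.
y_int = rng.integers(0, len(class_names), size=n)
y_onehot = np.eye(len(class_names), dtype=np.float32)[y_int]

print(x.shape, y_onehot.shape)   # (32, 96, 96, 3) (32, 2)
print(class_names[y_int[0]], y_onehot[0])
```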

In Figure 1, the stack on the left shows the network architecture built from the ingredients (a.k.a. blocks), and the stack on the right shows the output shape after each layer. Note that the dimensions of the tensor/array remain unchanged after it passes through a Normalisation or Activation block (Batch Normalisation and ReLU Activation). However, the output dimensions after a Convolutional or Pooling block can be calculated using the formula in Figure 2 and cross-checked against the dimensions in the right stack of Figure 1. The Flatten layer from the Reshaping block flattens its input into a one-dimensional array, whereas the Dense layer from the Core layers block takes an n1-dimensional vector and outputs an n2-dimensional feature vector. The Dense layer in the last position is paired with a softmax activation to output the probabilities of the two classes. Applying the argmax function to these probabilities gives the index of the predicted class, which can then be converted into a one-hot encoding of the class label.
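The shape bookkeeping described above can be reproduced with plain NumPy. The sketch below is not Keras code and omits the batch dimension: it uses a hypothetical 22 × 22 × 32 feature map and a randomly initialised weight matrix (both assumptions, not the values from Figure 1) to show that ReLU keeps the shape, Flatten produces a one-dimensional array, a Dense layer maps an n1-dimensional vector to an n2-dimensional one, and softmax followed by argmax yields the predicted class index and its one-hot encoding.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Hypothetical feature map coming out of the last pooling block (H x W x C).
feature_map = rng.standard_normal((22, 22, 32))

# Activation block: ReLU is applied element-wise, so the shape is unchanged.
activated = np.maximum(feature_map, 0.0)
assert activated.shape == feature_map.shape

# Reshaping block: Flatten turns the 3D tensor into a 1D vector of length n1.
flat = activated.reshape(-1)                    # n1 = 22 * 22 * 32 = 15488

# Core block: a Dense layer is an affine map from n1 inputs to n2 units (here n2 = 2).
n2 = 2
weights = rng.standard_normal((flat.size, n2)) * 0.01
bias = np.zeros(n2)
logits = flat @ weights + bias

# Softmax turns the logits into class probabilities that sum to 1.
exp = np.exp(logits - logits.max())             # subtract the max for numerical stability
probs = exp / exp.sum()

# argmax gives the predicted class index; indexing an identity matrix one-hot encodes it.
predicted_index = int(np.argmax(probs))
one_hot = np.eye(n2)[predicted_index]

print(flat.shape, logits.shape, probs, predicted_index, one_hot)
```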

Note: the formula in Figure 2 contains a ceiling function, and its symbols n, p, f and s denote the input size fed to a block (n), the padding (p), the kernel/filter size (f) and the stride (s).
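Because Figure 2 is an image, here is a hedged sketch of the commonly used form of the output-size formula, out = floor((n + 2p - f) / s) + 1, along one spatial axis. For integer arguments it equals the ceiling form ceil((n + 2p - f + 1) / s), which is one way a ceiling function can appear; the exact form drawn in Figure 2 may differ. The layer settings in the usage lines are hypothetical examples, not the ones from Figure 1.

```python
def conv_output_size(n: int, f: int, p: int = 0, s: int = 1) -> int:
    """Output size of a convolution or pooling window along one spatial axis.

    n: input size, f: kernel/filter (or pool) size, p: padding on each side, s: stride.
    Uses the floor form: floor((n + 2p - f) / s) + 1.
    """
    if n + 2 * p < f:
        raise ValueError("kernel is larger than the padded input")
    return (n + 2 * p - f) // s + 1


def conv_output_size_ceil(n: int, f: int, p: int = 0, s: int = 1) -> int:
    """Equivalent ceiling form, ceil((n + 2p - f + 1) / s), using integer arithmetic."""
    numerator = n + 2 * p - f + 1
    return (numerator + s - 1) // s          # integer ceiling division for numerator >= 1


# Hypothetical layer settings on a 96 x 96 input.
print(conv_output_size(96, f=3))             # 3x3 conv, no padding, stride 1 -> 94
print(conv_output_size(94, f=2, s=2))        # 2x2 max pooling, stride 2 -> 47
print(conv_output_size(96, f=3, p=1))        # 3x3 conv, padding 1 -> 96
print(conv_output_size(47, f=3, s=2))        # 3x3 conv, stride 2 -> 23
```

With padding p = 0 this matches what Keras calls `padding="valid"`, and with stride 1 and p = (f - 1) / 2 for odd f the output size equals the input size, which is what `padding="same"` gives.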

The network has been built using the Keras deep learning API and is available on Google Colab and GitHub.
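The original notebook is linked rather than reproduced here, so the following is only a minimal sketch of a comparable “Hello World” network in Keras, assembled from the same blocks (Input, Conv2D, BatchNormalization, ReLU, MaxPooling2D, Flatten and Dense with softmax). The number of filters, kernel sizes, the hidden Dense width, the layer names and the optimiser are assumptions, not the values from Figure 1 or the author's code.

```python
import keras
import numpy as np
from keras import layers


def build_hello_world_model(input_shape=(96, 96, 3), num_classes=2):
    """Hypothetical small CNN built from the blocks described in this post."""
    inputs = keras.Input(shape=input_shape, name="image")           # Core: Input

    x = layers.Conv2D(16, kernel_size=3, name="conv1")(inputs)      # 96 -> 94
    x = layers.BatchNormalization(name="bn1")(x)                    # shape unchanged
    x = layers.Activation("relu", name="relu1")(x)                  # shape unchanged
    x = layers.MaxPooling2D(pool_size=2, name="pool1")(x)           # 94 -> 47

    x = layers.Conv2D(32, kernel_size=3, name="conv2")(x)           # 47 -> 45
    x = layers.BatchNormalization(name="bn2")(x)
    x = layers.Activation("relu", name="relu2")(x)
    x = layers.MaxPooling2D(pool_size=2, name="pool2")(x)           # 45 -> 22

    x = layers.Flatten(name="flatten")(x)                           # 22 * 22 * 32 = 15488
    x = layers.Dense(64, activation="relu", name="dense1")(x)       # Core: Dense
    outputs = layers.Dense(num_classes, activation="softmax", name="output")(x)

    return keras.Model(inputs, outputs, name="hello_world_cnn")


model = build_hello_world_model()
model.compile(optimizer="adam", loss="categorical_crossentropy", metrics=["accuracy"])
model.summary()   # lists each layer's output shape, like the right-hand stack of Figure 1

# Smoke test on a dummy batch: one row of two class probabilities per input.
probs = model.predict(np.zeros((2, 96, 96, 3), dtype="float32"), verbose=0)
print(probs.shape)
```

Given an array of images `x` of shape (n, 96, 96, 3) and one-hot labels `y` of shape (n, 2), training is then a single call such as `model.fit(x, y, epochs=5, batch_size=8)`, with the epoch count and batch size again being placeholders.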

Thank you!