The choice of a data source fell on the largest Russian social network — VK. And here is why: VK has a post suggestion mechanism, where people can suggest their own content to be published in large groups. This leads VK to contain a lot more thematic visual content than other social networks. For example, the largest group about the aesthetics of Russian ghettos contains around 200 thousand images!

For data scrapping, I used a python wrapper for VK API. In case one would like to reproduce my pipeline, here are API methods descriptions, and below is an example of how to download images from the latest group post.

To filter downloaded images I used EfficientDet and NLTK. The idea of using a NN detector is to find all objects that it can find and throw out those images leaving only landscapes footage. Natural Language Toolkit a bit helped me to filter out posts by their captions using stemming. Finally, I got around 10k images of Russian landscapes.

I used the famous Nvidia architecture StyleGAN2 with decoder augmentations. Authors claim that augmentations allow better results on small datasets (under 30k images). Here is the official code, and here is my “duct tape” modification for Colab training and video generation (will expand on it below).

The official paper implementation uses the .tfrecord format to store data for training (how to convert images to .tfrecord is described here). When running on Colab this feature can drastically reduce the number of images that you could process because the official code doesn’t use any compression in tfrecords. However, there is another modification that uses compression and probably should allow you to work with larger datasets on Colab (I didn’t try it yet).

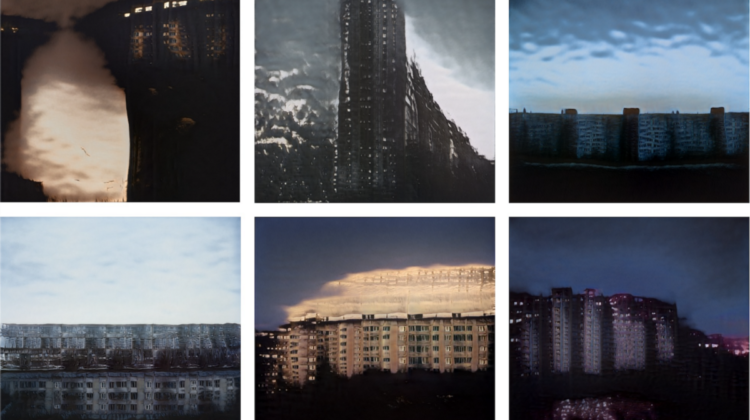

On Colab each epoch is taking about half an hour for a dataset of 10k images in 1024 resolution. Fun fact: on the very first epochs the colormap has rapidly transformed from random bright colors into 50-shades-of-gray 🙂

When the first impressive results have achieved an idea to create a music video for my friends-musicians emerged. But how to make the video at least a bit consistent with music? Here the latent space of the generative model may help.

The thing is, during training, the neural network has learned how to describe a whole picture with a vector of dimensionality 512. And all the parameters of the final painting are embedded in this vector. So one may find a combination of those parameters that correspond to, for example, time of day, or height of the building, or the number of windows. This may be a complex task itself (however, there are some approaches), so for my purposes, I decided to simply interpolate between images keyframe-to-keyframe using their latent vectors. For this purpose, I changed only a few lines of code in generate.py to make it read a list of keyframes with corresponding latent vectors and generate N intermediate frames linearly interpolating between them with 60fps.

With the advent of such approaches as StyleGAN, generative art received a new round of growth. Some are even selling generative art on Christie’s auction without writing a line of code. Also, Colab drastically reduces the entry-level and the amount of time to make something interesting work. Hope that this article also will help someone to expand their creativity using modern AI methods.