Safety and efficacy are of paramount importance in the development of medical devices. To its credit, the U.S. Food and Drug Administration (FDA) has spent the last few decades developing and refining a highly structured and largely consistent framework for medical device evaluation. The central anchor of this framework is the User Needs, which include the Intended Use and the Indications for Use. The Intended Use is what the medical device is used for, and the Indications for Use are the set of reasons, circumstances, and environments for which the device is used.

Medical devices are never approved by the FDA for nonspecific usage. Instead, they are approved for use in a highly specific context: clinical setting, demographic, user group, hardware accessory, etc. Together, these attributes are the Indications for Use of the device, and they define the scope within which the FDA deems the device to be safe and effective. As such, any academic exercise that sets out to evaluate the safety and efficacy of an FDA-approved device must do so within the device’s Indications for Use (or reasonably foreseeable misuse). This is especially important in Artificial Intelligence, an area of rapidly expanding importance and one whose many stakeholders need to be properly informed.

The “real world” for an approved medical device is defined by its Intended Uses as well as its Indications for Use, together constituting the User Needs. The “real world” also extends to reasonably foreseeable misuses of the medical device. As such, AI Software as a Medical Device (AI SaMD) manufacturers need to take reasonably foreseeable misuses seriously into account. Such considerations are central to the design process and are included in a proper risk management plan. The International Organization for Standardization’s ISO 14971:2019 document specifies how risk management is to be handled during the manufacture of medical devices. This document undergirds the overall process of medical device development and dovetails with Design Controls (21 CFR 820.30), Software Development Lifecycle (IEC 62304), Usability (IEC 62366), and the FDA guidance document on Human Factors Validation Testing.

Design Controls are the backbone of the overall framework of medical device development and regulation. 21 CFR 820.30 describes Design Controls and specifies that they are required for any medical device marketed in the U.S. Figure 1 below illustrates the Design Controls waterfall diagram.

In the Design Control framework, every User Need for a device is translated into a Design Input, i.e., a technical description of what is desired. This in turn is engineered (the design process) to yield the corresponding Design Output, an attribute or component of the medical device. Each Design Output must then be checked to ensure that it matches the Design Input that specified it. This checking process is called Verification. The aggregation of all Design Outputs is the finished device, which must itself be Validated by checking that it aligns with and satisfies the User Needs. In other words, the finished device must be shown to satisfy the Intended Uses and Indications for Use, and thereby to fulfill its raison d’être, the purpose for which it was designed. Clinical trials are an example of medical device validation.
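The traceability chain described above (User Need, Design Input, Design Output, then Verification and Validation) can be sketched as a small data model. This is only an illustrative sketch: the class names, helper functions, and example entries are hypothetical and are not taken from 21 CFR 820.30 or from any actual device file.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DesignOutput:
    description: str
    verified: bool = False  # True once checked against its Design Input


@dataclass
class DesignInput:
    requirement: str
    outputs: List[DesignOutput] = field(default_factory=list)


@dataclass
class UserNeed:
    statement: str
    inputs: List[DesignInput] = field(default_factory=list)
    validated: bool = False  # True once the finished device is shown to meet it


def untraced_needs(needs: List[UserNeed]) -> List[str]:
    """User Needs that have not been translated into any Design Input."""
    return [need.statement for need in needs if not need.inputs]


def unverified_outputs(needs: List[UserNeed]) -> List[str]:
    """Design Outputs not yet verified against the input that specified them."""
    return [
        output.description
        for need in needs
        for design_input in need.inputs
        for output in design_input.outputs
        if not output.verified
    ]


def unvalidated_needs(needs: List[UserNeed]) -> List[str]:
    """User Needs the finished device has not yet been validated against."""
    return [need.statement for need in needs if not need.validated]


# Hypothetical entries, for illustration only.
needs = [
    UserNeed(
        statement="Screen adults for a retinal disease in primary care",
        inputs=[
            DesignInput(
                requirement="Return a result for every gradable image",
                outputs=[
                    DesignOutput("Classifier module", verified=True),
                    DesignOutput("Image quality check", verified=False),
                ],
            )
        ],
    ),
    UserNeed(statement="Usable by non-specialist operators"),
]

print("Untraced needs:", untraced_needs(needs))
print("Unverified outputs:", unverified_outputs(needs))
print("Unvalidated needs:", unvalidated_needs(needs))
```

The point of the structure is that Verification is asked of each Design Output against its Design Input, whereas Validation is asked of the finished device against the User Needs.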

Improper validation of AI medical devices is a public safety concern because it can either make a good device look bad or a bad device look good. The concept of ‘safety and efficacy’ is inextricably linked to the Intended Use and the Indications for Use of the AI Software Medical Device.

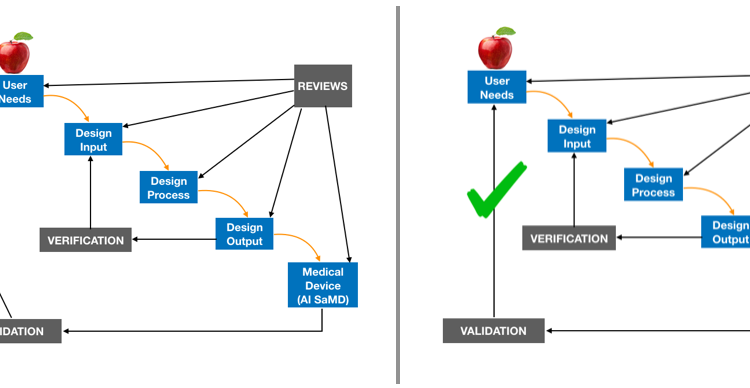

The validation step is where some performance evaluation studies may err. For instance, the left side of Figure 2 below illustrates improper validation: the performance evaluation study neither takes into account nor matches the Intended Use and Indications for Use of the medical device it is looking to assess. Improper validation compares apples to oranges. This is problematic because it runs the risk of erroneously concluding that devices that are safe and effective are not. Similarly, improper validation runs the risk of declaring an unsafe and ineffective medical device safe and effective. Therefore, properly matching the performance assessment to the Intended Use and Indications for Use of a subject medical device is a matter of public safety.
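One way to make this matching explicit in a performance study is to check each study case against the device's Indications for Use before computing any metrics, and to report in-scope and out-of-scope cases separately. The sketch below assumes a simplified, hypothetical representation of the Indications for Use (minimum age, condition, clinical setting, hardware accessory). Real indications are stated in the device's labeling and are usually richer than this.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple


@dataclass(frozen=True)
class IndicationsForUse:
    # Hypothetical, simplified attributes; actual labeling defines the real scope.
    min_age: int
    conditions: FrozenSet[str]
    settings: FrozenSet[str]
    accessories: FrozenSet[str]


@dataclass(frozen=True)
class StudyCase:
    age: int
    condition: str
    setting: str
    accessory: str


def scope_deviations(case: StudyCase, ifu: IndicationsForUse) -> List[str]:
    """Name each attribute of a study case that falls outside the indications."""
    deviations = []
    if case.age < ifu.min_age:
        deviations.append("age")
    if case.condition not in ifu.conditions:
        deviations.append("condition")
    if case.setting not in ifu.settings:
        deviations.append("setting")
    if case.accessory not in ifu.accessories:
        deviations.append("accessory")
    return deviations


def split_by_scope(
    cases: List[StudyCase], ifu: IndicationsForUse
) -> Tuple[List[StudyCase], List[StudyCase]]:
    """Separate cases into (in scope, out of scope) so each can be reported on its own."""
    in_scope = [c for c in cases if not scope_deviations(c, ifu)]
    out_of_scope = [c for c in cases if scope_deviations(c, ifu)]
    return in_scope, out_of_scope


# Hypothetical device scope and study cohort, for illustration only.
ifu = IndicationsForUse(
    min_age=22,
    conditions=frozenset({"diabetes"}),
    settings=frozenset({"primary care"}),
    accessories=frozenset({"camera-A"}),
)
cases = [
    StudyCase(age=54, condition="diabetes", setting="primary care", accessory="camera-A"),
    StudyCase(age=15, condition="diabetes", setting="primary care", accessory="camera-A"),
    StudyCase(age=60, condition="diabetes", setting="emergency department", accessory="camera-B"),
]

in_scope, out_of_scope = split_by_scope(cases, ifu)
print(f"{len(in_scope)} in scope, {len(out_of_scope)} out of scope")
for case in out_of_scope:
    print(case, "->", scope_deviations(case, ifu))
```

Performance computed on the in-scope cases speaks to the device as approved. Performance on the out-of-scope cases can still be informative, but it should be reported separately and labeled as outside the Indications for Use.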

There is always good reason to evaluate approved medical devices outside of their Intended Use and Indications for Use. Furthermore, this process is critically important and fits inside the framework of risk management. However, what is not scientifically sound is to evaluate an AI SaMD outside of its Indications for Use and Intended Use (or reasonably foreseeable misuse) without explicitly acknowledging this deviation and without contextualizing the results of such an exercise.

Medical AI software devices, like all medical devices, are always approved by the FDA for use within a highly specific and well-defined scope: the Intended Use and Indications for Use. The performance of approved devices should be evaluated within their particular Intended Uses and Indications for Use (or reasonably foreseeable misuses). Whenever their performance is evaluated outside of this scope, the reasons for and results of such an exercise should be clearly contextualized and communicated.

BIO: Dr. Stephen G. Odaibo is CEO & Founder of RETINA-AI Health, Inc., and is on the Faculties of the MD Anderson Cancer Center. He is a Physician, Retina Specialist, Mathematician, Computer Scientist, and Full Stack AI Engineer. He is the first Ophthalmologist in the world to obtain advanced degrees in both Mathematics and Computer Science. In 2017 Dr. Odaibo received UAB College of Arts & Sciences’ highest honor, the Distinguished Alumni Achievement Award. In 2005 he won the Barrie Hurwitz Award for Excellence in Neurology at Duke University School of Medicine, where he topped the class in Neurology and in Pediatrics. He is author of the books “Quantum Mechanics & The MRI Machine” and “The Form of Finite Groups: A Course on Finite Group Theory.” Dr. Odaibo chaired the “Artificial Intelligence & Tech in Medicine Symposium” at the 2019 National Medical Association Meeting. Through RETINA-AI, he and his exceptionally talented team are building AI solutions to address the world’s most pressing healthcare problems. He resides in Houston, Texas with his family.

REFERENCES:

1. 21 CFR 820.30: Design Controls

2. Applying Human Factors & Usability Engineering to Medical Devices

3. ISO 14971: Application of Risk Management to Medical Devices

4. IEC 62304: Medical Device Software Development Lifecycle