the weights associated with, say, x2 to y4, or y2 to z1, are basically pointless to the result of the calculation, so it makes total sense to prune those synapses; for however many epochs remain, those values no longer need to be calculated. Eliminating 2 synapses saves 2 calculations per epoch, so over 1,000 epochs that is 2,000 calculations saved. On this tiny network, which needs 16,000 calculations over 1,000 epochs, that is a net saving of 12.5%. On a bigger network, those numbers could easily be far bigger, and the net saving much larger.
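To make the idea concrete, here is a minimal Python sketch of pruning with a 0/1 mask, so that pruned synapses are simply never computed. It assumes magnitude pruning (dropping any weight whose absolute value is below a threshold) as the test for "pointless", which the post doesn't specify; the function names, the threshold, and the weight values are all hypothetical.

```python
# Minimal sketch: magnitude-based pruning with a mask.
# Assumption: a weight is "pointless" if |w| is below a chosen threshold.

def prune_by_magnitude(weights, threshold):
    """Return a 0/1 mask shaped like `weights`.

    weights[i][j] is the synapse from input neuron i to output neuron j.
    A synapse is kept (1) if |w| >= threshold, otherwise pruned (0).
    """
    return [[1 if abs(w) >= threshold else 0 for w in row] for row in weights]


def forward_layer(inputs, weights, mask):
    """Compute one layer's weighted sums (no activation), skipping pruned synapses.

    Returns the outputs and the number of multiplications actually performed.
    """
    n_out = len(weights[0])
    outputs = [0.0] * n_out
    mults = 0
    for i, x in enumerate(inputs):
        for j in range(n_out):
            if mask[i][j]:  # pruned synapses are never calculated
                outputs[j] += x * weights[i][j]
                mults += 1
    return outputs, mults


# Hypothetical 2-input, 2-output layer where one weight barely matters.
w = [[0.8, 0.001],
     [-0.5, 0.3]]
mask = prune_by_magnitude(w, threshold=0.01)
out, mults = forward_layer([1.0, 2.0], w, mask)
print(mults)  # 3 multiplications instead of 4
```

The mask is computed once and reused on every later pass, which is where the per-epoch saving comes from.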

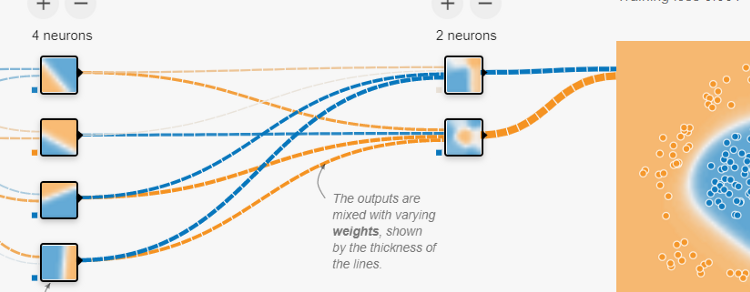

An example might look like this:

Over 1,000 epochs, this is the difference between 12,000 calculations and 42,000. That's a net saving of over 71% of compute, assuming this pruning doesn't degrade the results of the network (or at least, not by too much).
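The arithmetic in both examples can be checked with a small helper. It assumes, as the post does, that each remaining synapse costs one calculation per epoch and that total cost scales linearly with the number of epochs; `pruning_savings` is a hypothetical name.

```python
def pruning_savings(calcs_per_epoch_before, calcs_per_epoch_after, epochs):
    """Return (calculations saved, percentage saved) over `epochs` epochs.

    Assumes one calculation per synapse per epoch, so the totals are
    simply per-epoch counts multiplied by the number of epochs.
    """
    before = calcs_per_epoch_before * epochs
    after = calcs_per_epoch_after * epochs
    saved = before - after
    return saved, 100.0 * saved / before


# 16 -> 14 per epoch is the tiny network with 2 synapses pruned;
# 42 -> 12 per epoch is the larger example.
for before, after in [(16, 14), (42, 12)]:
    saved, pct = pruning_savings(before, after, epochs=1000)
    print(f"{before}->{after} per epoch: {saved:,} saved ({pct:.1f}%)")
# 16->14 per epoch: 2,000 saved (12.5%)
# 42->12 per epoch: 30,000 saved (71.4%)
```

Under this linear model the percentage does not depend on the number of epochs; only the absolute number of calculations saved grows with training length.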

Conclusion: I guess an easier way to explain it is that human brains do it, probably to save and better allocate resources. I don't think human brains operate in the same serialized way computers do, but I guess the underlying reason is the same: saving resources. The underlying computing reason is no different from taking a big file and zipping it. That said, pruning only makes sense once the path of computation through this neural network is well understood, so it is a much later consideration, but still one that can result in huge optimization.