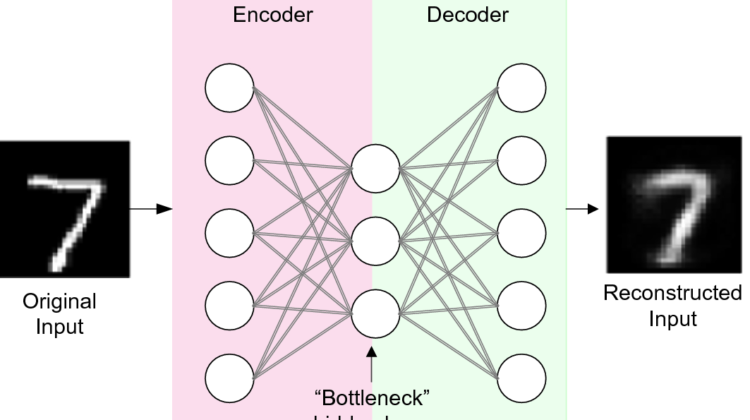

An auto-encoder is an unsupervised learning algorithm in which an artificial neural network (ANN) is designed to perform data encoding followed by data decoding, in order to reconstruct its own input.

No worries!! The definition above uses a few terms that can make it hard to follow for beginners and for people who are not from this domain. To understand it better, first go through the following terms:

- Unsupervised Learning: a machine learning technique in which a model is trained on unlabeled data without any guidance. The model is allowed to work on its own and discover patterns in the data. Clustering and association are two categories of unsupervised algorithms.

- Artificial Neural Network (ANN): a mathematical model loosely inspired by the brain. It is built from layers of simple connected units ("neurons") and can learn nonlinear relationships between inputs and outputs, processing many signals in parallel, much as the human brain does.

- Data Encoding and Decoding: data encoding maps (sensory) input data to a different, often lower-dimensional and compressed, feature representation. Data decoding maps that feature representation back to the input data.
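To make encoding and decoding concrete, here is a minimal NumPy sketch. It assumes a hypothetical input of 8 features squeezed into a 3-number code; the names `encode`, `decode`, `W_enc` and `W_dec` are illustrative, not from any library. The weights are random (untrained), so the reconstruction is not meaningful yet; the point is only how the shapes change.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

input_dim, code_dim = 8, 3  # hypothetical sizes: 8 input features -> 3-number code

# Randomly initialised (untrained) weights; training would adjust these.
W_enc = rng.normal(scale=0.1, size=(input_dim, code_dim))
b_enc = np.zeros(code_dim)
W_dec = rng.normal(scale=0.1, size=(code_dim, input_dim))
b_dec = np.zeros(input_dim)

def encode(x):
    """Encoder: map inputs of shape (n, 8) to compressed codes of shape (n, 3)."""
    return np.tanh(x @ W_enc + b_enc)

def decode(z):
    """Decoder: map codes of shape (n, 3) back to the input space, shape (n, 8)."""
    return z @ W_dec + b_dec

x = rng.normal(size=(5, input_dim))  # 5 example inputs
z = encode(x)
x_hat = decode(z)
print(x.shape, z.shape, x_hat.shape)  # (5, 8) (5, 3) (5, 8)
```

In a trained autoencoder, these weights would be learned so that `x_hat` ends up close to `x`.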

Now, in simple words –

An auto-encoder is a mathematical model that trains on unlabeled data. It maps the input data to a compressed feature representation and then reconstructs the input data from that representation.
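The sketch below shows what "training" means here: a tiny autoencoder with a tanh encoder and a linear decoder, trained by plain gradient descent (backpropagation written out by hand) to minimise the mean squared reconstruction error. The synthetic data, layer sizes, learning rate and number of steps are illustrative assumptions, not values from any particular library or paper.

```python
import numpy as np

rng = np.random.default_rng(seed=42)

# Hypothetical unlabeled data: 200 samples, 6 features that really vary along only 2 directions.
latent = rng.normal(size=(200, 2))
X = latent @ rng.normal(size=(2, 6)) + 0.05 * rng.normal(size=(200, 6))
X = (X - X.mean(axis=0)) / X.std(axis=0)  # standardise each feature

d, k = X.shape[1], 2  # input size and bottleneck (code) size
W1 = rng.normal(scale=0.5, size=(d, k))   # encoder weights
b1 = np.zeros(k)
W2 = rng.normal(scale=0.5, size=(k, d))   # decoder weights
b2 = np.zeros(d)
lr = 0.05

losses = []
for step in range(3000):
    # Forward pass: encode to a 2-number code, then decode back to 6 features.
    H = np.tanh(X @ W1 + b1)
    X_hat = H @ W2 + b2
    losses.append(np.mean((X_hat - X) ** 2))  # reconstruction error (MSE); the target is X itself

    # Backward pass: gradients of the MSE with respect to every parameter.
    dX_hat = 2 * (X_hat - X) / X.size
    dW2, db2 = H.T @ dX_hat, dX_hat.sum(axis=0)
    dZ = (dX_hat @ W2.T) * (1 - H ** 2)  # chain rule through tanh
    dW1, db1 = X.T @ dZ, dZ.sum(axis=0)

    # Plain gradient-descent update.
    W1 -= lr * dW1
    b1 -= lr * db1
    W2 -= lr * dW2
    b2 -= lr * db2

print(f"MSE before training: {losses[0]:.3f}, after: {losses[-1]:.3f}")
```

The key point is that the target is the input itself (`X`), so no labels are needed; only the reconstruction error drives learning.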

Autoencoders can be used to remove noise, to colourise images, and for various other purposes like –

- Dimensionality Reduction : Dimensionality reduction is the process of converting data with a large number of dimensions into data with fewer dimensions, while ensuring that it still conveys similar information concisely. The encoder's compressed output serves as this lower-dimensional representation.

- Image Denoising : A noisy image can be given as input to the autoencoder, and a denoised image is produced as output. The autoencoder tries to denoise the image by learning the latent features of the image and using them to reconstruct an image without noise. The reconstruction error can be measured as a distance between the pixel values of the output image and the ground-truth (clean) image, for example the mean squared error.

- Feature Extraction : Once the model has been fit on the training dataset, the reconstruction (decoding) part of the model can be discarded, and the model up to the bottleneck (the narrow middle layer) can be used on its own, since only the encoder is required. The output of the model at the bottleneck is a fixed-length vector that provides a compressed representation of the input data.

- Data Compression : Data compression is the process of reducing the number of bits needed to represent data. Compressing data can save storage capacity, speed up file transfer, and decrease costs for storage hardware and network bandwidth. Autoencoders can generate a reduced representation of input data; this compression is lossy and works best on data similar to what the model was trained on.

- Removing Watermarks from Images
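The uses listed above all rely on the same bottleneck. The sketch below illustrates them on hypothetical toy data (16-pixel "images" built from two underlying patterns): the encoder output is a fixed-length feature vector (dimensionality reduction, feature extraction, compression), and decoding it gives back a cleaner image (denoising). As a shortcut, it assumes a *linear* autoencoder, whose optimal weights under a mean-squared-error loss span the same subspace as PCA, and reads them off an SVD instead of training with backpropagation. A real image autoencoder would usually be nonlinear (often convolutional) and, for denoising, trained on noisy-input/clean-target pairs.

```python
import numpy as np

rng = np.random.default_rng(seed=1)

# Hypothetical toy "images": 16 pixels each, built from 2 underlying patterns.
patterns = rng.normal(size=(2, 16))

def make_images(n):
    return rng.normal(size=(n, 2)) @ patterns

train_clean = make_images(500)
test_clean = make_images(100)
test_noisy = test_clean + 0.3 * rng.normal(size=test_clean.shape)

# Shortcut (assumption): an optimal linear autoencoder with MSE loss spans the
# PCA subspace, so its weights are taken from an SVD instead of backpropagation.
k = 2                                    # bottleneck size
mean = train_clean.mean(axis=0)
_, _, Vt = np.linalg.svd(train_clean - mean, full_matrices=False)
W_enc = Vt[:k].T                         # (16, 2) encoder weights
W_dec = Vt[:k]                           # (2, 16) decoder weights

def encode(x):
    return (x - mean) @ W_enc            # fixed-length code: 2 numbers per image

def decode(z):
    return z @ W_dec + mean

def mse(a, b):
    # Reconstruction error: mean squared distance between pixel values.
    return np.mean((a - b) ** 2)

codes = encode(test_noisy)               # feature extraction: only the encoder is needed
denoised = decode(codes)                 # reconstruction from the compressed code

print("code shape:", codes.shape)
print(f"noisy vs clean MSE:    {mse(test_noisy, test_clean):.4f}")
print(f"denoised vs clean MSE: {mse(denoised, test_clean):.4f}")
```

Because the bottleneck only has room for the two patterns, most of the random noise (which spreads over all 16 pixels) cannot pass through it, which is why the denoised error comes out lower than the noisy one.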

Autoencoders also have some limitations:

- An autoencoder learns to capture as much information as possible about its input, rather than the information that is most relevant to the task at hand.

- Training an autoencoder requires lots of data, processing time, hyperparameter tuning, and model validation before you can even start building the real model.

- Because an autoencoder is trained with backpropagation to minimise a reconstruction loss through a narrow bottleneck, crucial information can be lost when the input is reconstructed.
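To make the limitations about relevant information and information loss concrete, here is a small sketch on hypothetical data with two strong factors and one weak "detail" factor that we pretend is the crucial information (say, a faint defect in an image). It again assumes an optimal linear autoencoder with a two-number bottleneck, obtained via SVD (the PCA equivalence) rather than backpropagation. The overall reconstruction error comes out small, yet the weak factor is essentially lost, because the loss rewards keeping the largest variations, not the most relevant ones.

```python
import numpy as np

rng = np.random.default_rng(seed=7)

# Hypothetical data: two large-variance factors plus one small "detail" factor.
n, d = 1000, 10
basis = np.linalg.qr(rng.normal(size=(d, 3)))[0].T   # 3 orthonormal directions, shape (3, 10)
factors = rng.normal(size=(n, 3)) * np.array([3.0, 2.0, 0.2])
X = factors @ basis

# Optimal linear autoencoder with a 2-number bottleneck (PCA shortcut, an assumption).
mean = X.mean(axis=0)
_, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
X_hat = (X - mean) @ Vt[:2].T @ Vt[:2] + mean

overall_mse = np.mean((X - X_hat) ** 2)
detail = (X - mean) @ basis[2]            # the small factor in each sample
detail_kept = (X_hat - mean) @ basis[2]   # what survives reconstruction
print(f"overall MSE: {overall_mse:.4f}")
print(f"correlation of detail before/after: {np.corrcoef(detail, detail_kept)[0, 1]:.2f}")
```

A low overall error therefore does not guarantee that the detail you care about was preserved; it only says that, on average, the largest variations were reconstructed well.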

Extra Cheese 🙂 – An auto-encoder's structure looks like a diabolo (wide at both ends and narrow in the middle), which is why it is also called a Diabolo network.

More curious about the implementation of auto-encoders? Please refer to the link to see how auto-encoders are used for image denoising.

Thanks for reading. Happy Learning!!