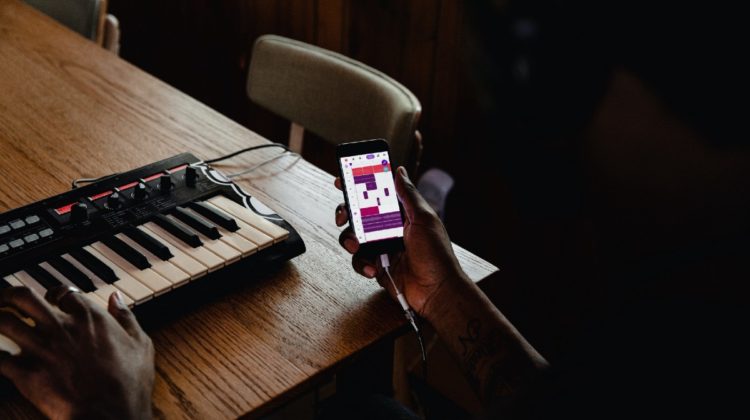

Spotify is currently launching Soundtrap for Music Makers. Following Soundtrap for Storytellers, a mobile app for creating podcasts, Soundtrap for Music Makers lets you produce music on your phone or in the web browser and collaborate with other artists. Making music on a small smartphone display is not the most comfortable experience, but the app sheds some light on where music making is headed.

When asked about music production on a laptop, Oak Felder once said:

“at the end of the day I could make something that sounds just as good as something that I would do in this studio”.

The shift from owning a studio full of hardware to sitting in your bedroom with a laptop on your knees, making beats that will get millions of streams, happened long ago. It is a democratization of music of sorts: anyone willing to geek out over the nitty-gritty details of production techniques can take part in professional music making. But that is not the end of the story:

In 2016, the plugin manufacturer iZotope introduced the Track Assistant in its Neutron Suite, which uses AI to mix audio. Since then, other AI music assistants have followed, such as Ozone for mastering. The web service LANDR takes a similar approach. While not yet perfect, these tools give inexperienced music producers a huge advantage, lowering the barrier to entry for making music that can compete on some level with professional productions.

But what about the musical side of things?

A lot of today's music is already heavily sample-based, meaning that producers take small audio snippets and arrange them into a song structure. Compared to a musician who had to practice an instrument for years to produce a somewhat coherent sequence of sounds, this again lowers the barrier to entry quite a bit. Plugins like Captain Chords help the musically inexperienced find chord progressions, a task that, depending on the complexity of the sequence, would otherwise take some time and practice.

The field of musical assistance in particular is destined to become an AI domain: AI models such as Aiva and OpenAI’s Jukebox have made headlines for generating music. Even though the musical content generated by AIs so far has been far from a satisfying musical experience (unless heavily curated and reworked by human artists), the progress in the field is amazing, and it has already inspired artists to ‘collaborate’ with AIs by arranging the musical bits an AI produces into a somewhat enjoyable structure.

This is where the next shift in music production is going to happen: AI assisting humans on a musical level, suggesting arrangements for a given melody or helping to produce similar sounds or styles. It is only a matter of time until this becomes a substantial part of music making.

To me, this is reminiscent of what happened in the visual arts years ago: producing a photo-realistic picture used to be a hard-earned craft, while today every smartphone has a high-quality camera. That did not make artists obsolete. It might even have attracted a broader audience, including people who wouldn’t have looked into the art form otherwise. But it certainly changed our perspective on the visual arts and what we find valuable about them. A similar thing is arguably happening in music right now.

In that sense, Soundtrap for Music Makers is an attempt to give every smartphone for music what the camera gave it for photography. The app might not have the power and flexibility yet. But combined with musical AI assistants, we will certainly see music become a more and more approachable art form: with many of the technical issues left to the AI, making music will be less about technical decisions and more about stylistic and creative choices.

This is when making a song might function more like creating a TikTok video…