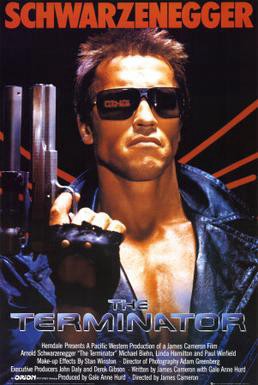

Like a lot of people, I watched and enjoyed the original ‘The Terminator’ movie starring Arnold Schwarzenegger and Linda Hamilton, a great example of AI run amok.

The Terminator was terrifying on several levels, not least because it popularized worldwide the idea that artificial intelligence (AI) could eventually slip out of human control and pose an existential threat to the human race.

The Terminator painted a compelling picture of the catastrophe that AI might unleash. The movie was not only great entertainment; it also made people start to think seriously about an extremely important “What if?” scenario.

The public debate about the dangers of AI versus its overall benefits to humanity has grown in intensity in the years since The Terminator first burst onto the scene. That’s primarily because AI has moved from the realm of fictional, theoretical speculation to actual inventions with practical uses in the real world, from fairly simple robotic carpet cleaners to complex robots that can talk, think, and even act upon those thoughts using the rules-based, analytical decision-making processes programmed into them.

“Artificial intelligence (AI) is intelligence demonstrated by machines, unlike the natural intelligence displayed by humans and animals. Leading AI textbooks define the field as the study of “intelligent agents”: any device that perceives its environment and takes actions that maximize its chance of successfully achieving its goals. Colloquially, the term “artificial intelligence” is often used to describe machines (or computers) that mimic “cognitive” functions that humans associate with the human mind, such as “learning” and “problem-solving.”

The field was founded on the assumption that human intelligence “can be so precisely described that a machine can be made to simulate it.” This raises philosophical arguments about the mind and the ethics of creating artificial beings endowed with human-like intelligence. These issues have been explored by myth, fiction, and philosophy since antiquity. Some people also consider AI to be a danger to humanity if it progresses unabated. Others believe that AI, unlike previous technological revolutions, will create a risk of mass unemployment.

The traditional problems (or goals) of AI research include reasoning, knowledge representation, planning, learning, natural language processing, perception, and the ability to move and manipulate objects. General intelligence is among the field’s long-term goals. Approaches include statistical methods, computational intelligence, and traditional symbolic AI.

“Many tools are used in AI, including versions of search and mathematical optimization, artificial neural networks, and methods based on statistics, probability, and economics. The AI field draws upon computer science, information engineering, mathematics, psychology, linguistics, philosophy, and many other fields.” — Wikipedia
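To make the textbook definition quoted above a little more concrete, here is a minimal Python sketch of an “intelligent agent” in that narrow sense: something that perceives its environment and picks the action that best moves it toward its goal. It models a toy robot carpet cleaner. The `Room` class, the function names, and the one-dimensional room are all hypothetical, made up purely for illustration; no real product is claimed to work this way.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Room:
    """Hypothetical one-dimensional room; True means that cell is dirty."""
    dirty: list[bool]
    position: int = 0  # cell the cleaner currently occupies


def perceive(room: Room) -> tuple[int, list[bool]]:
    """What the agent senses: its own position and which cells are dirty."""
    return room.position, list(room.dirty)


def choose_action(position: int, dirty: list[bool]) -> str:
    """Pick the action that best serves the goal of leaving no dirt behind."""
    if not any(dirty):
        return "stop"  # goal reached
    if dirty[position]:
        return "clean"
    # Otherwise move toward the nearest dirty cell (ties go to the left).
    nearest = min(
        (i for i, is_dirty in enumerate(dirty) if is_dirty),
        key=lambda i: abs(i - position),
    )
    return "right" if nearest > position else "left"


def act(room: Room, action: str) -> None:
    """Apply the chosen action to the environment."""
    if action == "clean":
        room.dirty[room.position] = False
    elif action == "right":
        room.position += 1
    elif action == "left":
        room.position -= 1


def run(room: Room, max_steps: int = 100) -> list[str]:
    """Perceive, decide, act, and repeat until the agent decides to stop."""
    actions = []
    for _ in range(max_steps):
        action = choose_action(*perceive(room))
        actions.append(action)
        if action == "stop":
            break
        act(room, action)
    return actions


if __name__ == "__main__":
    room = Room(dirty=[False, True, False, True])
    print(run(room))
    # ['right', 'clean', 'right', 'right', 'clean', 'stop']
```

Everything this toy agent does follows from rules a person wrote down in advance. The fears discussed in this piece concern systems that go well beyond that.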

The great fear is that AI will develop the ability to build upon its programmed knowledge and start to learn and reason of its own accord, without any need for human input or interaction. This has already started to happen in some instances, at least at a rudimentary level. We can expect with near certainty that “rudimentary” will gradually evolve into “complex,” with consequences both foreseen and unforeseen.

The path that leads to a highly evolved AI that can exist without any need for humans presents the greatest danger to humanity in the long term. With that potential but increasingly likely outcome in mind, many people are now grappling with how to deal with such an eventuality, including ideas about ethics-based design, development, and introduction of AI technology to mitigate the risks to humans.

The ongoing debate about the future direction of AI is not only healthy but absolutely essential.

These discussions, and any decisions that result from them, will help determine what safeguards must be put in place so that mankind continues to benefit from AI without facing a serious risk that a horrific “Terminator effect” will be unleashed upon us.

NOTE:

“Artificial intelligence (AI) is intelligence demonstrated by machines, unlike the natural intelligence displayed by humans and animals, which involves consciousness and emotionality. The distinction between the former and the latter categories is often revealed by the acronym chosen. ‘Strong’ AI is usually labeled as AGI (Artificial General Intelligence) while attempts to emulate ‘natural’ intelligence have been called ABI (Artificial Biological Intelligence). Leading AI textbooks define the field as the study of “intelligent agents”: any device that perceives its environment and takes actions that maximize its chance of successfully achieving its goals. Colloquially, the term “artificial intelligence” is often used to describe machines (or computers) that mimic “cognitive” functions that humans associate with the human mind, such as “learning” and “problem-solving.”

As machines become increasingly capable, tasks considered to require “intelligence” are often removed from the definition of AI, a phenomenon known as the AI effect. A quip in Tesler’s Theorem says “AI is whatever hasn’t been done yet.” For instance, optical character recognition is frequently excluded from things considered to be AI, having become a routine technology. Modern machine capabilities generally classified as AI include successfully understanding human speech, competing at the highest level in strategic game systems (such as chess and Go), autonomously operating cars, intelligent routing in content delivery networks, and military simulations.

Artificial intelligence was founded as an academic discipline in 1955, and in the years since has experienced several waves of optimism, followed by disappointment and the loss of funding (known as an “AI winter”), followed by new approaches, success, and renewed funding. After AlphaGo successfully defeated a professional Go player in 2015, artificial intelligence once again attracted widespread global attention. For most of its history, AI research has been divided into subfields that often fail to communicate with each other. These sub-fields are based on technical considerations, such as particular goals (e.g. “robotics” or “machine learning”), the use of particular tools (“logic” or artificial neural networks), or deep philosophical differences. Sub-fields have also been based on social factors (particular institutions or the work of particular researchers).

In the twenty-first century, AI techniques have experienced a resurgence following concurrent advances in computer power, large amounts of data, and theoretical understanding; and AI techniques have become an essential part of the technology industry, helping to solve many challenging problems in computer science, software engineering, and operations research.”

__________________

Thanks for reading.

These are my most popular pieces, with many thousands of Reads: