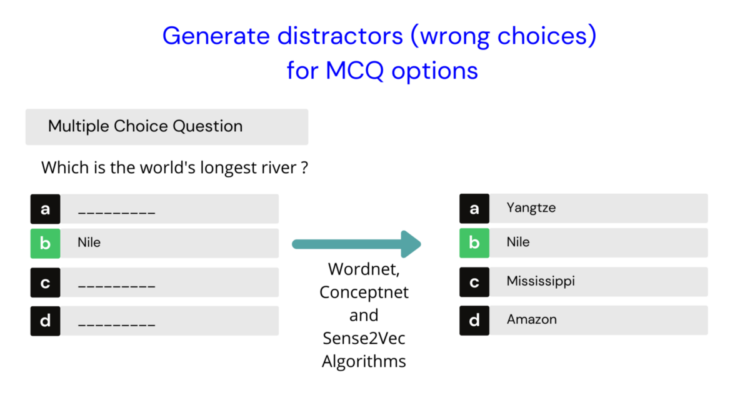

Using Wordnet, Conceptnet, and Sense2vec algorithms to generate distractors

Distractors are the wrong answers in a multiple-choice question.

For example, if a given multiple choice question has the game Cricket as the correct answer then we need to generate wrong choices (distractors) like Football, Golf, Ultimate Frisbee, etc.

Multiple-choice questions are the most popular assessment questions created whether it is for a school test or a graduate competitive exam.

Coming up with efficient distractors for a given question is a very time-consuming process for question authors/teachers. Given the increased volume of workload on teachers/assessment creators due to Covid, it would be very helpful if we can create an automated system to create these distractors.

In this article, we will see how we can use Natural Language processing techniques to solve this practical problem.

In particular, we will see how we can generate distractors using 3 different algorithms –

Distractors shouldn’t be too similar or too different. Distractors should be homogeneous in content. Eg: Pink, Blue, Watch, Green makes it obvious that Watch might be the answer.

Distractors should be mutually exclusive. So shouldn’t include synonyms.

Let’s get started.

- WordNet® is a large lexical database of English.

- Similar to thesaurus but captures even broader relationships between words.

- WordNet is also free and publicly available for download.

- WordNet labels the semantic relations among words. Eg: Synonyms — Car and Automobile

- WordNet also captures the different senses of a word. Eg: Mouse could mean an animal or computer mouse.

Generating distractors using Wordnet

If we have a sentence “ The bat flew into the jungle and landed on a tree” and a keyword “bat”, we automatically know that here we are talking about the mammal bat that has wings, not a cricket bat or baseball bat.

Although we humans are good at it, the algorithms are not very good at distinguishing one from the other. This is called word sense disambiguation (WSD).

In Wordnet “bat” may have several senses (meanings) one for a cricket bat, one for a flying mammal, etc. Word sense disambiguation using NLP is a separate topic in itself so for this tutorial, we assume that the exact “sense” is manually identified by the user or the top sense is chosen automatically.

Let’s say we get a word like “Red” and identify its sense, we then go to its hypernym using Wordnet. A hypernym is a higher level category for a given word. In our example, color is the hypernym for Red.

Then we go to find all hyponyms (sub-categories) of color which might be Purple, Blue, Green, etc all belonging to the color group. So we can use Purple, Blue, Green, as distractors (wrong answer choices) for the given MCQ which has Red as the correct answer. Purple, Blue, Green are also called as Co-Hyponyms of Red.

So using Wordnet, extracting co-hyponyms of a given word gets us the distractors of that word. So in the image below, the distractors of Red are Blue, Green, and Purple.

Wordnet Code

Let’s start by installing NLTK

!pip install nltk==3.5.0

Import wordnet

import nltk

nltk.download('wordnet')

from nltk.corpus import wordnet as wn

Get all distractors for the word “lion”. Here we are extracting the first sense of the word Lion and extracting co-hyponyms of the word lion as distractors.

# Distractors from Wordnet

def get_distractors_wordnet(syn,word):

distractors=[]

word= word.lower()

orig_word = word

if len(word.split())>0:

word = word.replace(" ","_")

hypernym = syn.hypernyms()

if len(hypernym) == 0:

return distractors

for item in hypernym[0].hyponyms():

name = item.lemmas()[0].name()

#print ("name ",name, " word",orig_word)

if name == orig_word:

continue

name = name.replace("_"," ")

name = " ".join(w.capitalize() for w in name.split())

if name is not None and name not in distractors:

distractors.append(name)

return distractorsoriginal_word = "lion"

synset_to_use = wn.synsets(original_word,'n')[0]

distractors_calculated = get_distractors_wordnet(synset_to_use,original_word)

The output from above :

original word: Lion

Distractors: ['Cheetah', 'Jaguar', 'Leopard', 'Liger', 'Saber-toothed Tiger', 'Snow Leopard', 'Tiger', 'Tiglon']

Similarly, for the word cricket, which has two senses (one for insect and one for the game) we get different distractors for each depending on which sense we use.

# An example of a word with two different senses

original_word = "cricket"syns = wn.synsets(original_word,'n')for syn in syns:

print (syn, ": ",syn.definition(),"n" )synset_to_use = wn.synsets(original_word,'n')[0]

distractors_calculated = get_distractors_wordnet(synset_to_use,original_word)print ("noriginal word: ",original_word.capitalize())

print (distractors_calculated)original_word = "cricket"

synset_to_use = wn.synsets(original_word,'n')[1]

distractors_calculated = get_distractors_wordnet(synset_to_use,original_word)print ("noriginal word: ",original_word.capitalize())

print (distractors_calculated)

Output:

Synset('cricket.n.01') : leaping insect; male makes chirping noises by rubbing the forewings togetherSynset('cricket.n.02') : a game played with a ball and bat by two teams of 11 players; teams take turns trying to score runsoriginal word: Cricket

distractors: ['Grasshopper']original word: Cricket

distractors: ['Ball Game', 'Field Hockey', 'Football', 'Hurling', 'Lacrosse', 'Polo', 'Pushball', 'Ultimate Frisbee']

- Conceptnet is a free multilingual knowledge graph.

- It includes knowledge from crowdsourced resources and expert-created resources.

- Similar to WordNet, Conceptnet labels the semantic relationships among words. They are more detailed than Wordnet. Eg: is a, part of, made of, similar to, is used for, etc relations among words is captured.

Generating distractors using Conceptnet

Conceptnet is good for generating distractors for things like locations, items, etc which have a “Partof” relationship. For example, a state like “California” could be part of the United States. A “kitchen” could be part of a house etc.

Conceptnet doesn’t have a provision to disambiguate between different word senses as we discussed above with the example of the bat. Hence we need to go ahead with whatever sense Conceptnet gives us when we query with a given word.

Let’s see how we can use Conceptnet in our use case. We don’t need to install anything because we can use the Conceptnet API directly. Note that there is an hourly API rate limit so beware of it.

Given a word like “California” we query Conceptnet with it and retrieve all the words that share “Partof” relationship with it. In our example, “California” is part of the “United States”.

Now we go to “United States” and see what other things does it share a “Partof” relationship with. That would be other states like “Texas”, “Arizona”, “Seattle’ etc.

Similar to Wordnet we extract co-hyponyms using “partof” relationship for our query word “California” and we fetch the distractors “Texas”, “Arizona” etc.

Code for Conceptnet

Output:

Original word: CaliforniaDistractors: ['Texas', 'Arizona', 'New Mexico', 'Nevada', 'Kansas', 'New England', 'Florida', 'Montana', 'Twin', 'Alabama', 'Yosemite', 'Connecticut', 'Mid-Atlantic states']

Unlike Wordnet and Conceptnet the relationships between words in Sense2vec are not human-curated but automatically generated from a text corpus.

A neural network algorithm is trained with millions of sentences to predict a focus word given other words or predict surrounding words given a focus word. Through this, we generate a fixed size vector or array representation for each word. We call these word vectors.

The interesting fact is that these vectors capture associations among different kinds of words. For example, words of a similar kind in the real world fall closer in the vector space.

Also, relationships among different words are preserved. If we take the word vector of King, subtract the vector of Man and add the vector of Woman, we get back the vector of Queen. The real-world relationship of “what king is to a man, a queen is the same to woman” is preserved.

King — Man + Woman = Queen

Sense2vec is trained on Reddit comments. Noun phrases and named entities are annotated during training so multiword phrases like “natural language processing” also have an entry as opposed to some word vector algorithms which are trained with only single words.

We will use 2015 trained Reddit vectors as opposed to 2019 as the results were slightly better in my experimentation.

Code for Sense2vec

Install sense2vec from pip

!pip install sense2vec==1.0.2

Download and unzip Sense2vec vectors

Load sense2vec vectors from the unzipped folder on disk

# load sense2vec vectors

from sense2vec import Sense2Vec

s2v = Sense2Vec().from_disk('s2v_old')

Get distractors for a given word. Eg: Natural Language processing

Output:

Distractors for Natural Language processing :

['Machine Learning', 'Computer Vision', 'Deep Learning', 'Data Analysis', 'Neural Nets', 'Relational Databases', 'Algorithms', 'Neural Networks', 'Data Processing', 'Image Recognition', 'Nlp', 'Big Data', 'Data Science', 'Big Data Analysis', 'Information Retrieval', 'Speech Recognition', 'Programming Languages']

Hope you enjoyed how we solved a real-world problem of generating distractors (wrong choices) in MCQs using NLP.

If you would like a detailed video of the above blog as well as know more about the “Question generation using NLP” course that I am launching soon, please watch this video.

If you would like to get updates on more practical AI projects feel free to follow me on Linkedin or Twitter.

Happy Learning!