As the title says, I am trying to learn something about the nature of primes using machine learning. To that end, I am trying to build a prime predictor: a classifier that, given a number, tells whether it is prime or not. I am inclined to think that the function I need to fit must be about as complex as an analytical form of the Riemann zeta function (not the one expressed as a series), since the famous unproven Riemann hypothesis, which is stated in terms of the zeta function, says something about the nature of primes. If this works, it might contribute to a proof of the Riemann hypothesis.

As a predictor I am using a plain fully connected pyramidal neural network (one where every layer has fewer nodes than the previous one). The training and test sets consist of integers from 0 to N, each labeled by whether it is prime or not.
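To make the labeling concrete, here is a minimal sketch of how the 0/1 labels for the integers 0 to N could be generated. It uses a sieve of Eratosthenes, which is my choice for illustration and not necessarily what the linked script does; the name `prime_labels` is hypothetical.

```python
def prime_labels(n):
    """Return a list of length n + 1 where entry k is 1 if k is prime, else 0.

    Uses a sieve of Eratosthenes (an assumption for this sketch).
    """
    labels = [1] * (n + 1)
    # 0 and 1 are not prime.
    for k in range(min(2, n + 1)):
        labels[k] = 0
    p = 2
    while p * p <= n:
        if labels[p]:
            # Mark every multiple of p, starting at p*p, as non-prime.
            for multiple in range(p * p, n + 1, p):
                labels[multiple] = 0
        p += 1
    return labels


if __name__ == "__main__":
    print(prime_labels(10))
```

The integers themselves (or whatever encoding of them is fed to the network) are the inputs, and this list is the target.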

In one version I used the numbers from 0 to N for training and from N to 2N for testing; in the other I used two sets of random integers between 0 and N.
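The two variants can be sketched roughly as follows. The exact boundaries are an assumption here: I treat the ranges as half-open, [0, N) and [N, 2N), and draw the random sets independently with replacement. The function names, the `size` parameter, and the `seed` parameter are hypothetical.

```python
import random


def range_split(n):
    """Train on [0, n), test on [n, 2n) (half-open ranges are an assumption)."""
    train = list(range(0, n))
    test = list(range(n, 2 * n))
    return train, test


def random_split(n, size, seed=0):
    """Draw two independent sets of `size` random integers from [0, n).

    Sampling is with replacement, so the two sets may share numbers.
    """
    rng = random.Random(seed)
    train = [rng.randrange(0, n) for _ in range(size)]
    test = [rng.randrange(0, n) for _ in range(size)]
    return train, test


if __name__ == "__main__":
    print(range_split(5))
    print(random_split(100, 5))
```

Note that the first variant tests on numbers larger than anything seen in training, while the second tests on the same range (and possibly on some of the same numbers).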

To improve prediction I varied the number of nodes in the first layer. This also changes the other layers, since the number of nodes in the second and later layers is derived from the first by halving the number of nodes in every next layer.

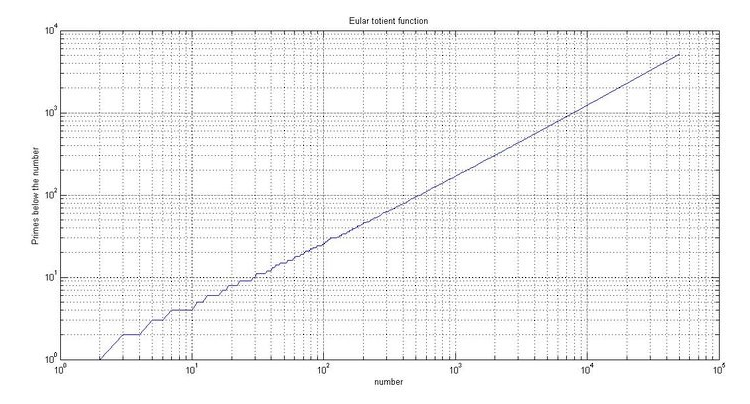
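The halving scheme for the layer widths can be written out as a small helper. This is only a sketch: where the halving stops is an assumption (here, once the width would drop below `min_nodes`), odd widths are rounded down, and the names `pyramid_widths` and `min_nodes` are hypothetical.

```python
def pyramid_widths(first_layer_nodes, min_nodes=2):
    """Return hidden-layer widths obtained by repeatedly halving the first width.

    Halving uses integer division and stops once the width would fall
    below `min_nodes` (the stopping rule is an assumption).
    """
    widths = []
    width = first_layer_nodes
    while width >= min_nodes:
        widths.append(width)
        width //= 2
    return widths


if __name__ == "__main__":
    for first in (16, 64, 100):
        print(first, pyramid_widths(first))
```

Each width would correspond to one fully connected layer, followed by a single output unit for the prime/non-prime decision.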

I also used different class weights, since primes are the minority class. As the number of primes below a given N is approximately N/ln(N), I used N - N/ln(N) as the weight for the prime class and N/ln(N) for the non-prime class (the weights need to be inversely proportional to the class frequencies).
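As a worked example of the weights described above, the following sketch computes them for a given N. The function name `class_weights` is hypothetical, and I assume label 1 means prime and label 0 means non-prime.

```python
import math


def class_weights(n):
    """Weights from the prime-counting estimate n / ln(n).

    Label 1 (prime) gets n - n/ln(n); label 0 (non-prime) gets n/ln(n),
    so the rarer class receives the larger weight.
    """
    approx_primes = n / math.log(n)
    return {0: approx_primes, 1: n - approx_primes}


if __name__ == "__main__":
    weights = class_weights(1000)
    print(weights)
    print("prime / non-prime weight ratio:", weights[1] / weights[0])
```

A dictionary keyed by class index like this is the form that the `class_weight` argument of Keras `Model.fit` accepts.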

For all other configuration parameters of the learning procedure I used the defaults that Keras from the current TensorFlow provides (SGD, binary cross-entropy loss, etc.).

Every time, the trained predictor ended up saying either that all numbers in the test set are prime or that none are. Darn it. Any suggestion for improving the training procedure is welcome.

You can find the code for this on my github (https://github.com/lsamec/myProjects/blob/master/primesML.py).