This post aims to present the bias-variance trade-off through a practical example in Python.

The bias-variance trade-off refers to the balance between two competing properties of machine learning models.

The goal of supervised machine learning problems is to find the mathematical representation (f) that explains the relationship between input predictors (x) and an observed outcome (y):

Where Ɛ indicates noise in the data.

As an example, we create our synthetic x and y by choosing a sine wave as relationship between the two:

where { x ∈ R ∣ 0 < x < 2 }

We also assume a normally distributed noise, with mean=0 and variance=1.

In a real-world scenario, we would not know the relationship between predictors and outcome.

Given a dataset, our task is to find suitable candidate models and choose the one that better fits our information.

To this aim, we split our data in, at least, two distinct sets:

- Train set: the portion of data to fit the model.

- Test set: the portion of data to evaluate the model performances.

As the test set contains data not seen before by the model, assessing the performances on that set provides a better estimate of how the model would perform on real-world, unseen data.

We split our original data into train (80%) and test (20%) set as follows:

Now we want to train some models on the train set and assess their performances on the test set.

In particular, we want to minimize the error that the model makes when predicting the outcome from the predictors; such error is typically a measure of distance between the predicted outcome (y_predicted) and the observed outcome (y_observed), for all our observations (n).

In this case, we can use the Mean Square Error (MSE), defined as follows:

Moreover, we could prove that this error can be decomposed into the sum of three quantities:

Any model, however good, is an approximation of complex relationships between data, therefore the so called irreducible error is a component that cannot be avoided.

From the formula, it seems that a good model should minimize both bias and variance: let us define those terms.

Bias takes into account the difference between the model prediction and the real outcome.

It depends mainly on the model we choose to solve our problem, and how the model assumptions can suitably interpret the relationship between predictors and outcome.

By observing our train set plot, we notice a non-linear trend. If we chose to model the relationship between x and y with a linear regression, the model assumptions would clearly fail to explain our f, which we know to be non-linear. In this case, we would obtain a model with high bias.

We can empirically assess that a model suffers from high bias because it shows a high error on the train set. Or, in other words, a low training performance: this condition goes under the name of underfitting.

High bias:

– Model assumptions fail to explain the relationship between predictors and outcome.

– Involves “simpler” (less flexible) models, such as linear regression.

– Leads to underfitting (poor train set performances).

Variance measures how different choices of the train sets would affect the the model parameters.

When we train a machine learning model, we want that, by selecting a slightly different train set, the function would not change significantly. Otherwise, we would have obtained a model unable to generalize in front of small variations in the input data: this goes under the name of overfitting.

Intuitively, models with fewer assumptions do not suffer from high variance, as they do not change in front of small variations in the train set. Therefore, high variance is a problem that affects more flexible models, such as decision trees.

As the model is complex enough to overfit on a train set, we would expect high train performances (or low training error), but also poor test performances, as the model would require significant changes in the parameters to explain small variations in the data.

High variance:

– Involves more “complex” models (more flexible), such as decision trees.

– Leads to overfitting (poor test set performances).

The concept is summarized by this image:

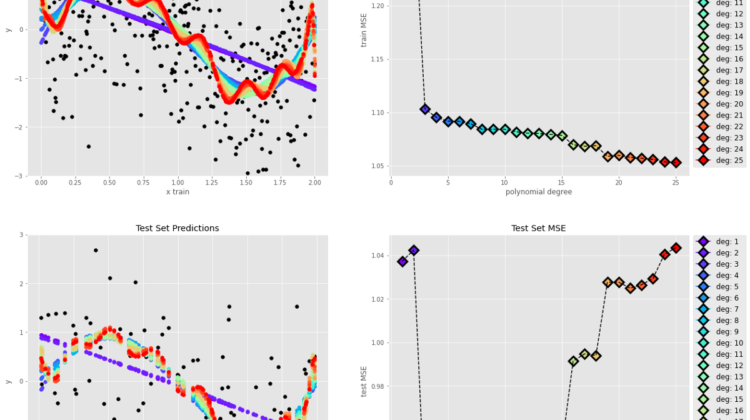

In order to illustrate these concepts in our example, we fit multiple polynomial models with increasing polynomial degree on the train set, and then observe the trend of the MSE over the increasing model complexity:

If we look at the MSE plots over the polynomial degree (model complexity) from left to right, the increasing complexity of the models leads to a decrease in the train error (lower bias), but also a significant increase in the test error (high variance).

On the other hand, we also notice that the decreasing complexity of the models results in a poor train set performance (high bias) as well as better generalization on the test set (low variance).

The best fit is represented by the polynomial degree that minimizes the test error:

In conclusion, the best fit shows two characteristics: it fits well the train set (low train set error) and it generalizes well on unseen data (low test set error), as it minimizes both variance and bias. In our example, models with higher error presented either high variance or high bias. Hence, the name bias-variance trade-off.