pyradox is a Python library that helps you implement various state-of-the-art neural networks in a fully customizable fashion using TensorFlow 2.

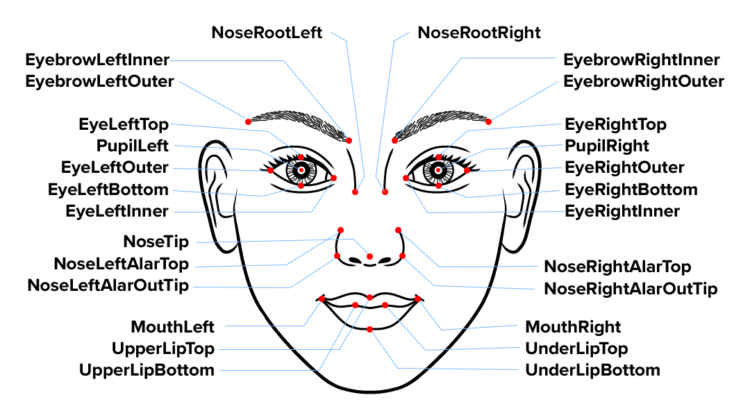

Facial keypoints, also called facial landmarks, are used to localize and represent salient regions of the face, such as the eyes, eyebrows, nose, mouth and jawline. Facial landmarks have been successfully applied to face alignment, head pose estimation, face swapping, blink detection and much more.

Given a set of manually labelled face images that specify the (x, y)-coordinates of the regions surrounding each facial structure, regression models are trained to estimate the facial landmark positions directly from the pixel intensities.

The end result is a facial landmark detector that can produce high-quality landmark predictions in real time.

Detecting facial keypoints is a very challenging problem. Facial features vary greatly from one individual to another, and even for a single individual, there is a large amount of variation due to 3D pose, size, position, viewing angle, and illumination conditions.

For this problem we will use a convolutional neural network (CNN), a class of deep neural networks most commonly applied to analysing visual imagery; specifically, the MobileNetV3 architecture.

For this task we will use data from the ‘Facial Keypoints Detection’ Kaggle competition.

The data set for this competition was graciously provided by Dr. Yoshua Bengio of the University of Montreal. James Petterson.

This article focuses mainly on building the convolutional neural network model; please refer to my Kaggle notebook for the complete, detailed approach to processing the data.
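For readers who want a rough idea of what that preprocessing involves, here is a minimal NumPy sketch. It is not the notebook's code: it assumes each image arrives as a string of 96 × 96 = 9216 space-separated pixel values (the layout of the competition's CSV) and that samples with any missing keypoint are simply dropped. The helpers `parse_images` and `drop_incomplete` are hypothetical names used only for illustration.

```python
import numpy as np

IMG_SIZE = 96  # images in this dataset are 96x96 grayscale


def parse_images(pixel_strings):
    """Hypothetical helper: space-separated pixel strings -> float array (N, 96, 96, 1).

    Assumes each string holds 96*96 integer intensities in [0, 255].
    """
    images = np.stack([np.array(s.split(), dtype=np.float32) for s in pixel_strings])
    images = images.reshape(-1, IMG_SIZE, IMG_SIZE, 1)
    return images / 255.0  # scale pixel intensities to [0, 1]


def drop_incomplete(images, keypoints):
    """Hypothetical helper: keep only samples whose 30 coordinates are all present."""
    keypoints = np.asarray(keypoints, dtype=np.float32)
    mask = ~np.isnan(keypoints).any(axis=1)  # True where no coordinate is NaN
    return images[mask], keypoints[mask]


# Two dummy images; the second one is missing a keypoint coordinate.
raw = [" ".join(["128"] * IMG_SIZE * IMG_SIZE), " ".join(["0"] * IMG_SIZE * IMG_SIZE)]
kps = np.full((2, 30), 48.0)
kps[1, 0] = np.nan
X, y = drop_incomplete(parse_images(raw), kps)
print(X.shape, y.shape)  # (1, 96, 96, 1) (1, 30)
```

Dropping incomplete rows is the simplest choice; filling in missing coordinates is an alternative this sketch does not attempt.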

To build the model we will need TensorFlow and pyradox, so first let's install pyradox:

```
!pip install git+https://github.com/Ritvik19/pyradox.git
```

After installing pyradox, let's proceed with the imports:

```python
from tensorflow.keras import Input, layers, callbacks
from tensorflow.keras.models import Model

from pyradox import convnets
```

Let's start building the model. We will use MobileNetV3 (small) for our task:

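```python
# Grayscale 96x96 input images
inputs = Input(shape=(96, 96, 1))

# 1x1 convolution with 3 filters: maps the single grayscale channel to 3 channels
x = layers.Convolution2D(3, (1, 1), padding='same')(inputs)
x = layers.LeakyReLU(alpha=0.1)(x)

# MobileNetV3 (small) backbone from pyradox
x = convnets.MobileNetV3(config='small')(x)

# Collapse the spatial feature maps into one feature vector, then regularize
x = layers.GlobalAveragePooling2D()(x)
x = layers.Dropout(0.1)(x)

# 30 linear outputs: (x, y) coordinates for each of the 15 facial keypoints
outputs = layers.Dense(30)(x)

model = Model(inputs=inputs, outputs=outputs)
```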

Since the images in our dataset are grayscale, the input shape is 96×96×1. However, we have to convert each image into a 3-channel image before feeding it to MobileNet. For this we use a convolution layer with 3 filters and a 1×1 kernel, activated through a Leaky ReLU (alpha = 0.1).
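To see why a 1×1 convolution is enough for this, note that it applies the same small linear map at every pixel: output channel c is `w_c * pixel + b_c`, so the spatial layout is untouched and only the channels are remixed. The NumPy sketch below reproduces that computation followed by Leaky ReLU with slope 0.1. The weight and bias values are made up for illustration; in the real model they are learned.

```python
import numpy as np


def conv1x1(images, weights, bias):
    """1x1 convolution: images (N, H, W, C_in), weights (C_in, C_out), bias (C_out,).

    Every pixel's channel vector is multiplied by the same weight matrix.
    """
    return images @ weights + bias


def leaky_relu(x, alpha=0.1):
    """Leaky ReLU: identity for x >= 0, alpha * x for x < 0."""
    return np.where(x >= 0, x, alpha * x)


# Illustrative (not learned) parameters mapping 1 grayscale channel to 3 channels.
w = np.array([[1.0, -1.0, 0.5]])  # shape (1, 3)
b = np.array([0.0, 0.2, 0.0])     # shape (3,)

gray = np.full((1, 96, 96, 1), 0.5)  # dummy batch: one uniform mid-grey image
out = leaky_relu(conv1x1(gray, w, b))
print(out.shape)     # (1, 96, 96, 3)
print(out[0, 0, 0])  # approximately [0.5, -0.03, 0.25] for every pixel
```

The negative pre-activation in the second channel (-0.3) is scaled to -0.03 rather than zeroed, which is the point of using Leaky ReLU instead of plain ReLU here.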

Once we have a 3-channel image, we feed it to MobileNet. To obtain the final result, i.e. the locations of the facial keypoints, we apply global average pooling followed by dropout to the output of MobileNet, and then feed it to the final output layer: a Dense layer with 30 units, one for each coordinate of the 15 keypoints.
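The shapes in this head can be checked with a short NumPy sketch. Global average pooling replaces each feature map by its mean, so a tensor of shape (N, H, W, C) becomes (N, C); the Dense layer then emits 30 numbers per image. Assuming the 30 targets are stored as interleaved x, y pairs (x1, y1, x2, y2, ...), as in the competition's CSV columns, they can be reshaped into 15 (x, y) points. The feature-map sizes below are dummy values, not MobileNetV3's actual output shape.

```python
import numpy as np


def global_average_pool(features):
    """(N, H, W, C) -> (N, C): average every channel over all spatial positions."""
    return features.mean(axis=(1, 2))


def to_keypoints(predictions):
    """(N, 30) -> (N, 15, 2), assuming targets are ordered x1, y1, x2, y2, ..."""
    return np.asarray(predictions).reshape(-1, 15, 2)


rng = np.random.default_rng(0)
features = rng.normal(size=(2, 3, 3, 8))  # dummy backbone output
print(global_average_pool(features).shape)  # (2, 8)

preds = np.arange(60, dtype=float).reshape(2, 30)  # dummy model output
kps = to_keypoints(preds)
print(kps.shape)  # (2, 15, 2)
print(kps[0, 1])  # [2. 3.] -> (x, y) of the second keypoint of the first image
```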

Before feeding batches of data to our model, let’s first set up some callbacks.

We’ll use early stopping to prevent overfitting:

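```python
# Stop once the validation loss has not improved for 30 epochs and roll back
# to the best weights seen; monitoring val_loss (rather than the training loss)
# is what lets early stopping detect overfitting
es = callbacks.EarlyStopping(
    monitor='val_loss', patience=30, verbose=1, mode='min', baseline=None, restore_best_weights=True
)
```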
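The patience mechanism can be summarised in a few lines of plain Python. This is a simplified sketch of the idea, not Keras's actual implementation: it tracks the best loss seen so far and stops once `patience` consecutive epochs pass without improvement, at which point `restore_best_weights=True` corresponds to going back to the best epoch. The function name `early_stopping_epoch` is hypothetical.

```python
def early_stopping_epoch(losses, patience):
    """Return (stop_epoch, best_epoch) for per-epoch losses, in mode='min'.

    stop_epoch is the 0-based epoch after which training halts, or None if
    patience is never exhausted; best_epoch is the epoch whose weights would
    be restored.
    """
    best_loss = float("inf")
    best_epoch = None
    wait = 0
    for epoch, loss in enumerate(losses):
        if loss < best_loss:  # improvement: remember it and reset the counter
            best_loss, best_epoch, wait = loss, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                return epoch, best_epoch
    return None, best_epoch


# The loss stops improving after epoch 2; with patience=3 training halts at epoch 5,
# even though epoch 6 would have been better.
history = [1.0, 0.6, 0.5, 0.55, 0.52, 0.51, 0.4]
print(early_stopping_epoch(history, patience=3))  # (5, 2)
```

The example also shows the trade-off in choosing `patience`: a small value can stop training just before a late improvement, which is one reason to use a generous value such as 30.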

We’ll also use ReduceLROnPlateau to cut the learning rate whenever the validation loss stops improving, so that smaller steps let the optimizer keep making progress once learning stagnates:

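```python
# Halve the learning rate whenever val_loss has not improved for 5 epochs,
# never going below min_lr
rlp = callbacks.ReduceLROnPlateau(
    monitor='val_loss', factor=0.5, patience=5, min_lr=1e-15, mode='min', verbose=1
)
```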
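To get a feel for these settings: with `factor=0.5` each reduction halves the learning rate, and `min_lr` is a floor it never drops below. The sketch below simulates only that arithmetic (it ignores the patience bookkeeping) and assumes the starting learning rate is Adam's Keras default of 0.001, since the model is compiled with `optimizer='adam'` and no explicit rate.

```python
def lr_after_reductions(initial_lr, factor, min_lr, n_reductions):
    """Learning rate after n plateau-triggered reductions, clipped at min_lr."""
    lr = initial_lr
    for _ in range(n_reductions):
        lr = max(lr * factor, min_lr)
    return lr


def reductions_until_floor(initial_lr, factor, min_lr):
    """Number of reductions needed before the learning rate reaches min_lr."""
    count, lr = 0, initial_lr
    while lr > min_lr:
        lr = max(lr * factor, min_lr)
        count += 1
    return count


print(lr_after_reductions(1e-3, 0.5, 1e-15, 3))  # 0.000125
print(reductions_until_floor(1e-3, 0.5, 1e-15))  # 40
```

Because each reduction requires at least 5 epochs without improvement, reaching the floor would take at least 40 × 5 = 200 epochs, more than the 150 used for training, so `min_lr=1e-15` effectively never comes into play here.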

Now we’ll compile the model and start training:

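```python
model.compile(
    optimizer='adam',
    loss='mean_squared_error',
    # accuracy is not meaningful for continuous coordinate targets, so only MAE is tracked
    metrics=['mae'],
)

# train_images / train_keypoints: the preprocessed arrays from the Kaggle notebook
history = model.fit(
    train_images, train_keypoints, epochs=150, batch_size=64,
    validation_split=0.05, callbacks=[es, rlp]
)
```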
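For reference, the loss and metric used here are easy to compute by hand. Mean squared error averages the squared coordinate errors, while mean absolute error averages their absolute values; if the targets are raw pixel coordinates, MAE therefore reads directly in pixels. The NumPy sketch below computes both over a batch, averaging across all 30 outputs. It is an illustration of the formulas, not Keras's own code.

```python
import numpy as np


def mse(y_true, y_pred):
    """Mean squared error over all samples and all 30 coordinates."""
    return float(np.mean((np.asarray(y_true) - np.asarray(y_pred)) ** 2))


def mae(y_true, y_pred):
    """Mean absolute error over all samples and all 30 coordinates."""
    return float(np.mean(np.abs(np.asarray(y_true) - np.asarray(y_pred))))


y_true = np.full((2, 30), 48.0)  # dummy targets: every keypoint at (48, 48)
y_pred = y_true.copy()
y_pred[:, ::2] += 2.0            # every x-coordinate predicted 2 px too far right
print(mse(y_true, y_pred))  # 2.0
print(mae(y_true, y_pred))  # 1.0
```

Squaring makes MSE penalize large misses much more heavily than MAE, which is why it is a common training loss while MAE is the more readable metric to report.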

Our model worked well for this task!

For more information, check these out:

Kaggle Notebook: https://www.kaggle.com/ritvik1909/facial-keypoint-detection-pyradox

Repository: https://github.com/Ritvik19/pyradox