A conceptual guide

Gaussian processes (GPs) are a flexible class of nonparametric machine learning models commonly used for modeling spatial and time series data. A common application of GPs is regression. For example, given incomplete geographical weather data, such as temperature or humidity, how can we recover values at unobserved locations? If we have good reason to believe the data is normally distributed, then a using a GP model could be a judicious choice. In what follows, we introduce the mechanics behind the GP model and then illustrate its use in recovering missing data.

Formally speaking, a GP is a stochastic process, or a distribution over functions. The premise is that the function values are themselves random variables. When modeling a function as a Gaussian process, we are making the assumption that any finite number of sampled points form a multivariate normal distribution.

Why is this assumption useful? It turns out that Gaussian distributions are nice to work with because of their tractability. Indeed, the Gaussian family is self-conjugate and enjoys a number of properties such as being closed under marginalization and closed on conditioning. As such, GPs naturally jibe with Bayesian machine learning, which usually involves specification of priors — or asserting one’s prior belief over hyperparameters — and marginalizing over parameters to obtain posterior predictive distributions.

Recall that the multivariate normal distribution is characterized by a mean vector and covariance matrix. Learning these quantities is central to making predictions using the GP model.

If we are interested in making predictions at test points based on observed training points, then we assume the following ansatz.

This reflects the previous assumption, but here we discern between train and test points, with the latter indicated by an asterisk. We also assume training labels are corrupted by Gaussian random noise, hence the modification of the top left block in the kernel matrix above. Using the properties of the normal distribution, we may compute the conditional distribution of the test data:

(See the GPML book for a complete derivation.) These equations allow us to obtain the predictive mean and covariances at the testing points, conditioned on the training data.

To employ the equations above, one must be able to model the covariances and cross-covariances between all combinations of train and test data. Roughly speaking, the covariance matrix encodes how the train and test values correlate with one another. To be able to explicitly form the kernel matrices, we must assume a certain kernel function for modeling the covariances. It is said that the kernel is at the heart of the GP model, and for good reason, because choosing the right kernel function is critical for achieving good modeling results.

The most commonly used kernel function is the radial basis function (RBF), which arises from taking the exponent of the scaled squared Euclidean distance between the data locations.

We form the kernel matrix between observed data, for example, by applying the kernel function to each pair of data locations (recall that each data point is associated with a location-value pair).

Note that the kernel function (and hence matrix) contained undetermined hyperparameters. In practice, one tunes these parameters by using MLE by optimizing the likelihood function, or probability of hyperparameters given the observed data. More specifically, we minimize the negative log likelihood (NLL) of the hyperparameters given the training labels:

Once the hyperparameters are optimized to one’s satisfaction, the kernel matrix can be plugged into the predictive equations for the mean and covariance of the test data to obtain concrete results.

The premise of GP regression is that we are modeling a function that is accessible at a finite number of points, yet we are interested in its values at unobserved locations. Rainfall is measured at geographically spaced out weather stations, ocean salinity is measured along certain trajectories, and the density of a certain species of trees in a forest is likewise sampled at fixed locations. At the core of GP modeling is finding a suitable kernel function for modeling the similarities between such data points distributed in space. Once the kernel function parameters are optimized using a training set, one can make predictions on the test set using the predictive equations.

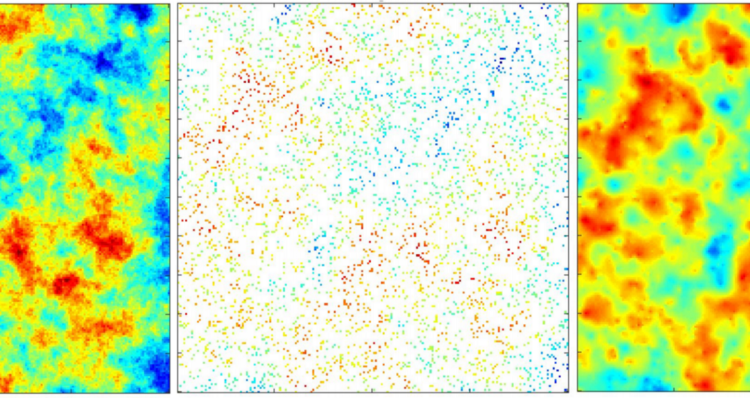

To illustrate this procedure, we generate a synthetic Gaussian random field, perform a train-test split, and use the GP model to make a prediction at unobserved locations.

Many software packages exist for GP regression! Implementations are available in popular languages such as Python, PyTorch, Matlab, and Julia.

A GP model is a nonparametric model which is flexible enough to fit many kinds of data, including geospatial and time series data. At the core of GP regression is the specification of a suitable kernel function, or measure of similarity between data points whose locations are known — this constitutes the model selection (MO) step. The tractability of the Gaussian distribution means that we can find closed-form expressions for the posterior predictive distribution conditioned on training data. Though well-established, GPs are an area of ongoing research: recent developments span multi-output GPs, variational inference for GP regression, and scalable GPs.