In industrial production, a common machine learning problem is how to train a fault detection and classification model on samples whose labels are noisy.

First, what is a noisy sample label? It means that the labels used to train the model are not completely accurate: some samples carry the wrong label.
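To make the definition concrete, here is a minimal Python sketch that measures how noisy a label set is when the true labels happen to be known. The function name `label_noise_rate` and the toy data are assumptions made up for illustration; they are not taken from the project.

```python
def label_noise_rate(true_labels, observed_labels):
    """Return the fraction of samples whose observed label differs from the true one."""
    if len(true_labels) != len(observed_labels):
        raise ValueError("label lists must have the same length")
    if not true_labels:
        raise ValueError("label lists must not be empty")
    mismatches = sum(t != o for t, o in zip(true_labels, observed_labels))
    return mismatches / len(true_labels)


# Toy data, made up for illustration: 1 = damaged, 0 = normal.
true_labels = [1, 1, 0, 0, 0, 1, 0, 0]
observed_labels = [1, 0, 0, 0, 1, 1, 0, 0]  # two samples were labeled incorrectly

noise_rate = label_noise_rate(true_labels, observed_labels)
print(noise_rate)
```

In practice the true labels are usually unknown, which is exactly why training on such data is difficult.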

To make this easier to understand, we take a project that we have successfully put into practice as an example and explain how it was done.

Large-scale industrial equipment makes wide use of a certain type of electronic switch. These switches gradually age and fail after long use, which affects the operation of the equipment, so they need to be replaced.

The current common practice in the industry is to take a series of manual measurements, judge from experience whether an electronic switch is damaged, and replace the damaged ones. The measurement data serves only as a reference. There is no clear standard for damage, so the decision still rests on experience.

People differ, and each person's experience is different, so the same switch may be judged differently by different people.

A normal electronic switch may be judged as damaged or about to fail, and replacing it is wasteful.

Conversely, a switch that is damaged or about to fail may be judged as normal and left in service, which causes even greater losses.

Therefore, if we can use machine learning to avoid the errors of human judgment and improve accuracy, we can bring great value to our customers.

So we began to consider how to achieve this with machine learning.

For machine learning, the difficulty with this type of problem is that the labels of the sample data are noisy, that is, not completely accurate.

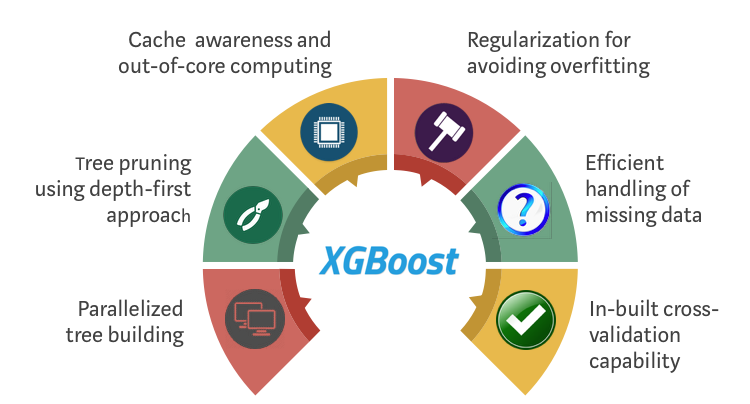

If we train directly on these samples, then no matter which classification algorithm we use (decision tree, logistic regression, or XGBoost, which has become popular in recent years), the trained model will simply be a "model" that fits the noisy samples well.

If we use such a model for classification, its accuracy can hardly surpass that of an experienced veteran, so the model has little value.

So based on Z-Suite, what can we do?

Readers who are familiar with Z-Suite know that common algorithm models (classification, clustering, decision tree, neural network, correlation model, time series prediction, etc.) are built into our products. Typical data mining algorithms can be assembled quickly in a graphical interface with simple drag-and-drop operations.

In addition, it also has built-in R language support, providing powerful model extension capabilities.

We used this capability to implement an extended algorithm in R that solves the problem of classification with noisy sample labels.

The algorithm we implemented is based on the idea from an MIT paper (link: https://arxiv.org/abs/1705.01936), combined with the XGBoost classification algorithm.

The idea of this algorithm is as follows:

First, the sample data for training comes from historical measurement data, and the labels were assigned by people based on experience, so some labels may be wrong. In other words, the model is trained on noisy sample data.

Next, a conventional classification algorithm such as Logistic Regression, Bayes, SVM or XGBoost is used to train a classifier on the noisy sample data set.

The classifier outputs a probability between 0 and 1 for each sample. The higher the probability, the more reliable a positive prediction is; the lower the probability, the more reliable a negative prediction is. When the probability is close to 0.5, the predicted label is unreliable and the sample may be noisy.
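This reading of the classifier output can be written as a small scoring function. The sketch below is only one possible formulation: the name `confidence` and the linear rescaling to the 0 to 1 range are assumptions for illustration, not something the original project specifies.

```python
def confidence(prob_positive):
    """Map the predicted probability of the positive class to a 0..1 confidence.

    0.5 (undecided) maps to 0.0; 0.0 and 1.0 (fully decided) map to 1.0.
    """
    if not 0.0 <= prob_positive <= 1.0:
        raise ValueError("probability must be in [0, 1]")
    return abs(prob_positive - 0.5) * 2.0


def predicted_label(prob_positive, threshold=0.5):
    """Return 1 (positive) when the probability reaches the threshold, else 0."""
    return 1 if prob_positive >= threshold else 0


for p in (0.97, 0.52, 0.08):
    print(p, predicted_label(p), round(confidence(p), 2))
```

A sample scored 0.52 is predicted positive, but with a confidence close to zero, so it is a candidate for being treated as noisy.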

Assuming that noisy data makes up only a small proportion of the data set, we can remove the low-confidence data and train the classification model on the remaining, relatively reliable data. In theory, this improves the accuracy of the classifier.
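The filtering step can be sketched in a few lines of Python. This is a simplified illustration: the function name `prune_least_confident` and the `prune_fraction` parameter are hypothetical, and the fraction to drop is something that would have to be chosen or estimated for a real data set.

```python
def prune_least_confident(samples, probs, prune_fraction=0.2):
    """Drop the given fraction of samples whose probability is closest to 0.5."""
    if len(samples) != len(probs):
        raise ValueError("samples and probs must have the same length")
    if not 0.0 <= prune_fraction < 1.0:
        raise ValueError("prune_fraction must be in [0, 1)")
    n_drop = round(len(samples) * prune_fraction)
    # Indices ordered from least confident (closest to 0.5) to most confident.
    order = sorted(range(len(samples)), key=lambda i: abs(probs[i] - 0.5))
    dropped = set(order[:n_drop])
    # Keep the surviving samples in their original order.
    return [s for i, s in enumerate(samples) if i not in dropped]


# Toy data, made up for illustration.
samples = ["a", "b", "c", "d", "e"]
probs = [0.95, 0.52, 0.10, 0.45, 0.80]
kept = prune_least_confident(samples, probs, prune_fraction=0.4)
print(kept)
```

Here samples "b" and "d", whose probabilities are nearest to 0.5, are the two that get removed.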

By excluding unreliable (inconsistent) data from training, the algorithm improves the quality of the remaining data. Training on that data improves the accuracy of the model and effectively addresses the problem of inaccurate models caused by noisy training samples.
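The whole train, prune and retrain loop might look like the following Python sketch. It is not the project's implementation, which was written in R on top of XGBoost. Several things here are assumptions for illustration: a tiny one-feature logistic regression stands in for the classifier, the data is synthetic, and "inconsistent" is interpreted as "the given label gets a low probability from the first model".

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def train_logistic(xs, ys, epochs=500, lr=0.5):
    """Fit p(y=1|x) = sigmoid(w*x + b) by batch gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            err = sigmoid(w * x + b) - y
            grad_w += err * x
            grad_b += err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b


def prune_and_retrain(xs, ys, prune_fraction=0.1):
    """Train, drop the samples whose label least agrees with the model, retrain."""
    w, b = train_logistic(xs, ys)
    # Agreement = probability the first model assigns to the given label.
    agreement = []
    for x, y in zip(xs, ys):
        p = sigmoid(w * x + b)
        agreement.append(p if y == 1 else 1.0 - p)
    n_keep = len(xs) - round(len(xs) * prune_fraction)
    ranked = sorted(range(len(xs)), key=lambda i: agreement[i], reverse=True)
    keep = sorted(ranked[:n_keep])
    model = train_logistic([xs[i] for i in keep], [ys[i] for i in keep])
    return model, keep


# Synthetic data, made up for illustration: the true label is 1 when x > 0.
rng = random.Random(0)
xs = [rng.uniform(-3.0, 3.0) for _ in range(200)]
true_ys = [1 if x > 0 else 0 for x in xs]
# Flip roughly 15% of the labels to imitate misjudged samples.
noisy_ys = [1 - y if rng.random() < 0.15 else y for y in true_ys]

(w, b), kept = prune_and_retrain(xs, noisy_ys, prune_fraction=0.15)
flipped = {i for i in range(len(xs)) if noisy_ys[i] != true_ys[i]}
removed = set(range(len(xs))) - set(kept)
print("flipped:", len(flipped), "removed:", len(removed),
      "removed and flipped:", len(flipped & removed))
```

The printed counts show how many of the removed samples really had flipped labels, which is only possible here because the synthetic data comes with known true labels.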

We applied the model to the electronic switch testing project described above and compared its predictions with the results of a second test of the switches (an in-depth test of the replaced switches, which can essentially be regarded as the true label). Prediction accuracy rose from the 60%~70% of manual judgment to about 80%, an overall improvement of nearly 20%. This brought very clear value to our customers, who appreciated the algorithm.

On this basis, we combined three models, Logistic Regression, Bayes and XGBoost, as the base model of the RankPruning algorithm. The test results show that accuracy improved by nearly a further 2% compared with using XGBoost alone.
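The text does not say how the three models were combined. One common option, shown here purely as an assumption, is to average their predicted probabilities. In this Python sketch the three functions are hypothetical stand-ins for trained models, not real Logistic Regression, Bayes or XGBoost classifiers.

```python
def average_probabilities(models, x):
    """Average the positive-class probabilities returned by several models."""
    if not models:
        raise ValueError("at least one model is required")
    return sum(model(x) for model in models) / len(models)


# Hypothetical stand-ins for three trained models. Each maps a measurement
# to a probability that the switch is damaged.
def model_a(x):
    return 0.9 if x > 1.0 else 0.2


def model_b(x):
    return 0.7 if x > 1.2 else 0.3


def model_c(x):
    return 0.8 if x > 0.8 else 0.1


ensemble = [model_a, model_b, model_c]
p_high = average_probabilities(ensemble, 2.0)  # all three models agree
p_mid = average_probabilities(ensemble, 1.1)   # model_b disagrees
print(round(p_high, 3), round(p_mid, 3))
```

When the models disagree, the averaged probability moves toward 0.5, so the combined score also carries information about how reliable a label is.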

Machine learning may not be new in today's Internet environment, but for traditional industries and manufacturing, a technology that greatly improves efficiency and saves costs brings real value.

We also feel that machine learning is truly applied not through concepts or ideas but through practice. In the process of putting machine learning to work and finding ways to create value for customers, our own understanding of machine learning is gradually deepening.

We share this experience to offer a more scientific and accurate method for fault detection and classification with noisy sample labels, and to provide a reference case for the value of applying machine learning in the field of big data.