Hello everyone! Today, I want to share what I built over the weekend for the New Year New Hack hackathon! It was an amazing experience and a fun way to start the new year.

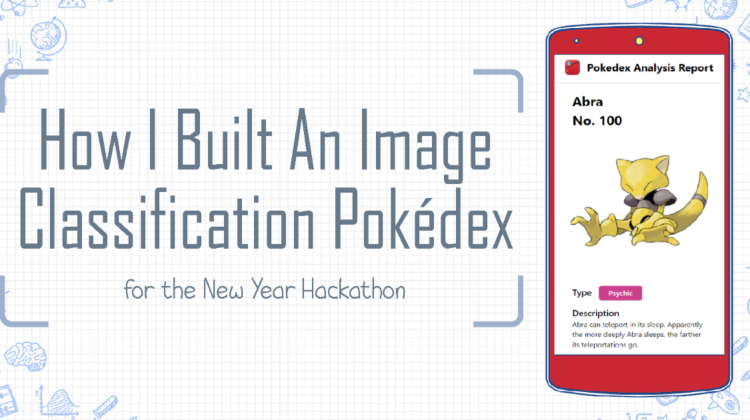

It is a web app that can identify the original 151 species of Pokémon using a custom image classification model. The user first supplies an image, either an existing 2D image or a photo taken with a camera, and then clicks ‘Run Pokédex Analysis’. The model returns its prediction, and the app fetches the data for the predicted Pokémon. The data is displayed on the Pokédex Analysis Report page, and the app reads aloud the Pokémon’s name, type and description.

On the web, a ‘Pokédex’ is just a website where users can search for and view any Pokémon’s data. But in my childhood, a Pokédex was the cool electronic device that could recognize the Pokémon it was pointed at and return that Pokémon’s information in a robotic voice. So the motivation behind this project was to turn my childhood dream into reality.

1. Gathering Images

First, I had to build a custom image classification model, one that knows all 151 species. So I got down to business and started collecting Pokémon images.

I gathered various types of pictures, not just simple 2D images like this:

The model should recognize this is Pikachu.

But also ones that are from movies, games, cards, goods, etc.

The model should also recognize this is Pikachu.

In total, I had over 10,000 images of Pokémon for the 151 species that the model will learn. 10,216 to be exact.

2. AutoML Vision

Then, I used the Google Cloud AutoML Vision API to label each image with its Pokémon name.

After that, I started training the model. Because of the time limit of this 48-hour hackathon, I could only train twice, and both runs achieved identical precision results, probably because I used the same training data both times.

3. Export model

Once I was satisfied with the model, I exported it as a TensorFlow.js package so it could run in the browser as part of a web app.
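As a rough sketch of how the exported model's output might be consumed: AutoML Vision Edge models exported for TensorFlow.js are commonly loaded with the `@tensorflow/tfjs-automl` package, whose `classify` call resolves to a list of `{ label, prob }` entries. The helper below only assumes that output shape. The function name `topPrediction` and the sample scores are made up for illustration and are not taken from our app.

```typescript
// One entry of the classifier output (assumed shape: a label plus a score in [0, 1]).
interface Prediction {
  label: string;
  prob: number;
}

// Return the highest-scoring prediction, or null when the list is empty.
function topPrediction(predictions: Prediction[]): Prediction | null {
  let best: Prediction | null = null;
  for (const p of predictions) {
    if (best === null || p.prob > best.prob) {
      best = p;
    }
  }
  return best;
}

// Illustrative scores only, not real model output.
const sample: Prediction[] = [
  { label: "Raichu", prob: 0.04 },
  { label: "Pikachu", prob: 0.93 },
  { label: "Eevee", prob: 0.03 },
];
console.log(topPrediction(sample)?.label); // Pikachu
```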

4. The App

Building the app was the simplest part of this project. My team and I used TailwindCSS for styling, Chart.js to display interactive charts and axios to fetch data from PokéAPI.
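To give an idea of the data-fetching step, here is a minimal sketch of how a predicted label could be turned into a PokéAPI request and how the response could be reduced to what a report page and a stats chart need. PokéAPI serves Pokémon data at `https://pokeapi.co/api/v2/pokemon/{name}`, with `types` and `stats` arrays in the response. The helper names `pokemonUrl` and `summarize` are hypothetical, and the sketch assumes the lowercased model label is already a valid PokéAPI name, which will not hold for every species (Mr. Mime, for example).

```typescript
// Minimal subset of the PokéAPI /pokemon/{name} response used in this sketch.
interface PokemonResponse {
  id: number;
  name: string;
  types: { slot: number; type: { name: string } }[];
  stats: { base_stat: number; stat: { name: string } }[];
}

const POKEAPI_BASE = "https://pokeapi.co/api/v2";

// Build the request URL from a model label.
// Assumption: the trimmed, lowercased label is a valid PokéAPI name.
function pokemonUrl(label: string): string {
  const name = label.trim().toLowerCase();
  return `${POKEAPI_BASE}/pokemon/${encodeURIComponent(name)}`;
}

// Reduce the response to the fields a report page and a chart would use.
function summarize(data: PokemonResponse) {
  return {
    id: data.id,
    name: data.name,
    types: data.types.map((t) => t.type.name),
    statLabels: data.stats.map((s) => s.stat.name),
    statValues: data.stats.map((s) => s.base_stat),
  };
}

// Hand-written sample in the same shape, so the sketch runs without a network call.
const sampleResponse: PokemonResponse = {
  id: 25,
  name: "pikachu",
  types: [{ slot: 1, type: { name: "electric" } }],
  stats: [
    { base_stat: 35, stat: { name: "hp" } },
    { base_stat: 55, stat: { name: "attack" } },
  ],
};

console.log(pokemonUrl("Pikachu"));
console.log(summarize(sampleResponse));
```

In a real app, the JSON would come from something like `axios.get(pokemonUrl(label))`, with the payload available on the response's `data` property. The `statLabels` and `statValues` arrays map naturally onto the labels and data of a chart.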

For our app to talk, we used the Cloud Text-to-Speech API and ta-da! The first image-classifying Pokédex was born!
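For the curious, this is roughly what a request to the Text-to-Speech REST endpoint (`text:synthesize`) can look like. It is only a sketch: the helper name `buildSpeechRequest`, the sentence template, the `en-US` voice and the placeholder description are assumptions for illustration, not the exact values we used.

```typescript
// Subset of the Cloud Text-to-Speech v1 synthesize request body.
interface SynthesizeRequest {
  input: { text: string };
  voice: { languageCode: string };
  audioConfig: { audioEncoding: "MP3" | "LINEAR16" | "OGG_OPUS" };
}

const TTS_ENDPOINT = "https://texttospeech.googleapis.com/v1/text:synthesize";

// Build the text to read aloud (name, type, description) and wrap it in a request body.
// The sentence template and the voice settings are illustrative choices.
function buildSpeechRequest(
  name: string,
  types: string[],
  description: string
): SynthesizeRequest {
  const text = `${name}. ${types.join(" and ")} type. ${description}`;
  return {
    input: { text },
    voice: { languageCode: "en-US" },
    audioConfig: { audioEncoding: "MP3" },
  };
}

// Placeholder description, not a real Pokédex entry.
const request = buildSpeechRequest(
  "Bulbasaur",
  ["grass", "poison"],
  "Placeholder description."
);
console.log(TTS_ENDPOINT);
console.log(JSON.stringify(request, null, 2));
```

The body would be sent as a POST to the endpoint together with an access token, and the response carries the audio as a base64 string in its `audioContent` field.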

Due to lack of time, some Pokémon species had more training data than others. While the app generally returned correct results, it also produced some funny ones.

First, I tested with 2D images of Pokémon, and the app returned and displayed the correct Pokémon with almost 99% accuracy. So I decided to use camera images to test how accurate the model truly is.

Lucky for me, I had a few Pokémon objects lying around my house to serve as my testing data. The first tests were with front-facing Pokémon plushes. Even with a complex background behind them, the model classified all of them accurately.

Images from left to right: the model correctly identifies Slowpoke, Vaporeon and Snorlax.

At off angles and with many items in the background, the model could still recognize some Pokémon, but not all.

Images from left to right: the model incorrectly identifies the Abra’s side view as an Alakazam, but correctly identifies Flareon’s side view as a Flareon.

Even for Pokémon in different colours, the model still showed pretty good accuracy.

In the picture above, the model correctly identifies Vulpix, even though its original colour is orange, not white.

Because the Text-to-Speech API requires authentication and an access token, Text-to-Speech will not work in our app for anyone other than my team and me.

A potential solution to this problem is to download all 151 Pokémon species’ audio files beforehand and just play the audio when that Pokémon’s data is displayed.
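The pre-generated audio idea could be sketched like this: map each Pokémon name to a static audio file and play that file instead of calling the API. The `audio/` directory, the `.mp3` extension and the slug rule are hypothetical choices for illustration.

```typescript
// Hypothetical folder where one pre-generated clip per species would be stored.
const AUDIO_DIR = "audio";

// Turn a display name into a file-friendly slug:
// lowercase, runs of non-alphanumerics become a single hyphen, edge hyphens removed.
function audioSlug(name: string): string {
  return name
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-")
    .replace(/^-+|-+$/g, "");
}

// Path of the pre-generated clip for a given Pokémon name.
function audioPathFor(name: string): string {
  return `${AUDIO_DIR}/${audioSlug(name)}.mp3`;
}

console.log(audioPathFor("Bulbasaur")); // audio/bulbasaur.mp3
console.log(audioPathFor("Mr. Mime")); // audio/mr-mime.mp3
```

In the browser, the clip could then be played with `new Audio(audioPathFor(name)).play()`, which needs no access token at all.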

Currently, the model can only return a Pokémon, which means that even if the test image is not a Pokémon, it will return its best guess.

From left to right: Mario is classified as a Krabby and Koya is classified as a Squirtle, even though neither is a Pokémon.

A next step for this app is to fix this issue by allowing the model to return ‘Not Pokémon’ if the confidence level for its best guess is below a certain threshold. That way, it won’t classify random things as Pokémon.
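A minimal sketch of that threshold check, assuming the model output is a list of `{ label, prob }` entries. The function name `classifyWithThreshold` and the default threshold of 0.6 are placeholders; the actual cut-off would have to be tuned on real test images.

```typescript
// One entry of the classifier output (assumed shape).
interface Prediction {
  label: string;
  prob: number;
}

const NOT_POKEMON = "Not Pokémon";

// Return the best label only if its confidence reaches the threshold;
// otherwise report that the image is probably not a Pokémon.
// The default threshold is an arbitrary placeholder, not a tuned value.
function classifyWithThreshold(
  predictions: Prediction[],
  threshold: number = 0.6
): string {
  let best: Prediction | null = null;
  for (const p of predictions) {
    if (best === null || p.prob > best.prob) {
      best = p;
    }
  }
  if (best === null || best.prob < threshold) {
    return NOT_POKEMON;
  }
  return best.label;
}

// Illustrative scores only, not real model output.
console.log(classifyWithThreshold([{ label: "Krabby", prob: 0.35 }])); // Not Pokémon
console.log(classifyWithThreshold([{ label: "Pikachu", prob: 0.93 }])); // Pikachu
```

One caveat: a classifier can still be very confident about an image it has never seen anything like, so a threshold reduces these mistakes rather than eliminating them.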

So what happens if a picture like the one below is supplied by the user?

The model will return the most apparent Pokémon in the image. In this case, that is Bulbasaur, because he is in the middle.

This can be an issue if the user supplies a picture with many Pokémon in it. Hence, as a next step, it is essential to make the model recognize multiple Pokémon, or at least return a message asking the user to re-take the photo or supply a new image.
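Truly recognizing several Pokémon in one picture would need an object detection model rather than a classifier. As a cheaper stopgap, the "re-take the photo" message could be triggered with a simple heuristic: if the two best guesses are close to each other, the image is treated as ambiguous. This is my own illustrative heuristic, not something the app does today, and the name `needsRetake` and the 0.2 margin are placeholders.

```typescript
// One entry of the classifier output (assumed shape).
interface Prediction {
  label: string;
  prob: number;
}

// Heuristic: the image is ambiguous when the gap between the two best
// scores is smaller than minMargin. The default margin is an untuned placeholder.
function needsRetake(predictions: Prediction[], minMargin: number = 0.2): boolean {
  // Sort a copy from highest to lowest score, leaving the input untouched.
  const sorted = [...predictions].sort((a, b) => b.prob - a.prob);
  const first = sorted[0];
  const second = sorted[1];
  if (first === undefined || second === undefined) {
    return false; // fewer than two candidates, nothing to compare
  }
  return first.prob - second.prob < minMargin;
}

// Illustrative scores only, not real model output.
const crowded: Prediction[] = [
  { label: "Bulbasaur", prob: 0.45 },
  { label: "Charmander", prob: 0.4 },
  { label: "Squirtle", prob: 0.15 },
];
console.log(needsRetake(crowded)); // true
```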

The final step for this app is not an issue fix, but an expansion. A true Pokédex has to be a portable device you can carry around. So my team and I are not only planning to include more Pokémon in the model (there are actually over 900 species of them), but we are also planning to build a mobile app version with live camera detection.

That would really make any phone feel like a real Pokédex!

Ultimately, it’s all about having fun while learning something new. We are humbled that this project, which only started because of our childhood dream, earned us an astonishing 3rd place in the hackathon.

But this is just the beginning of a developer’s journey to build something awesome every day. Thanks for reading, happy new year and cheers!