Text generation is one of the many breakthroughs of machine learning, and it comes in handy across the creative arts: songwriting, poems, short stories and even novels. I decided to channel my inner Shakespeare by building and training a neural network that generates poems by predicting the next words from a seed text using an LSTM (long short-term memory) network. LSTMs are a go-to model for text generation, and they are preferred over plain RNNs because plain RNNs are prone to vanishing and exploding gradients, which makes it hard for them to learn long-range dependencies.

I sourced the data from a combination of dialogues from Shakespeare's works, spanning over 2,500 lines of text, and saved it as a .txt file. Next, I tokenized the file and created input sequences from the lists of tokens. I then padded these sequences to a common length and split them into predictors and labels.

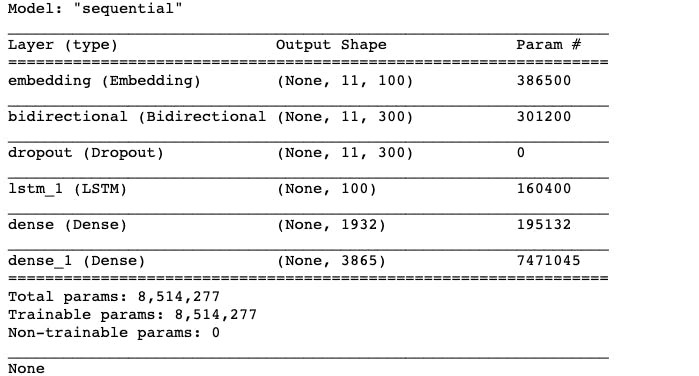
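To make the preprocessing steps concrete, here is a minimal, dependency-free sketch. It assumes the common next-word setup: every line is expanded into its growing prefixes, each prefix is left-padded with zeros, the last token becomes the label and the rest become the predictors. The helper names and the two-line toy corpus are made up for illustration; in a Keras project, utilities such as `Tokenizer` and `pad_sequences` would typically do this work.

```python
def build_vocab(lines):
    """Map each distinct lowercase word to an integer id, starting at 1 (0 is kept for padding)."""
    word_index = {}
    for line in lines:
        for word in line.lower().split():
            if word not in word_index:
                word_index[word] = len(word_index) + 1
    return word_index


def make_sequences(lines, word_index):
    """Expand every line into its prefixes: [w1, w2], [w1, w2, w3], ..."""
    sequences = []
    for line in lines:
        tokens = [word_index[w] for w in line.lower().split()]
        for end in range(2, len(tokens) + 1):
            sequences.append(tokens[:end])
    return sequences


def pad_pre(sequences, max_len):
    """Left-pad every sequence with zeros so that all have length max_len."""
    return [[0] * (max_len - len(seq)) + seq for seq in sequences]


def split_predictors_labels(padded, total_words):
    """Use all tokens but the last as predictors and a one-hot vector of the last token as the label."""
    predictors = [seq[:-1] for seq in padded]
    labels = []
    for seq in padded:
        one_hot = [0] * total_words
        one_hot[seq[-1]] = 1
        labels.append(one_hot)
    return predictors, labels


# Toy corpus, invented for this example (the real input is the Shakespeare .txt file).
corpus = ["shall i compare thee", "thou art more lovely"]

word_index = build_vocab(corpus)
total_words = len(word_index) + 1  # +1 for the padding id 0
sequences = make_sequences(corpus, word_index)
max_len = max(len(seq) for seq in sequences)
predictors, labels = split_predictors_labels(pad_pre(sequences, max_len), total_words)
```

Each row of `predictors` is a fixed-length context and the matching row of `labels` marks the word that follows it, which is the shape a softmax output layer trained with categorical cross-entropy expects.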

I built the neural network using Keras. I added an embedding layer, a bidirectional LSTM, a dropout of 20%, a second LSTM and two dense layers with ReLU and softmax activations. I also added a regularizer to prevent overfitting. I compiled the model with categorical cross-entropy as the loss, the Adam optimizer and accuracy as the metric. A summary of the model is shown below.
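As a rough illustration of that layer stack, the sketch below wires the same sequence of layers together in Keras. The post does not give the layer sizes, so the embedding dimension, the LSTM unit counts, the width of the ReLU layer, and the choice of an L2 regularizer with strength 0.01 are all assumptions, and `build_model` is a hypothetical helper name.

```python
from tensorflow.keras import layers, models, regularizers


def build_model(total_words, max_sequence_len):
    """Embedding -> Bidirectional LSTM -> Dropout(0.2) -> LSTM -> Dense(ReLU) -> Dense(softmax)."""
    model = models.Sequential([
        # Each input row holds max_sequence_len - 1 token ids (the last token is the label).
        layers.Input(shape=(max_sequence_len - 1,)),
        layers.Embedding(total_words, 100),                             # assumed embedding size
        layers.Bidirectional(layers.LSTM(150, return_sequences=True)),  # assumed unit count
        layers.Dropout(0.2),                                            # 20% dropout, as in the post
        layers.LSTM(100),                                               # assumed unit count
        layers.Dense(
            total_words // 2,                                           # assumed layer width
            activation="relu",
            kernel_regularizer=regularizers.l2(0.01),                   # assumed regularizer and strength
        ),
        layers.Dense(total_words, activation="softmax"),                # one probability per vocabulary word
    ])
    model.compile(
        loss="categorical_crossentropy",
        optimizer="adam",
        metrics=["accuracy"],
    )
    return model
```

Calling `build_model(total_words, max_sequence_len).summary()` prints the layer table for whatever sizes are chosen.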

I trained the model for 100 epochs and reached a loss of 1.5040 and an accuracy of 0.7364. I plotted the model's accuracy and loss using matplotlib.pyplot; the curves are shown below.
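A plot like this can be produced from the per-epoch metrics that Keras records during training. The sketch below assumes those metrics are available as a dictionary with `"accuracy"` and `"loss"` keys, which is what `model.fit(...).history` holds when the model is compiled with the accuracy metric. `plot_history` is a hypothetical helper name.

```python
import matplotlib.pyplot as plt


def plot_history(history):
    """Draw training accuracy and loss per epoch, side by side, and return the figure."""
    fig, (ax_acc, ax_loss) = plt.subplots(1, 2, figsize=(10, 4))

    ax_acc.plot(history["accuracy"])
    ax_acc.set_title("Training accuracy")
    ax_acc.set_xlabel("Epoch")
    ax_acc.set_ylabel("Accuracy")

    ax_loss.plot(history["loss"])
    ax_loss.set_title("Training loss")
    ax_loss.set_xlabel("Epoch")
    ax_loss.set_ylabel("Loss")

    fig.tight_layout()
    return fig
```

With a trained model this would be called as `plot_history(history.history)`, where `history` is the object returned by `model.fit`, followed by `plt.show()`.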

Finally, I passed in a seed text, which is the starting point from which the poem is generated, and set the number of next words to 100. The generated poem is shown below.
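The generation loop itself is short: encode the text so far, pad it, ask the model for next-word probabilities, append the most likely word, and repeat. The sketch below assumes greedy decoding (always taking the most probable word) and takes the model as a plain callable, `predict_fn`, so that the loop can be read and run without Keras. The function name, its parameters and the toy predictor are hypothetical.

```python
def generate_poem(seed_text, next_words, word_index, max_len, predict_fn):
    """Greedily extend seed_text by up to next_words words.

    predict_fn takes a list of max_len - 1 token ids and returns one
    probability per vocabulary id (index 0 is the padding id).
    """
    index_word = {idx: word for word, idx in word_index.items()}
    text = seed_text
    for _ in range(next_words):
        # Encode the text so far, skipping words that are not in the vocabulary.
        tokens = [word_index[w] for w in text.lower().split() if w in word_index]
        # Keep only the most recent tokens and left-pad with zeros.
        tokens = tokens[-(max_len - 1):]
        padded = [0] * (max_len - 1 - len(tokens)) + tokens
        probs = predict_fn(padded)
        best = max(range(len(probs)), key=probs.__getitem__)
        word = index_word.get(best)
        if word is None:  # the model picked the padding id, so stop early
            break
        text += " " + word
    return text


# Toy stand-in for a trained model: it always predicts the next id in a 1 -> 2 -> 3 -> 1 cycle.
def toy_predict(padded):
    next_id = padded[-1] % 3 + 1
    probs = [0.0] * 4
    probs[next_id] = 1.0
    return probs


toy_vocab = {"to": 1, "be": 2, "or": 3}
poem = generate_poem("to", 4, toy_vocab, 4, toy_predict)
print(poem)  # to be or to be
```

With a trained Keras model, `predict_fn` could be a small wrapper such as `lambda padded: model.predict(np.array([padded]), verbose=0)[0]`, assuming NumPy is imported as `np`. Sampling from the predicted probabilities instead of always taking the top word is a common variation that tends to reduce repetition.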

Though some parts of the poem sound meaningless, the model can be tweaked to reach higher accuracy and generate more meaningful poems. The repo for this model can be found here, and you can reach me on my LinkedIn profile with suggestions and corrections.