The closest things we have to an “AI”

The science of extracting information from textual data has changed dramatically in the past 10 years. As the term Natural Language Processing overtook Text Mining as the name of this field, the methodology has changed tremendously as well. One of the main drivers of this change was the emergence of language models as a base for many applications that aim to distill something valuable from raw text of various kinds.

I recently completed the Natural Language Processing Specialization on Coursera, created by the great deeplearning.ai team. One of the things that fascinated me is the evolution of language models in recent years. I assume many of you have heard about GPT-3 and the potential threats it poses. But how did we come this far? How can a machine produce an article that mimics a journalist assessing the quality of the text produced by the machine?

A language model is basically a probability distribution over words (or word sequences). Practically speaking, a language model gives the probability of a certain word sequence being “valid”. Validity here does not mean grammatical validity. It means that the sequence resembles how people speak (or, to be more precise, write), which is what the language model learns. This is an important point: there is no magic in a language model. Like other machine learning applications, particularly those based on deep neural networks, it is “just” a great tool for incorporating abundant information in a concise form that can be reused in an out-of-sample context.

What can a language model do for us?

The abstract understanding of natural language, which is necessary to infer word probabilities from context, can be used for a number of tasks. Lemmatization or stemming aims to reduce a word to its most basic form, thereby dramatically decreasing the number of distinct tokens. These algorithms work better if the part-of-speech tag of the word is known: a verb’s suffixes can be utterly different from a noun’s suffixes. This is the rationale for POS tagging, a common task for a language model.

With a good language model, we can perform extractive or abstractive summarization of texts. If we have models for different languages, a machine translation system can be built on top of them. Less straightforward use-cases include question answering (with or without context, see the example at the end of the article). Language models can also be used for speech recognition, OCR, handwriting recognition and more. There is a whole spectrum of opportunities.

Types of language models

It is important to note the difference between

a) probabilistic methods, and

b) neural network based modern language models.

A simple probabilistic language model (a) is built by calculating n-gram probabilities (an n-gram being a sequence of n words, n being an integer greater than 0). An n-gram probability is the conditional probability that the n-gram’s last word follows the particular (n-1)-gram formed by its first n-1 words. In practice, it is estimated as the proportion of occurrences of that (n-1)-gram that are followed by the last word. Treating the next word as dependent only on the preceding n-1 words is a Markov assumption.
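To make the counting concrete, here is a minimal sketch for the bigram case (n = 2). The toy corpus and the function name `bigram_probability` are my own illustrative choices, and a real model would add smoothing for unseen pairs.

```python
from collections import Counter

def bigram_probability(tokens, prev_word, word):
    """Estimate P(word | prev_word) as count(prev_word, word) / count(prev_word)."""
    # Count every adjacent word pair in the corpus.
    bigram_counts = Counter(zip(tokens, tokens[1:]))
    # Count how often each word appears as a context (i.e. has a successor).
    context_counts = Counter(tokens[:-1])
    if context_counts[prev_word] == 0:
        return 0.0  # unseen context: no estimate without smoothing
    return bigram_counts[(prev_word, word)] / context_counts[prev_word]

# Hypothetical toy corpus, already tokenized by whitespace.
corpus = "the cat sat on the mat the cat ate".split()
p_cat = bigram_probability(corpus, "the", "cat")  # "the" is followed by "cat" 2 times out of 3
p_mat = bigram_probability(corpus, "the", "mat")  # "the" is followed by "mat" 1 time out of 3
print(p_cat, p_mat)
```

Note that the estimate for any pair that never appears in the corpus is exactly zero, which already hints at the problems discussed next.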

There are evident drawbacks to approach (a). Most importantly, only the preceding n-1 words affect the probability distribution of the next word. Complicated texts have wide-ranging context that may have a decisive influence on the choice of the next word, and that context may not be evident from the previous words, not even if n is 20 or 50. A following term can also influence an earlier word choice: the word United is much more probable if it is followed by States of America. Let’s call this the context problem.

On top of that, this approach scales poorly: as n grows, the number of possible n-grams skyrockets, even though most of them never occur in the text. And all the occurring probabilities (or all n-gram counts) have to be calculated and stored! In addition, the n-grams that never occur create the sparsity problem: the granularity of the probability distribution can be quite low (word probabilities take few distinct values, so many words end up with the same probability).
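A rough way to see the scaling issue is to compare how many n-grams are possible with how many actually show up. The sketch below uses a hypothetical toy corpus and a helper I named `ngram_coverage`; with a realistic vocabulary the gap is far more extreme.

```python
def ngram_coverage(tokens, n):
    """Return (possible, observed): vocabulary_size ** n vs. distinct n-grams seen."""
    vocabulary = set(tokens)
    # Sliding window of length n over the token list.
    observed = set(zip(*(tokens[i:] for i in range(n))))
    return len(vocabulary) ** n, len(observed)

# Hypothetical toy corpus with a vocabulary of 6 distinct words.
corpus = "the cat sat on the mat the cat ate".split()
for n in (1, 2, 3):
    possible, observed = ngram_coverage(corpus, n)
    print(f"n={n}: {possible} possible, {observed} observed")
```

Even here, the possible trigrams (216) dwarf the observed ones (7), and every unobserved n-gram gets the same zero count.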

Neural network based language models (b) ease the sparsity problem through the way they encode input. Embedding layers map each word to a vector of a chosen size that also captures semantic relations. If you are not familiar with word embeddings, I suggest reading this article. These continuous vectors create the much needed granularity in the probability distribution of the next word. Moreover, the language model is practically a function (as all neural networks are, with lots of matrix computations), so it is not necessary to store all n-gram counts to produce the probability distribution of the next word.
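The "model as a function" idea can be sketched in a few lines. Everything below is an assumption made for illustration: the vocabulary, the embedding size, the random (untrained) weights, and the choice to average the context embeddings before scoring. It is not how any particular published model is implemented.

```python
import math
import random

random.seed(0)
vocabulary = ["the", "cat", "sat", "on", "mat"]
embedding_size = 3  # chosen freely here; real models use far larger vectors

# Untrained, random parameters: one embedding and one output weight vector per word.
embeddings = {w: [random.uniform(-1, 1) for _ in range(embedding_size)] for w in vocabulary}
output_weights = {w: [random.uniform(-1, 1) for _ in range(embedding_size)] for w in vocabulary}

def next_word_distribution(context_words):
    """Average the context embeddings, score each vocabulary word, apply softmax."""
    context_vector = [
        sum(embeddings[w][d] for w in context_words) / len(context_words)
        for d in range(embedding_size)
    ]
    scores = {
        w: sum(a * b for a, b in zip(output_weights[w], context_vector))
        for w in vocabulary
    }
    # Numerically stable softmax over the scores.
    max_score = max(scores.values())
    exps = {w: math.exp(s - max_score) for w, s in scores.items()}
    total = sum(exps.values())
    return {w: e / total for w, e in exps.items()}

distribution = next_word_distribution(["the", "cat"])
print(distribution)
```

No n-gram table is stored anywhere: the distribution is computed from the parameters, and every word gets a non-zero, finely graded probability.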

Even though the sparsity problem is eased, the context problem remains even with neural networks. The development of language models has largely been about solving the context problem: first, bringing more and more context words to influence the probability distribution, and doing so more efficiently; second, creating an architecture that gives the model the ability to learn which context words are more important than others.

The first neural model, outlined above, is basically a dense (or hidden) layer and an output layer stacked on top of a Continuous Bag-of-Words (CBOW) Word2Vec model. A CBOW Word2Vec model is trained to guess a word from its context (a Skip-Gram Word2Vec model does the opposite: it guesses the context from the word). In practice, it is trained on a lot of examples of the following setup: the inputs are the n words before and/or after a word, and that word is the output. We can see that the context problem remains unsolved, since only a fixed window of words is considered.
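The training setup described above can be sketched as a function that turns a sentence into (context, target) pairs. The function name `cbow_examples` and the `window` parameter are my own naming, not taken from any library.

```python
def cbow_examples(tokens, window):
    """Return (context_words, target_word) pairs using up to `window` words per side."""
    examples = []
    for i, target in enumerate(tokens):
        left = tokens[max(0, i - window):i]    # words before the target
        right = tokens[i + 1:i + 1 + window]   # words after the target
        examples.append((left + right, target))
    return examples

sentence = "the cat sat on the mat".split()
examples = cbow_examples(sentence, window=2)
for context, target in examples:
    print(context, "->", target)
```

A Skip-Gram setup would simply swap the roles: the target word becomes the input and each context word becomes an output to predict.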

RNNs

An improvement in this respect is the use of Recurrent Neural Networks (RNNs). If you’d like a thorough explanation of RNNs, I suggest reading this article. Whether built from LSTM or GRU cells, such a network can take all previous words into account when choosing the next word. For a further explanation of how RNNs achieve long memory, please refer to this article. AllenNLP’s ELMo takes this notion further by using a bidirectional LSTM, so that the context both before and after the word counts.

Transformers

The main drawback of RNN based architectures stems from their sequential nature. As a result, training times soar for long sequences because the computation cannot be parallelized across time steps. The solution to this problem was the transformer architecture. It might be worth reading the original paper from Google Brain.

The GPT models from OpenAI and Google’s BERT use the transformer architecture as well. You can read more about their architectures here: GPT-3, BERT. These models also employ a mechanism called Attention, by which the model can learn which inputs deserve more attention than others in certain cases.
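For intuition, here is a minimal sketch of scaled dot-product attention, the form described in the original transformer paper, written in plain Python. The query, key and value vectors are hand-picked for illustration; in a real model they are learned projections of the input, and there are multiple attention heads.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V on lists of vectors."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt of the key size.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        output = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights

# Hand-picked toy vectors (assumptions for illustration only).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
queries = [[4.0, 0.0]]
outputs, weights = attention(queries, keys, values)
print(weights[0], outputs[0])
```

The query points along the first axis, so the second key, which is orthogonal to it, receives by far the smallest weight. Each query is processed independently of the others, which is what makes this computation easy to parallelize.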

In terms of model architecture, the main quantum leaps were, first, neural networks with embeddings, which eased the sparsity problem and made language models use much less disk space, and RNNs (specifically LSTM and GRU), which brought far more context into play. Then came the transformer architecture, which made parallelization possible and is built around attention mechanisms. But architecture is not the only aspect a language model can excel in.

Compared to the GPT-1 architecture, GPT-3 has virtually nothing novel. But it is huge. It has 175 billion parameters, and it was trained on one of the largest corpora a model had been trained on at the time, drawn largely from Common Crawl. This is partly possible because of the self-supervised training strategy of a language model (often loosely called semi-supervised): any word can be left out of a text and used as a training example, so no manual labeling is needed. The incredible power of GPT-3 comes from the fact that it has read a large share of the text that appeared on the internet in the past years, and it has the capacity to capture most of the complexity of natural language.
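The point about getting training examples for free can be illustrated with a short sketch. The function name `masked_examples` and the placeholder token are my own choices for illustration; actual models use their own tokenizers and masking schemes.

```python
def masked_examples(text, mask_token="<M>"):
    """Build one (masked_text, missing_word) pair per word, with no manual labels."""
    tokens = text.split()
    examples = []
    for i, word in enumerate(tokens):
        # Replace the i-th word with the placeholder; the word itself is the label.
        masked = tokens[:i] + [mask_token] + tokens[i + 1:]
        examples.append((" ".join(masked), word))
    return examples

examples = masked_examples("language models learn from raw text")
for masked_text, answer in examples:
    print(masked_text, "->", answer)
```

A six-word sentence already yields six training examples. GPT-style models use a closely related setup in which the word to predict is always the next one, given only the words to its left.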

Trained for multiple purposes

Finally, I’d like to show the T5 model from Google. Previously, language models were used for standard NLP tasks, like part-of-speech (POS) tagging or machine translation, with slight modifications. For example, with a little retraining, BERT can be a POS tagger, because of its abstract ability to understand the underlying structure of natural language. Here is an implementation of it on GitHub.

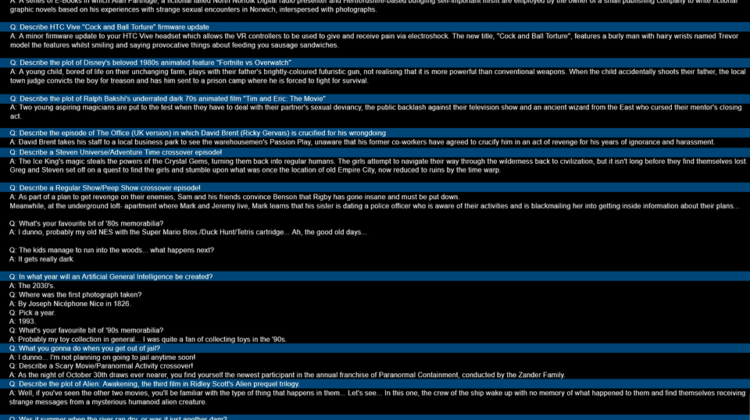

With T5, there is no need for any modification for NLP tasks. If it gets a text with some <M> tokens in it, it knows that those tokens are gaps to fill with the appropriate words. It can also answer questions. If it gets some context after the question, it searches the context for the answer; if not, then it answers from its own knowledge. Fun fact: in a trivia quiz, it has beaten its creators! The picture above shows some other use-cases as well.

Personally, I think this is the field where we are closest to creating an AI. There is a lot of buzz around this word, and many simple decision systems and almost any neural network are called AI, but this is mainly marketing. According to Oxford University Press, or just about any dictionary, Artificial Intelligence is human-like intelligence capabilities performed by a machine. In fairness, transfer learning shines in the field of computer vision too, and the notion of transfer learning is essential for an AI system. But the very fact that the same model can do a wide range of NLP tasks, and can infer what to do from the input, is itself spectacular and brings us one step closer to actually creating human-like intelligence systems.