For the second year in a row, Gary Marcus, CEO and founder of Robust.AI and professor emeritus at New York University, appeared live in the AI Debate series hosted by Montréal.AI. This time he came not to spar with Turing Award winner Yoshua Bengio, but to moderate three panel discussions on how to move AI forward.

“Last year, in the first annual December AI Debate, Yoshua Bengio and I discussed — what I think is one of the key debates in the last decade — are big data and deep learning alone enough to get to artificial general intelligence (AGI)?” said Marcus as he launched what he termed “the debate of next decade — how can we take AI to the next level?”

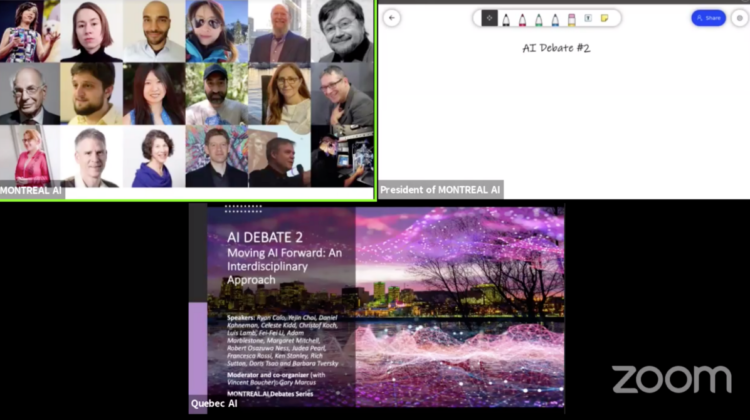

This year’s “AI Debate 2 — Moving AI Forward: An Interdisciplinary Approach” was held again on the day before Christmas Eve and featured 16 panellists — from leading AI researchers and practitioners to psychology professors, neuroscientists, and researchers on ethical AI. The four-hour event included three panel discussions: Architecture and Challenges, Insights from Neuroscience and Psychology, and Towards AI We Can Trust.

Fei-Fei Li kicked off the Architecture and Challenges panel with the presentation “In search of the next AI North Star.” Li is a researcher in computer vision and AI + healthcare, a computer science professor at Stanford University, co-director of the Stanford Human-Centered AI Institute, and co-founder and chair of AI4ALL.

Problem formulation is the first step toward any solution, and AI research is no exception, Li explains. For the past two decades or so, object recognition, a critical function of human intelligence, has guided AI researchers in their efforts to build that capability into artificial systems. Drawing on research into the evolution of human and animal nervous systems, Li says she believes the next critical AI problem is how to build interactive learning agents that use perception and actuation to learn about and understand the world.

Machine learning researcher Luis Lamb, a professor at the Federal University of Rio Grande do Sul in Brazil and Secretary of State for Innovation, Science and Technology of the State of Rio Grande do Sul, thinks the current key problem in AI is how to identify its necessary and sufficient building blocks, and how to develop trustworthy ML systems that are not only explainable but also interpretable.

Richard Sutton, distinguished research scientist at DeepMind and a computing science professor at the University of Alberta in Canada, agrees that it’s important to understand the problem before offering solutions. He points out that AI has surprisingly little computational theory. Neuroscience, he says, is missing a higher-level understanding of the goals and purposes of the mind as a whole, and the same is true of AI.

AI needs an agreed-upon computational theory, Sutton explains, and he regards reinforcement learning (RL) as the first computational theory of intelligence because it is explicit about its goal: the whats and the whys of intelligence.

“It is well-established that AI can solve problems, but what we humans can do is still very unique,” says Ken Stanley, an OpenAI research manager and a courtesy professor of computer science at the University of Central Florida. Because humans exhibit “open-ended innovation,” he argues, AI researchers need to pursue open-endedness in artificial systems as well.

Stanley emphasizes the importance of understanding what makes intelligence a fundamental aspect of humanity. He identifies several dimensions of intelligence that he believes have been neglected, such as divergence, diversity preservation, and stepping-stone collection.

Judea Pearl, Turing Award winner “for fundamental contributions to AI through the development of a calculus for probabilistic and causal reasoning” and director of the UCLA Cognitive Systems Laboratory, argues that next-level AI systems need to incorporate knowledge rather than remain purely data-driven. The idea that knowledge of the world, or common sense, is one of the fundamental missing pieces is shared by Yejin Choi, an associate professor at the University of Washington who won the AAAI-20 Outstanding Paper Award earlier this year.

The Insights from Neuroscience and Psychology panel brought in researchers from other disciplines to share their views on topics such as how understanding feedback in the brain could help build better AI systems.

The final panel, Towards AI We Can Trust, focused on AI ethics and how to deal with biases in ML systems. “Algorithmic bias is not only problematic for the direct harms it causes, but also for the cascading harms of how it impacts human beliefs,” says Celeste Kidd, a professor at UC Berkeley whose lab studies how humans form beliefs and build knowledge in the world.

Unethical AI systems are problematic because they can be embedded seamlessly in people’s everyday lives and shape human beliefs in ways that are sometimes destructive and likely irreparable, Kidd explains. “The point here is that biases in AI systems reinforce and strengthen biases in the people who use them.”

Kidd says “right now is a terrifying time for ethics in AI,” especially in light of Google’s termination of Timnit Gebru. She says “it’s clear that private interests will not support diversity, equity and inclusion. It should horrify us that the control of algorithms that drive so much of our lives remains in the hands of a homogeneous narrow-minded minority.”

Margaret Mitchell, Gebru’s co-lead on Google’s Ethical AI team and one of the co-authors of the paper at the centre of the Gebru controversy, introduced research she and Gebru had been working on. “One of the key things we were really trying to push forward in the ethical AI space is the role of foresight, and how that can be incorporated into all aspects of development.”

There’s no such thing as neutrality in algorithms or apolitical programming, Mitchell says. Human biases and differing value judgements are everywhere, from training data to system structure, post-processing steps, and model output. “We were trying to break the system — we call it bias laundering. One of the fundamental parts of developing AI ethically is to make sure that from the start there is a diversity of perspectives and background at the table.”

This point is reflected in the format chosen for this year’s AI Debate, which was designed to bring in different perspectives. As the old African proverb goes, “it takes a village to raise a child.” Marcus says it would similarly take a village to raise an AI that is ethical, robust, and trustworthy. He concludes that it was great to have some pieces of that village gather at this year’s AI Debate, and that he sees a lot of convergence in the ideas the panellists brought to the event.