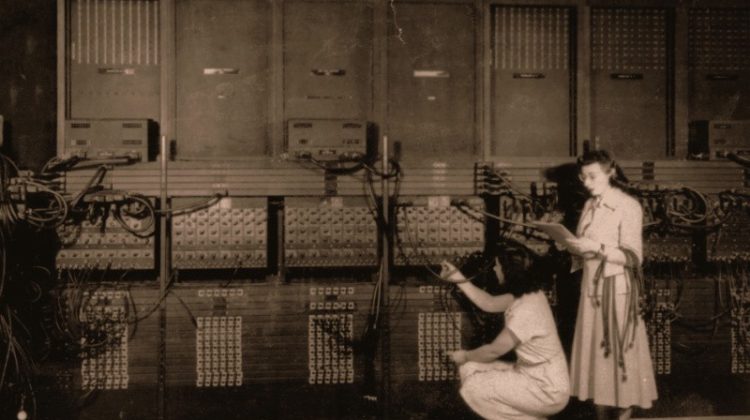

One way the machine learns.

The ability to forecast the unknown is a skill in demand. Consider the future. From a business perspective, to make effective decisions today, managers need to know what will likely happen next week, next quarter, or next year. The better we predict, the better we can plan, and the better we can prevent problems from occurring. The forecasted variable could be inventory level for a particular SKU, mortgage interest rate, shipment time, demand for a product, customer churn (loss), housing prices, employee turnover, population size, percentage of quality shipped parts, and many, many other possibilities.

How to create a forecast? Learn how variables with known values relate to the variable with the unknown value to forecast. The intrigue is that a machine can now learn these relationships.

A machine? The word machine applied here is a trendy, almost cute, but effective reference to programmed instructions running on a computer.

Machine: A computer that runs a procedure according to programmed instructions to accomplish a given task, such as learning.

People have always learned. Now machines can learn.

As applied here, learning proceeds from searching for patterns of related information across many existing examples of data. Instead of you searching the data for these patterns, the machine does the searching. And guess what? Given its staggeringly massive superiority in data processing speed, the machine can uncover some patterns much more effectively than people can. That is why we need the machine’s help.

Machine learning: Instruct the machine to identify patterns inherent in data.

For example, as your phone’s computer searches your stored photographs to find those that contain you, the color of your hair helps distinguish you from your friend.

Patterns apply to many types of content. A person’s height, for example, can predict, i.e., forecast, their weight, albeit, as with most prediction, imperfectly. These discovered relationship patterns provide the basis for a specific type of machine learning.

Supervised machine learning: Methods to develop the best possible forecast of a value of the variable of interest from the related pattern of values of other variables.

The machine expresses its learning as one or more equations that transform the related information into a forecast. Recently developed learning algorithms such as neural networks are complex, sometimes with thousands of equations from which to estimate weights. The most straightforward forecast naturally follows from a single equation, illustrated here.

Model: A prediction equation that computes the value of the forecasted variable from the values of other variables.

The prediction equation is a recipe that transforms a set of entered numeric values into a forecasted value, as illustrated in Figure 1.1.

To apply the equation, enter the related information, then compute the forecasted value.

Consider an example of an online clothing retailer who must correctly size the garment for a customer not present to verify fit. Returns annoy the customer and vaporize the profit margin for the retailer. Unfortunately, the customer sometimes omits from the online order form crucial measurements needed to fit a garment properly. If the customer omits their weight from the order, the retailer wishes to predict this unknown value from the measurements the customer did provide.

Both a person’s height and chest size relate to their weight. Leveraging these relationships, the retailer’s analyst first specifies a general form of the model. One popular choice is a model defined as a weighted sum of the variables, here height and chest size, plus a constant, what is called a linear relationship. What are the weights that optimize forecasting accuracy of a customer’s weight? That discovery is the machine’s job.

For simplicity, the initial model derived from the data analysis in this example applies only to the retailer’s male customers.

Predicted Weight = 3.80(Height) + 7.32(Chest) − 386.22

From examining the existing data values for each male customer’s height, chest size and weight, the machine learned the underlying weighted sum relationship. The machine estimated values of 3.80 and 7.32 for the respective weights of the two variables, plus the constant term of -386.22.

To apply the machine’s prediction model to a specific customer, enter that customer’s height and chest measurements into the equation in place of the variable names. Suppose the customer reports a height of 66 inches and a chest measurement of 36 inches, illustrated in Figure 1.2.

The forecasting model computes a specific number, the forecast, from the specific values for each of the variables entered into the prediction equation. For a person 66 inches tall with a chest measurement of 36 inches, forecasted weight is 128.10 lbs.
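The arithmetic behind this forecast can be checked in a few lines of R. This is only a minimal sketch: the function name `predict_weight` is introduced here for illustration and is not part of any package.

```r
# Prediction equation from the text (male customers only):
# weight in lbs from height and chest size in inches
predict_weight <- function(height, chest) {
  3.80 * height + 7.32 * chest - 386.22
}

# Customer who is 66 inches tall with a 36-inch chest
forecast <- predict_weight(height = 66, chest = 36)
print(round(forecast, 2))   # 128.1
```

Entering different measurements into the same function yields a different forecast, which is all that "applying the model" means.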

The forecasting process begins with the choice of variable to forecast.

Target: The variable with the values to forecast.

Generically refer to the target variable as y, which assumes a specific variable name in a specific application, such as customer weight in the previous example. In traditional statistics, refer to the same y as the response variable or dependent variable, among other names.

From what information does the forecast of the value of the target, y, proceed? In the online clothing retailer example, enter a customer’s height and chest size into the model to calculate the forecast.

Feature: One of the one or more variables from which to predict the value of the target variable.

Generically refer to the variables from which to compute the forecast as X, uppercase to denote that typically X consists of more than one feature: x1, x2, etc. Traditional references to the X variables include predictor variables, independent variables, or explanatory variables.

The variables of interest for supervised machine learning models span a wide variety of applications. Table 1.1 lists some business forecasting scenarios, each with three predictor variables. Some of the target variables are continuous, measured on a quantitative scale. The values of other target variables in Table 1.1 are labels, where each label defines a single category.

The many applications of machine learning contribute to its increasingly widespread use.

To begin the model development process, gather data for all the variables in the model. For computer analysis, organize the data into a table, such as in Figure 1.3, perhaps using a standard worksheet app such as Microsoft Excel, or the free and open-source LibreOffice Calc. List the data values for each variable in a single column. List the data values for each customer, in this example, in a single row.

Example: A row of data that contains the data values for the variables in the model for a specific person, company, region, or whatever the unit of analysis.

The ten rows of data shown in Figure 1.3, one row per customer, are illustrative only. In practice, provide many more examples from which the machine can learn. Also, be aware that the language for describing the data table is not always consistent. Other names for a data example, a row of data in a data table, include sample, instance, observation, and case.
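In R, such a table is a data frame. The sketch below builds a tiny one by hand; the three rows of measurements are invented purely for illustration and are not the values shown in Figure 1.3.

```r
# Hypothetical measurements, invented for illustration only:
# one row per customer (example), one column per variable
d <- data.frame(
  Height = c(66, 70, 72),     # inches
  Chest  = c(36, 40, 42),     # inches
  Weight = c(130, 170, 190)   # lbs
)

print(d)
nrow(d)    # number of examples (rows)
names(d)   # names of the variables (columns)
```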

After reading the data into your chosen machine learning application, such as those provided by the free and open-source R or Python, estimate the model with your chosen machine learning algorithm. Deriving the prediction equation(s) precedes actual forecasting. The purpose of this initial step is not to forecast, as the values of y are already known from the data table. Instead, the (estimation algorithm implemented on the) machine learns the relationship between the X variables and the known values of y from the provided data.

Training data: Data from which the machine learns the relationships among all the variables in the model to construct a prediction equation.

To continue the trendy anthropomorphic wording, we want the machine to learn enough to fully understand whatever relationship exists between y and X. The resulting forecasting will not be perfect, but hopefully good enough to be useful.

The machine learns the patterns that relate the features to the target, expressed as a model. We inform the machine of only the general form of the model before learning begins. From this general form, such as a weighted sum in our online clothing retailer example, the machine analyzes the example training data to construct a specific model by estimating values of the weights. These weights optimize some aspect of forecasting accuracy, at least as it applies to the training data from which the model was derived.
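To watch this estimation happen without the retailer's actual data, which is not reproduced here, the following sketch simulates customers from the stated equation plus random noise and then lets base R's `lm()` function estimate the weights by least squares. The sample size, the means and standard deviations, and the noise level are all assumptions chosen only for illustration.

```r
set.seed(123)                              # reproducible simulated data
n <- 200
Height <- rnorm(n, mean = 69, sd = 3)      # inches (assumed distribution)
Chest  <- rnorm(n, mean = 40, sd = 3)      # inches (assumed distribution)
Weight <- 3.80 * Height + 7.32 * Chest - 386.22 + rnorm(n, sd = 5)
train  <- data.frame(Height, Chest, Weight)

# The analyst specifies only the general form: a weighted sum plus a constant
fit <- lm(Weight ~ Height + Chest, data = train)

coef(fit)   # estimated constant and weights learned from the examples
```

Because the simulated data contain noise, the estimated weights land near, but not exactly on, the values used to generate the data.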

We do not directly teach the machine.

The (estimation algorithm implemented on the) machine learns not by instruction, but by example.

The machine learning software provides the instructions for the machine to learn by analyzing the examples.

For each row of the training data, the learning algorithm relates the values of the features to the value of the corresponding target. The value of the target variable for any row of data is the correct answer, the value of y. The target variable provides the “supervision” that guides pattern discovery in the data related to it. Machine learning algorithms choose weights for the features so that, averaged across all the examples, the values of y computed from the model are as close to the actual values of y as possible.

Fitted value: Value of the target variable computed from the prediction equation, the model.

For each example, the comparison of this fitted value to the corresponding actual value becomes the basis for the machine learning the relationships.

Error: Difference between the actual value of the target variable, and the value of the target fitted by the model.
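As a small worked illustration, the sketch below computes fitted values and errors from the clothing retailer's equation. The three customers are hypothetical, with values invented for this example.

```r
# Hypothetical training examples, invented for illustration
Height <- c(66, 70, 72)             # inches
Chest  <- c(36, 40, 42)             # inches
Weight <- c(130, 170, 190)          # actual values of the target, lbs

fitted_values <- 3.80 * Height + 7.32 * Chest - 386.22   # model's fitted values
error <- Weight - fitted_values                          # actual minus fitted

print(data.frame(Weight, fitted_values, error))
print(mean(error^2))                # one summary of overall error: mean squared error
```

A learning algorithm seeks the weights that make a summary such as this mean squared error as small as possible.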

The fitted value from the training data is not a forecast. The learning algorithm must know the correct answer for each of many examples. In contrast, forecasting applies to unknown values.

The machine generally discovers relationships by trial and error, often applying a process called gradient descent. The algorithm examines each example of data to relate the X variables to y. The algorithm, based upon the form of the model decided by the analyst, then tries various specific values for the weights across a series of successive steps. It revises the weights at each step, with the aim of obtaining less overall error across all the examples than at the previous step. At some point, the machine concludes it cannot find a set of weights that results in meaningfully less error than already obtained, such as the weights 3.80, 7.32, and -386.22 in our clothing example.
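A bare-bones version of gradient descent for a weighted-sum model might look like the following sketch. It runs on simulated data, and the step size, the number of steps, and the choice to standardize the features are assumptions made for illustration, not a description of how any particular package estimates its models.

```r
set.seed(1)
n <- 200
Height <- rnorm(n, mean = 69, sd = 3)
Chest  <- rnorm(n, mean = 40, sd = 3)
Weight <- 3.80 * Height + 7.32 * Chest - 386.22 + rnorm(n, sd = 5)

# Standardize the features so that one step size suits every weight
zHeight <- as.numeric(scale(Height))
zChest  <- as.numeric(scale(Chest))
X <- cbind(1, zHeight, zChest)          # column of 1s for the constant

w    <- c(0, 0, 0)                      # starting values for the weights
rate <- 0.1                             # step size (assumed)
mse  <- numeric(500)
for (step in 1:500) {
  error     <- Weight - as.numeric(X %*% w)           # actual minus fitted
  mse[step] <- mean(error^2)                          # overall error before the update
  gradient  <- -2 * as.numeric(t(X) %*% error) / n    # slope of the error surface
  w <- w - rate * gradient                            # step against the gradient
}

w                                       # weights found by gradient descent
coef(lm(Weight ~ zHeight + zChest))     # least-squares weights, for comparison
```

The weights here are on the standardized scale, so they differ from 3.80 and 7.32, but they should agree closely with the least-squares solution that `lm()` computes directly.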

There are many possibilities for the machine to learn from the data. Choose from many different:

- Types of models such as linear regression and non-linear regression, support vector machines, random forests, and neural networks.

- Solution algorithms to estimate the weights of the given model.

- Specific settings chosen for the solution algorithm, each of which can be tuned to potentially obtain better forecasting performance.

To learn how to do machine learning is to learn how to invoke these different types of models, estimation algorithms, and their associated settings, to derive the most effective forecasting model from a given set of data. An expert in machine learning understands all of these models and corresponding algorithms, how to implement them for computer analysis, how to best tune each procedure to obtain the best performance, and then ultimately deploy the learned model to the relevant forecasting setting. To attain a high level of expertise requires much practice and some mathematical training to better understand how the different procedures work for more effective tuning.

Perhaps other algorithms, functional forms, or features would have led to a more useful forecasting model in our online retail clothing example. Under the functional form imposed by the analyst, however, and given the chosen estimation algorithm, the resulting estimated weights (3.80, 7.32, and -386.22) minimize some function of the errors as applied to the training data.

Teachers test students on their learning. The analyst tests the machine on its learning. People, and now machines, can try to learn, but was the learning successful? Maybe the person or machine could not apply a successful learning strategy, or maybe there was nothing there to learn, randomness rather than structure.

To validate an obtained model, test what the machine has learned on new examples, on previously unseen data. What is the source of this unseen data?

Testing data: A split of the original data held back from model estimation to test how well the machine can forecast from new data.

The machine already knows the values of y for the training data. The analyst knows the values of y for each set of example values of X in the testing data, but not the machine. When the model is applied to generate real-world predictions, neither the machine nor the analyst knows the value of y. Hence, the need for a prediction, or forecast.

Forecast: The fitted value the model computed from the values of the features with an unknown value of y.

Splitting the original data into training and testing data sets is a fundamental concept of data science. As with the training data, define an error as the difference between the actual value of y, and the model’s fitted value, the comparison of what is with what the model specifies to be.
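One simple way to perform such a split in base R is sketched below, again on simulated data. The 70/30 proportion and the helper function `rmse` are assumptions introduced for this illustration.

```r
set.seed(42)
n <- 200
Height <- rnorm(n, mean = 69, sd = 3)
Chest  <- rnorm(n, mean = 40, sd = 3)
Weight <- 3.80 * Height + 7.32 * Chest - 386.22 + rnorm(n, sd = 5)
d <- data.frame(Height, Chest, Weight)

train_rows <- sample(n, size = round(0.7 * n))   # 70% of the rows, chosen at random
train <- d[train_rows, ]                         # data for estimating the model
test  <- d[-train_rows, ]                        # data held back for testing

fit <- lm(Weight ~ Height + Chest, data = train)

# Root mean squared error: a typical size of the errors, in lbs
rmse <- function(actual, fitted) sqrt(mean((actual - fitted)^2))
rmse(train$Weight, predict(fit, newdata = train))   # error on the training data
rmse(test$Weight,  predict(fit, newdata = test))    # error on the testing data
```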

Figure 1.4 illustrates a true forecast, where the true value of the target variable is unknown.

The need to evaluate the model with new data reveals an essential, but sobering, reality of machine learning. Eventually the unknown value of y will become known, and the associated error computed. The error from a forecast applied to new data, a true forecast, averages higher than the error from the model applied to the data from which the model was estimated, already knowing the answer.

The learning (estimation) process is biased in favor of its training data. The problem is that random variation across samples, called sampling error, ensures that every sample is different.

Overfitting: The forecasting model fits the training data too well, reflecting random perturbations of the training data sample that do not generalize to new samples.

An overfit model can compute values of the target that nicely match the true values of the already known training data, and then fail miserably when forecasting new data. The goal is not a model that minimizes the errors on the training data, but one that minimizes the errors on the testing data.
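Overfitting is easy to provoke on purpose. In the sketch below, the simulated relationship is truly linear, yet a second model is given ten polynomial terms with which to chase the noise in a small training sample. All data and settings are assumptions chosen for illustration.

```r
set.seed(7)
# Small training sample and a larger testing sample from the same linear process
train <- data.frame(x = runif(20, 0, 10))
train$y <- 2 * train$x + rnorm(20, sd = 3)
test <- data.frame(x = runif(200, 0, 10))
test$y <- 2 * test$x + rnorm(200, sd = 3)

simple   <- lm(y ~ x, data = train)             # matches the true linear form
flexible <- lm(y ~ poly(x, 10), data = train)   # many extra weights to fit noise

rmse <- function(fit, data) {
  sqrt(mean((data$y - predict(fit, newdata = data))^2))
}
c(simple = rmse(simple, train), flexible = rmse(flexible, train))   # training error
c(simple = rmse(simple, test),  flexible = rmse(flexible, test))    # testing error
```

The flexible model cannot do worse than the simple one on the training data, because it contains the simple model as a special case. On the testing data, the comparison typically reverses.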

In pursuit of a continually better fit, the analyst may adjust and re-adjust the learning process and the model itself, running and revising the learning procedure over and over on the same training data. Reserving the testing data for the assessment of forecasting accuracy is what grants this freedom. The analyst may freely tune the learning process with repeated modifications on the training data with one strong caveat: Test the final model on new data. A model cannot be properly tested on the data on which it was trained.

Model construction follows a general procedure. Regardless of the specific model and the particular implementation of a learning algorithm, the overall forecasting process remains the same.

1. Prepare: Gather, clean, transform data as needed, then split the data.

2. Learn: Train the model on some of the data.

3. Validate: With the rest of the data, calculate the forecasted values and match to the true values.

4. Deploy: If validated with sufficient accuracy, apply the model to real-world forecasting.

Our focus here has been Steps 2 and 3, the learning phase and the subsequent testing phase. What may be the easiest way to implement some machine learning algorithms is with the functions Regression(), for forecasting a continuous target variable, and Logit(), for forecasting a categorical target variable. Find these functions in my lessR package for the R data analysis system. Using these functions requires no programming expertise in R, Python, or anything else. Download the needed software, then enter the simple function calls shown next to train and validate a model.

Run R by itself, or within RStudio.

Download R. Choose Windows, or Mac (click on the link several paragraphs down the page, left-hand margin).

(Optional) Download RStudio. Choose the first option, RStudio Desktop, Open Source License.

To access functions from the lessR package, first download those functions from the official R servers. One time only, when running R, enter the following to download.

install.packages("lessR")

You will download the lessR functions and data sets, plus several dependent packages, all automatically.

From then on, to begin each R session load the lessR functions with the library function.

library("lessR")

In this example, read the data from one of the built-in lessR data sets, named Employee — handy if you want to have some fun, need some data, and are off the Internet. To read any Excel or csv (or SPSS or SAS) data set from the web, simply insert the full URL between the quotes in the lessR Read() function. To browse for a data file on your computer system, just enter the two quotes with nothing in-between. Or enter a path name.

Here read the Employee data into the d data frame, which is the default data frame for the lessR analysis functions. (For more detail regarding this data set, see my Medium article on constructing bar charts with lessR.)

d = Read("Employee")

The Read() function displays all the variable names, sample data values for each variable, and more information. The Employee data set contains variables Salary for annual salary in USD, Years worked at the company, and Pre, a score on a pre-test before some skill-based training was implemented. Here use Years and Pre as features to forecast the target Salary.

Regression(Salary ~ Years + Pre, kfold=3)

The result is a complete regression analysis, with parameter estimates and corresponding hypothesis tests and confidence intervals, various fit indices, data visualizations, forecasted values, an outlier analysis, and a multi-collinearity analysis. This is perhaps the most complete analysis obtainable from such a simple function call in the R and Python software ecosystems.

The kfold parameter requests what is called cross-validation. The data are randomly divided into three parts, called folds, and each fold in turn serves as the testing data for a model trained on the other two. The average value of R-squared across the three testing sets summarizes how well the model fits data it has not seen.
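The idea behind cross-validation can also be sketched by hand in base R. The version below is only an illustration on simulated data and makes no claim to match how lessR computes its own fit indices.

```r
set.seed(3)
n <- 150
Height <- rnorm(n, mean = 69, sd = 3)
Chest  <- rnorm(n, mean = 40, sd = 3)
Weight <- 3.80 * Height + 7.32 * Chest - 386.22 + rnorm(n, sd = 5)
d <- data.frame(Height, Chest, Weight)

k <- 3
fold <- sample(rep(1:k, length.out = n))   # randomly assign each row to one fold

r2_test <- numeric(k)
for (i in 1:k) {
  train <- d[fold != i, ]                  # train on the other folds
  test  <- d[fold == i, ]                  # test on the held-out fold
  fit   <- lm(Weight ~ Height + Chest, data = train)
  pred  <- predict(fit, newdata = test)
  # R-squared on the held-out fold: 1 - (squared error) / (total variation)
  r2_test[i] <- 1 - sum((test$Weight - pred)^2) /
                    sum((test$Weight - mean(test$Weight))^2)
}

r2_test         # fit on each testing fold
mean(r2_test)   # average fit across the three testing folds
```

Every row is used for testing exactly once, so no example is both trained on and tested on within the same fold.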

Apply the Logit() function similarly, though the kfold option is not yet available. The function does, however, provide the confusion matrix for analysis of classification errors into the given categories, along with several classification fit indices. In this example, predict Gender from the value of Salary.

Logit(Gender ~ Salary)
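For readers curious about what sits underneath such an analysis, the following base R sketch fits a logistic regression with `glm()` and tabulates a confusion matrix. The feature `x`, the binary target `y`, and the 0.5 classification threshold are hypothetical choices for illustration, applied to simulated data rather than the Employee data.

```r
set.seed(11)
n <- 200
x <- rnorm(n, mean = 60, sd = 15)         # hypothetical numeric feature
p <- plogis((x - 60) / 10)                # assumed true probability of category 1
y <- rbinom(n, size = 1, prob = p)        # hypothetical binary target, 0 or 1

fit  <- glm(y ~ x, family = binomial)     # logistic regression
prob <- predict(fit, type = "response")   # fitted probability of category 1
pred <- ifelse(prob > 0.5, 1, 0)          # classify with a 0.5 threshold

# Confusion matrix: rows are actual categories, columns are predicted categories
confusion <- table(actual    = factor(y, levels = 0:1),
                   predicted = factor(pred, levels = 0:1))
confusion
sum(diag(confusion)) / n                  # proportion classified correctly
```

These classifications are fitted values computed from the training data, so the proportion correct here is likely an optimistic guide to accuracy on new data.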

Three lines of code for doing machine learning with one of two classic learning (estimation) algorithms, traditional least-squares regression analysis or traditional logistic regression for forecasting categories. If you wish to become more expert in machine learning, probably the best way forward is to explore the scikit-learn package in the Python data ecosystem, which provides a wide range of learning algorithms. But these two lessR functions provide the easiest way to get started, and their estimation algorithms, although simpler than more recently developed methods such as neural networks, are often sufficiently, if not surprisingly, robust.

To learn more about lessR, check out my 2020 book from CRC Press, R Visualizations: Derive Meaning from Data.