History of computer vision

Early experiments in computer vision took place in the 1950s, using some of the first neural networks to detect the edges of an object and to sort simple objects into categories like circles and squares. In the 1970s, the first commercial use of computer vision interpreted typed or handwritten text using optical character recognition. This advancement was used to interpret written text for the blind.

As the internet matured in the 1990s, making large sets of images available online for analysis, facial recognition programs flourished. These growing data sets helped make it possible for machines to identify specific people in photos and videos.

Today, a number of factors have converged to bring about a renaissance in computer vision.

The effects of these advances on the computer vision field have been astounding. Accuracy rates for object identification and classification have gone from 50 percent to 99 percent in less than a decade, and today’s systems are more accurate than humans at quickly detecting and reacting to visual inputs.

Source: sas.com

A new era of cancer treatment

Traditional methods of evaluating cancerous tumors are incredibly time consuming. Because they rely on a limited amount of data, such methods can lead to mistakes, and they are prone to subjectivity. Working with SAS, Amsterdam UMC has transformed tumor evaluations through artificial intelligence. By using computer vision technology and deep learning models to increase the speed and accuracy of chemotherapy response assessments, doctors can identify cancer patients who are candidates for surgery faster, and with lifesaving precision.

Source: sas.com

Computer vision in today’s world

From recognizing faces to processing the live action of a football game, computer vision rivals and surpasses human visual abilities in many areas.

Source: sas.com

Deep learning and computer vision

How does deep learning train a computer to see? Learn how the different types of neural networks work and how they are used for computer vision.

The Evolution Of Computer Vision

Before the advent of deep learning, the tasks that computer vision could perform were very limited and required a lot of manual coding and effort by developers and human operators. For instance, if you wanted to perform facial recognition, you would have to perform the following steps:

Create a database: You had to capture individual images of all the subjects you wanted to track in a specific format.

Annotate images: Then, for every individual image, you would have to enter several key data points, such as the distance between the eyes, the width of the nose bridge, the distance between the upper lip and the nose, and dozens of other measurements that define the unique characteristics of each person.

Capture new images: Next, you would have to capture new images, whether from photographs or video content. Then you had to go through the measurement process again, marking the key points on the image. You also had to factor in the angle at which the image was taken.

After all this manual work, the application would finally be able to compare the measurements in the new image with the ones stored in its database and tell you whether it corresponded with any of the profiles it was tracking. In fact, there was very little automation involved and most of the work was being done manually. And the error margin was still large.
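The comparison step described above can be sketched as a nearest-match lookup over hand-entered measurements. This is only an illustrative sketch: the profile names, the three measurements, their values, and the `max_distance` threshold are all assumptions made up for the example, not details from any real system.

```python
import math

# Hypothetical profile database: each name maps to hand-measured facial
# distances (eye distance, nose-bridge width, lip-to-nose distance).
# All values are invented for illustration.
DATABASE = {
    "alice": (62.0, 18.5, 14.0),
    "bob": (58.0, 21.0, 16.5),
}


def match_profile(measurements, database, max_distance=3.0):
    """Return the name of the closest stored profile, or None if no
    profile lies within max_distance (an assumed threshold)."""
    best_name, best_dist = None, float("inf")
    for name, stored in database.items():
        dist = math.dist(measurements, stored)  # Euclidean distance
        if dist < best_dist:
            best_name, best_dist = name, dist
    return best_name if best_dist <= max_distance else None
```

Calling `match_profile((61.5, 18.0, 14.2), DATABASE)` would pick the stored profile with the smallest distance to the new measurements. The threshold is what makes the error margin visible: set it too tight and known faces are missed, too loose and strangers are matched.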

Machine learning provided a different approach to solving computer vision problems. With machine learning, developers no longer needed to manually code every single rule into their vision applications. Instead, they programmed “features,” smaller applications that could detect specific patterns in images. They then used a statistical learning algorithm such as linear regression, logistic regression, decision trees or support vector machines (SVM) to find patterns in those features, classify images, and detect objects in them.
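As a rough sketch of this two-stage approach, the example below pairs a hand-coded feature extractor with a small logistic regression trained by gradient descent. The feature choice (total horizontal and vertical intensity change), the tiny 3x3 images, and the learning rate and epoch count are all assumptions picked to keep the example short.

```python
import math


def edge_features(image):
    """Hand-coded features: total intensity change along rows and columns."""
    rows, cols = len(image), len(image[0])
    horiz = sum(abs(image[r][c + 1] - image[r][c])
                for r in range(rows) for c in range(cols - 1))
    vert = sum(abs(image[r + 1][c] - image[r][c])
               for r in range(rows - 1) for c in range(cols))
    return [horiz, vert]


def train_logistic(samples, labels, lr=0.1, epochs=500):
    """Fit logistic regression weights with per-sample gradient descent."""
    weights, bias = [0.0] * len(samples[0]), 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            z = sum(w * xi for w, xi in zip(weights, x)) + bias
            error = 1.0 / (1.0 + math.exp(-z)) - y  # prediction minus label
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias


def predict(weights, bias, x):
    """Return 1 if the linear score is positive, else 0."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


# Toy training set: vertical bars are labeled 1, horizontal bars 0.
IMAGES = [
    [[0, 1, 0], [0, 1, 0], [0, 1, 0]],
    [[1, 0, 0], [1, 0, 0], [1, 0, 0]],
    [[0, 0, 0], [1, 1, 1], [0, 0, 0]],
    [[1, 1, 1], [0, 0, 0], [0, 0, 0]],
]
LABELS = [1, 1, 0, 0]
WEIGHTS, BIAS = train_logistic([edge_features(img) for img in IMAGES], LABELS)
```

The learner never sees raw pixels, only the two numbers the feature extractor produces, which is why the quality of the hand-built features mattered so much in this era.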

Machine learning helped solve many problems that were historically challenging for classical software development tools and approaches. For instance, years ago, machine learning engineers were able to create software that could predict breast cancer survival windows better than human experts. However, building the features of the software required the efforts of dozens of engineers and breast cancer experts and took a lot of time to develop.

Source: towardsdatascience.com

How Long Does It Take To Decipher An Image

In short, not much. That’s the key to why computer vision is so thrilling: Whereas in the past even supercomputers might take days, weeks, or even months to chug through all the calculations required, today’s ultra-fast chips and related hardware, along with speedy, reliable internet and cloud networks, make the process lightning fast. One crucial factor has been the willingness of many of the big companies doing AI research, notably Facebook, Google, IBM, and Microsoft, to share their work by open sourcing some of their machine learning work.

This allows others to build on their work rather than starting from scratch. As a result, the AI industry is cooking along, and experiments that not long ago took weeks to run might take 15 minutes today. And for many real-world applications of computer vision, this process all happens continuously in microseconds, so that a computer today is able to be what scientists call “situationally aware.”

Source: towardsdatascience.com

Recent developments

Recent developments in deep learning approaches and advancements in technology have tremendously increased the capabilities of visual recognition systems. As a result, computer vision has been rapidly adopted by companies. Successful use cases of computer vision can be seen across industrial sectors, which has widened its applications and increased demand for computer vision tools.

Now, without losing more time, let’s jump into five exciting applications of computer vision.

Human Pose Estimation

Human pose estimation is an interesting application of computer vision. You may have heard of PoseNet, an open-source model for human pose estimation. In brief, pose estimation is a computer vision technique for inferring the pose of a person or object present in an image or video.

Before discussing how pose estimation works, let us first understand the “human pose skeleton.” It is the set of coordinates that defines the pose of a person. A connection between a pair of coordinates is known as a limb. Pose estimation is then performed by identifying, locating, and tracking the key points of the human pose skeleton in an image or video.
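To make the skeleton idea concrete, here is a minimal sketch of one possible representation: key points as named (x, y) coordinates and limbs as pairs of key point names. The key point names, the pixel values, and the helper functions are hypothetical and do not follow the format of any particular model or dataset.

```python
import math

# Hypothetical key points as (x, y) pixel coordinates.
KEYPOINTS = {
    "left_shoulder": (100.0, 50.0),
    "left_elbow": (100.0, 100.0),
    "left_wrist": (150.0, 100.0),
}

# Each limb connects a pair of key points.
LIMBS = [("left_shoulder", "left_elbow"), ("left_elbow", "left_wrist")]


def limb_length(keypoints, limb):
    """Length of a limb in pixels."""
    start, end = limb
    return math.dist(keypoints[start], keypoints[end])


def joint_angle(keypoints, a, joint, b):
    """Angle in degrees at `joint` between the limbs joint->a and joint->b."""
    jx, jy = keypoints[joint]
    v1 = (keypoints[a][0] - jx, keypoints[a][1] - jy)
    v2 = (keypoints[b][0] - jx, keypoints[b][1] - jy)
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    norm = math.hypot(*v1) * math.hypot(*v2)
    cosine = max(-1.0, min(1.0, dot / norm))  # guard against rounding drift
    return math.degrees(math.acos(cosine))
```

Quantities such as limb lengths and joint angles, tracked from frame to frame, are the kind of signal that downstream tasks like activity recognition can build on.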

source:https://www.researchgate.net/publication/338905462_The_’DEEP’_Landing_Error_Scoring_System The following are some of the applications of Human Pose Estimation-

Activity recognition for real-time sports analysis or surveillance system.

For Augmented reality experiences

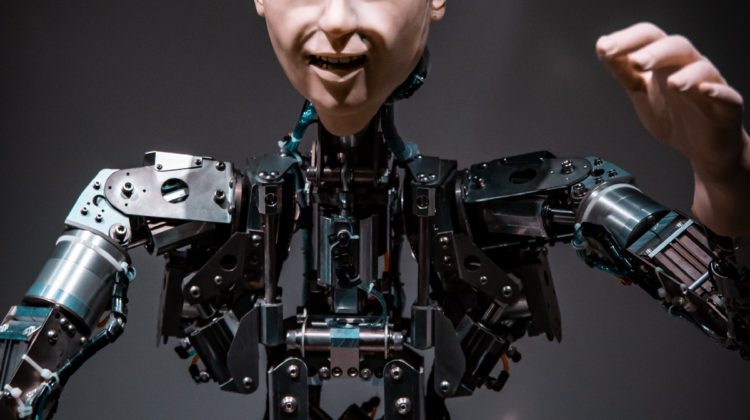

In training Robots

Animation and gaming

The following are some datasets you can use if you want to develop a pose estimation model yourself:

MPII

COCO keypoint challenge

HumanEva

I found DeepPose by Google to be a very interesting research paper that uses deep learning models for pose estimation. To dig deeper, you can read the many research papers available on pose estimation.

Source: analyticsvidhya.com

Image Transformation Using GANs

FaceApp is a very interesting and popular application. It is an image manipulation tool that transforms the input image using filters, such as the aging filter or the more recent gender swap filter.

Source: https://comicbook.com/marvel/news/marvel-men-faceapp-gender-swap/#18

Look at the above image. Funny, right? A few months ago, it was a hot topic on the internet, and people were sharing images after swapping their gender. But what is the technology behind such apps? Yes, you guessed correctly: it’s computer vision or, to be more specific, deep convolutional generative adversarial networks.

Generative adversarial networks, popularly known as GANs, are an exciting innovation in the field of computer vision. Although the idea behind GANs is older, the present form was proposed by Ian Goodfellow in 2014. Since then, it has seen a lot of development.

Training a GAN involves two neural networks, a generator and a discriminator, playing against each other in order to generate new data based on the distribution of the given training data. Although originally proposed as an unsupervised learning mechanism, GANs have proven to be good candidates for supervised as well as semi-supervised learning.
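The adversarial game can be illustrated with a deliberately tiny, one-dimensional toy. In this sketch the generator is just a line, G(z) = a * z + b, and the discriminator is a logistic unit, D(x) = sigmoid(w * x + c). These stand in for the deep networks a real GAN would use. The "real" data distribution, the learning rate, the batch size, and the non-saturating generator loss are all assumptions chosen for brevity, and nothing here is guaranteed to converge.

```python
import math
import random


def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, batch=16, lr=0.05, seed=0):
    """Train a 1-D toy GAN; returns generator (a, b) and discriminator (w, c)."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: G(z) = a * z + b
    w, c = 0.0, 0.0  # discriminator: D(x) = sigmoid(w * x + c)

    for _ in range(steps):
        # Assumed "real" data: samples from a normal distribution around 4.
        reals = [rng.gauss(4.0, 0.5) for _ in range(batch)]
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fakes = [a * z + b for z in noise]

        # Discriminator step: minimize -[log D(real) + log(1 - D(fake))].
        grad_w = grad_c = 0.0
        for x, f in zip(reals, fakes):
            d_real, d_fake = sigmoid(w * x + c), sigmoid(w * f + c)
            grad_w += (d_real - 1.0) * x + d_fake * f
            grad_c += (d_real - 1.0) + d_fake
        w -= lr * grad_w / batch
        c -= lr * grad_c / batch

        # Generator step: minimize -log D(G(z)) against the updated discriminator.
        grad_a = grad_b = 0.0
        for z in noise:
            d_fake = sigmoid(w * (a * z + b) + c)
            grad_a += (d_fake - 1.0) * w * z
            grad_b += (d_fake - 1.0) * w
        a -= lr * grad_a / batch
        b -= lr * grad_b / batch

    return a, b, w, c
```

Each loop iteration is one round of the game: the discriminator first gets better at telling real samples from generated ones, and the generator then shifts its output to fool the updated discriminator. Because this discriminator is only linear in x, it can mostly push the generator's offset `b` toward the real data; matching richer distributions is what the deeper networks in practical GANs are for.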

Source: analyticsvidhya.com
