This tutorial is on the basics of linear regression. It is also a continuation of the Intro to Machine Learning post, “What is Machine Learning?”, which can be found here.

Linear regression is one of the fundamental tools for data scientists and machine learning practitioners. We reference the equation y = mx + b in the “What is Machine Learning?” post, which can be thought of as the basis of linear regression. Linear regression models are highly explainable, quick to train, can provide insights into your data, and be further optimized to make better predictions. If you’re just starting out with data science or machine learning, it’s a good idea to dive into linear regression to get an understanding of how data can be estimated using functions.

The formula is very similar! Instead of y = mx + b, with Linear Regression we typically write the equation as:

Or without the multiplication symbols:

Don’t worry, it’s not as complicated as it may look!

What is y?

We still have y just like in y = mx + b, which is the output. This is the y-axis value you care about and want to find what columns or features impact it.

What is β0?

We also have β0, pronounced “Beta Not”, and think of this as the y-intercept which is found as b in y = mx + b.

What about the βx stuff?

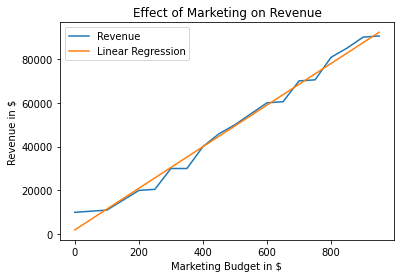

Now that you know we have y and b from y = mx + b that leaves us with mx. If you guessed, this is what we are seeing with the βx’s or the Betas with their corresponding x’s. The Betas are like the slopes or m in y = mx + b. The x’s are the inputs from the data. An example of x can be marketing dollars you put into a project and then you want y to tell you approximately how much revenue you get out of it. Since in linear regression and machine learning we typically have more than one x or column of data to help us determine its relationship with y, we also have many Betas to pair with those many x‘s. This would be like if you had multiple mx‘s in your y = mx + b equation.

So what are the Betas exactly?

As for β these are what are called the coefficients. These are very important and essentially explain your model. These are the weights and learned values from linear regression which tell you how much each feature or column impacts your prediction or y-axis variable, and by how much.

Okay, then what’s that last greek letter ε at the end?

That greek letter is epsilon. This essentially says you will have some unexplained error in your model. Another way of putting it is that you probably won’t be able to explain everything going on in your data with this short equation, so we throw ε there at the end to signify that there is just some error or noise going on we can’t explain right now.

For this example, we really just need Scikit Learn. If you aren’t familiar with Scikit Learn, it is one of the most popular Python libraries to run machine learning algorithms. I am going to create some fake sample data about housing prices. I will be creating the columns for number of bedrooms, number of bathrooms, the square footage, and then for the price of the house. In this example think of the price of the house as the y-axis or y and all of the other columns as the multiple x‘s in our equation. After running the data through a Linear Regression model, we should see how they impacted the price of the house.

Note: I will generate some sample data and use Numpy to store the data in an object. This isn’t specifically needed to run Linear Regression, but are used just for the sake of the example.

Here we are importing the libraries Numpy and Scikit Learn. Numpy is used to create data structures, while scikit learn is used for a lot of machine learning use cases.

# Import numpy to create numpy arrays

import numpy as np# Import Scikit-Learn to allow us to run Linear Regression

from sklearn.linear_model import LinearRegression

The data we have created here is some fake sample data on housing prices and their respective features associated with number of bedrooms, bathrooms, and its square footage.

Note: These prices are represented in 100k. So 150 really means 150k or 150,000 dollars instead of just 150 dollars.

# Creating sample data

# Price is represented in 100k dollars

price = np.array([150, 500, 225, 975, 735, 950, 325, 680, 220, 330])

beds = [2, 4, 3, 5, 4, 5, 3, 4, 2, 3]

baths = [1, 2, 2, 3, 2, 3, 2, 2, 1, 2]

sq_ft = [1100, 2800, 1500, 4800, 3500, 5000, 2200, 3100, 1650, 2000]

These two lines are to shape the data properly to use with scikit learn. We stack the multiple arrays (or the x’s) into one numpy object. While also specifying the “shape” of the price array.

# Combining the multiple lists into one object called "X"

X = np.column_stack([beds, baths, sq_ft])# Reshaping the price list to work with scikit learn

y = price.reshape(-1, 1)

Now we can create the shell of the linear regression model using this line below.

# Instantiating the model object

model = LinearRegression()

Once we have created the shell, we need to fit the model with data. This is how we can get the Beta values and find some function that can approximate a house’s price, given its number of bedrooms, bathrooms, and its square footage.

# Fitting the model with data

fitted_model = model.fit(X, y)

We have trained a linear regression model! Now let’s take a look at the coefficients and decipher what they mean.

The coefficient, or the beta one value, for number of bedrooms = 115.55210418237826The coefficient, or the beta two value, for number of bathrooms = -112.47160198590979The coefficient, or the beta three value, for square footage = 0.18603883444673386The y-intercept, or the 'beta not' value =

-184.8865379117235

Let’s start with the y-intercept a.k.a β0 or “Beta Not”.

This value is approximately -184.89, so what does this mean? Well it means that if we had 0 bedrooms, 0 bathrooms, and 0 square footage (0’s across the board) we’d end up with a negative price of -184k for a house. Now obviously this is unrealistic, but remember a model like this doesn’t have an understanding of how housing prices work. Really just think of this as the y-intercept, whether or not it makes sense in this case.

Next lets look at the other coefficients.

Starting with number of bedrooms, the coefficient value is ~115.56. This means that if the number of bedrooms increased by one (and the other inputs did not change) the price of the house will increase by ~115k dollars, on average. This is the same with the square footage coefficient, except the price would increase by ~0.19k dollars on average if square footage were to increase by one square foot. Think of the coefficients as the slope values or m in y = mx + b. As x increases, the slope is ~115.56, in the case of bedrooms, and increases the y-axis by this much.

What’s going on with the negative coefficient and bathrooms?

This is a great question. What this means is that for every increase in number of bathrooms, the model expects the price to drop by ~112k dollars. That’s why we see a negative value. Does this make sense? Not really. However, this was a good sample problem to show you can have negative coefficients and how we can interpret them. In machine learning we also have to be wary of collinearity, or when multiple features are extremely correlated with one another. Also, more data helps with underfit machine learning models.

Some next steps on how to make your model better include:

- Adding more rows of data

- Normalize your data. Scale each column between 0 and 1 or between -1 and 1 to help the model. It makes learning difficult for the model when you don’t scale your data since it could weigh features, that just have higher values naturally, more than others.

- Regularization (this attempts to use features or inputs that impact the model more, while weighing others lower) with Lasso or Ridge regression.

- Finding more features or columns to use.

- Look into Additive models or other Regression models.

I hope this tutorial was informative and you can take away learnings about linear regression. Linear Regression is a fundamental tool in any Data Scientist’s tool belt. You can look at coefficients and attempt to learn what data is impacting your model. You can use Linear Regression as a baseline tool to determine if other models may be better. Linear Regression is quick to train and doesn’t require a lot of data. Although, in many cases it can be too simple. Therefore, don’t count out more complex methods or additional methods to make your linear model better!