Tensors are one of the most important and fundamental topics in deep learning!

Before moving on to tensors, let us see what deep learning is.

Deep learning is a subset of machine learning, a field in which algorithms learn from data and improve on their own instead of being explicitly programmed for every task. While classical machine learning often uses simpler models, deep learning works with artificial neural networks, which are loosely inspired by how humans think and learn. TensorFlow and PyTorch are two widely used deep learning frameworks.

What is a tensor in a deep learning framework?

Tensors are the data structure used by machine learning systems, and getting to know them is an essential skill you should build early on.

A tensor is a container for numerical data. It is the way we store the information that we’ll use within our system.

Three primary attributes define a tensor:

- Rank

- Shape

- Data type

Let us look at these three primary attributes in detail.

The rank of a tensor is its number of axes.

Examples:

The rank of a matrix is 2 because it has two axes.

The rank of a vector is 1 because it has a single axis.

The shape of a tensor refers to the number of elements along each axis.

Examples:

A square matrix may have shape (2, 2).

A tensor of rank 3 may have shape (3, 5, 8).
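To make rank and shape concrete, here is a minimal sketch using NumPy arrays as tensors. The variable names and values are only illustrative; in NumPy, `ndim` gives the rank and `shape` gives the size along each axis.

```python
import numpy as np

# A vector has one axis, a matrix has two, and a rank-3 tensor has three.
vector = np.array([1, 2, 3, 4])
matrix = np.array([[1, 2], [3, 4]])
rank3 = np.zeros((3, 5, 8))

print(vector.ndim, vector.shape)  # 1 (4,)
print(matrix.ndim, matrix.shape)  # 2 (2, 2)
print(rank3.ndim, rank3.shape)    # 3 (3, 5, 8)
```

Notice that the rank is always equal to the length of the shape tuple.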

The data type of a tensor refers to the type of data contained in it.

Here are some of the supported data types:

- float32

- float64

- uint8

- int32

- int64
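As a small illustration, assuming NumPy as the tensor library, the data type can be chosen when a tensor is created and inspected through its `dtype` attribute. The arrays below are made-up examples.

```python
import numpy as np

# Choose the data type explicitly at creation time.
pixels = np.array([0, 128, 255], dtype=np.uint8)
weights = np.array([0.1, 0.2, 0.3], dtype=np.float32)

print(pixels.dtype)   # uint8
print(weights.dtype)  # float32

# astype returns a converted copy; the original array keeps its type.
weights64 = weights.astype(np.float64)
print(weights64.dtype)  # float64
print(weights.dtype)    # float32
```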

Now let me describe tensors in terms of familiar mathematical objects.

A scalar (0D tensor) has rank 0 and contains a single number. Scalars are also called 0-dimensional tensors.

The image below shows how to construct a 0D tensor using NumPy.
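In code, a minimal sketch of a 0D tensor in NumPy might look like this (the value 12 is arbitrary):

```python
import numpy as np

# Passing a single number to np.array creates a 0D tensor (a scalar).
scalar = np.array(12)

print(scalar)        # 12
print(scalar.ndim)   # 0
print(scalar.shape)  # ()
```

The empty shape tuple tells us the tensor has no axes at all.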

A vector (1D tensor) has rank 1 and represents an array of numbers.

The image below shows a vector with shape (4,).

A matrix (2D tensor) has rank 2 and represents an array of vectors. The two axes of a matrix are usually referred to as rows and columns.

The image below shows a matrix with shape (3, 4).

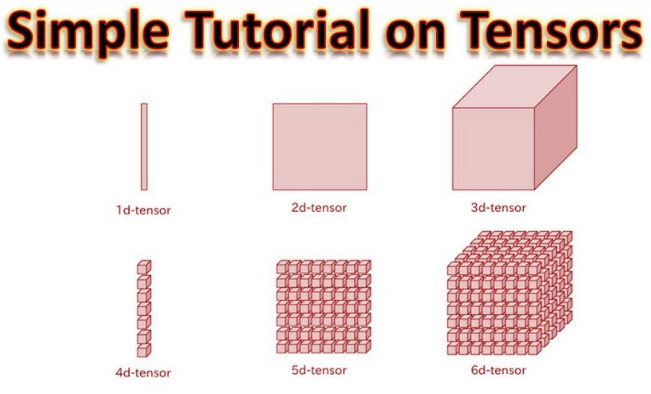
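The same vector and matrix shapes can be reproduced in NumPy. This is only a sketch with made-up values, chosen to match the shapes (4,) and (3, 4) mentioned above.

```python
import numpy as np

# A 1D tensor: one axis with 4 elements.
vector = np.array([12, 3, 6, 14])

# A 2D tensor: 3 rows and 4 columns.
matrix = np.array([[5, 78, 2, 34],
                   [6, 79, 3, 35],
                   [7, 80, 4, 36]])

print(vector.ndim, vector.shape)  # 1 (4,)
print(matrix.ndim, matrix.shape)  # 2 (3, 4)
```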

You can obtain higher-dimensional tensors (3D, 4D, etc.) by packing lower-dimensional tensors in an array. Each level of packing adds one axis. For instance, packing 4D tensors in an array gives us a 5D tensor.
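One way to see packing in action is with `np.stack`, which joins equally shaped tensors along a new first axis. The sizes below are arbitrary; the point of the sketch is that every level of packing adds exactly one axis.

```python
import numpy as np

# Pack two (3, 4) matrices into one 3D tensor.
matrices = [np.zeros((3, 4)) for _ in range(2)]
tensor_3d = np.stack(matrices)
print(tensor_3d.ndim, tensor_3d.shape)  # 3 (2, 3, 4)

# Pack five 3D tensors into one 4D tensor.
tensor_4d = np.stack([tensor_3d] * 5)
print(tensor_4d.ndim, tensor_4d.shape)  # 4 (5, 2, 3, 4)
```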

Here are some common tensor representations:

Vectors: 1D — (features)

Sequences: 2D — (timesteps, features)

Images: 3D — (height, width, channels)

Videos: 4D — (frames, height, width, channels)
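Here is a rough sketch of these representations as NumPy arrays. All sizes (10 features, 50 timesteps, 28x28 RGB frames, 16 frames) are assumptions picked only for illustration.

```python
import numpy as np

features = np.zeros(10)             # (features,)
sequence = np.zeros((50, 10))       # (timesteps, features)
image = np.zeros((28, 28, 3))       # (height, width, channels)
video = np.zeros((16, 28, 28, 3))   # (frames, height, width, channels)

for name, tensor in [("vector", features), ("sequence", sequence),
                     ("image", image), ("video", video)]:
    print(name, tensor.ndim, tensor.shape)
```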

Generally, machine learning algorithms process a subset of the data at a time. These subsets are called batches.

When using a batch of data, the tensor’s first axis is reserved for the size of the batch (the number of samples).

For example, if you’re handling 2D tensors (matrices), a batch of them will have a total of 3 dimensions:

- (samples, rows, columns)

Notice how the first axis is the number of matrices that we have in our batch.

Following the same logic, a batch of images can be represented as a 4D tensor:

- (samples, height, width, channels)

And a batch of videos as a 5D tensor:

- (samples, frames, height, width, channels)
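To see the batch axis in practice, here is a minimal sketch that splits a made-up dataset of 100 images into batches with plain NumPy slicing. The dataset size, image size, and batch size of 32 are assumptions for the example; real frameworks provide their own data loaders for this.

```python
import numpy as np

batch_size = 32

# A hypothetical dataset: 100 RGB images of 28x28 pixels.
dataset = np.zeros((100, 28, 28, 3), dtype=np.uint8)

# Slice along the first axis (samples) to form batches.
batches = [dataset[i:i + batch_size]
           for i in range(0, len(dataset), batch_size)]

print(len(batches))       # 4
print(batches[0].shape)   # (32, 28, 28, 3)
print(batches[-1].shape)  # (4, 28, 28, 3)

# Indexing the first axis gives back a single sample without the batch axis.
print(batches[0][0].shape)  # (28, 28, 3)
```

Notice that the last batch is smaller because 100 is not a multiple of 32.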

I hope you now have a clear understanding of the tensor concept. If you still have any questions, don’t hesitate to contact me at balavenkatesh.com