Managing complex ML systems at scale

Machine Learning (ML) IT Operations (Ops) aims to apply the engineering culture and practices promoted by DevOps to ML systems. But why?

- Creating an ML model is the easy part. Operationalising and managing the lifecycle of ML models, data and experiments is where things get complicated. Indeed, a widely cited estimate is that 87% of data science projects never make it into production [1].

- AI-driven organisations are using data and machine learning to solve their hardest problems and are reaping huge benefits. But ML systems can accumulate significant technical debt if they are not managed properly [2].

Data science and ML are becoming table stakes for solving complex real-world problems, transforming industries, and delivering value in many different domains. Progress in the field over the last 5–10 years has accelerated mainstream adoption of ML systems, because the following requisite ‘ingredients’ are now far more accessible:

- Large datasets

- Inexpensive on-demand compute resources

- Specialised accelerators for ML on various cloud platforms

- Rapid advances in different ML research fields (such as computer vision, natural language understanding, and recommendation systems)

Therefore, many businesses are investing in their data science teams and ML capabilities to develop predictive models that can deliver business value to their users.

Data scientists can easily build an ML model on a local machine that performs perfectly well when running in isolation. The real challenge isn’t building an ML model; it is building an integrated ML system and operating it continuously in production. As shown in the diagram below, ML code is just one small component of what is required to run an ML system.

It’s the ‘other 95%’ of required surrounding components in the diagram that are vast and complex. To develop and operate complex systems like these, you can apply DevOps principles to ML systems (MLOps).

There are many factors that make deploying ML models at scale very challenging, but in a nutshell:

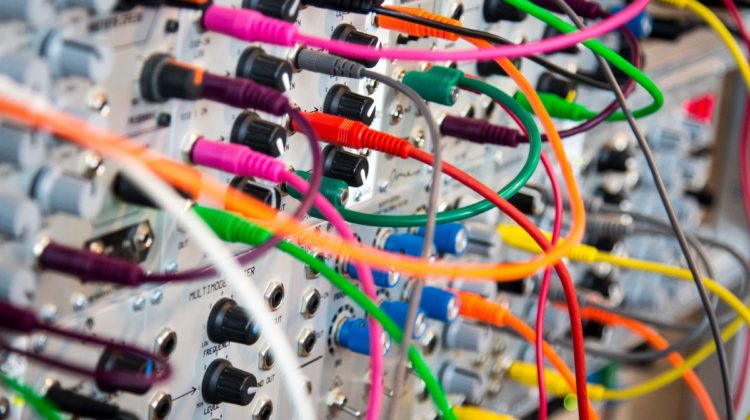

- CODE: Catering for the different ML libraries, frameworks and programming languages used to build the models. They don’t always interact well with each other, and variations in package versions and other interdependencies can break your pipeline. Modern container technologies, orchestrated by platforms such as Kubernetes, can solve portability and compatibility issues when moving code into a production environment, but reproducibility can still be a challenge. Data scientists may build many versions of a particular model, each using different programming languages or libraries (or different versions of the same library), and it is difficult to track these changes manually.

- COMPUTE: Training, re-training, and serving predictions from ML models can be very computationally intensive. Serving at speed in production requires expensive hardware, which can mean a huge capital investment. Cloud-based services can begin to address the economics and provide the ability to scale quickly when there are spikes in demand, but there is still a need to maintain model performance and accuracy.
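To make the CODE point a little more concrete, here is a minimal sketch of one way to stop tracking library versions by hand: snapshot the environment a model was built in and reduce it to a single fingerprint that can be stored next to the model. The function names `snapshot_environment` and `fingerprint` are my own illustrative choices, not part of any MLOps tool, and the sketch only uses the Python standard library.

```python
import hashlib
import json
import platform
from importlib import metadata


def snapshot_environment() -> dict:
    """Record the Python version and the version of every installed package."""
    packages = {}
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:  # skip distributions whose metadata has no name
            packages[name.lower()] = dist.version
    return {
        "python": platform.python_version(),
        "packages": dict(sorted(packages.items())),
    }


def fingerprint(snapshot: dict) -> str:
    """Reduce a snapshot to one SHA-256 hex digest, independent of key order."""
    payload = json.dumps(snapshot, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


if __name__ == "__main__":
    snapshot = snapshot_environment()
    # Store this value alongside the trained model artefact.
    print(fingerprint(snapshot))
```

If two model versions carry different fingerprints, at least one package or the interpreter itself changed between them, which is a useful first clue when a pipeline that worked yesterday breaks today.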

Technical debt is a complex topic in its own right and applies more broadly to IT systems in general, but I’ll attempt to give you a ‘10,000 foot view’ of it here and explain how it relates to ML systems.

In simple terms, technical debt is a phenomenon that builds up over time, often unintentionally, and results in increased engineering cost, which can negatively impact product development velocity, quality, and engineering team satisfaction. It can be introduced into a system (or into particular versions or component parts of it) because the way it is implemented results in:

- Lower quality compared to the objective of the system,

- Extra complexity compared to the objective of the system,

- Extra latency compared to the objective of the system,

- or any combination of the above

ML systems can exacerbate technical debt because their behaviour is defined by data as well as by code, unlike traditional IT systems where behaviour is defined by code alone. For example, a model’s performance is heavily dependent on the data it is trained on. Left unchecked, changes in training data create a dependency that can build debt in much the same way that code complexity does. To make things worse, data dependencies can be much harder to pinpoint than code dependencies, for which there are well-defined methods of detection.
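As a rough illustration of what keeping a data dependency in check might look like, the sketch below profiles two snapshots of training data and flags any numeric column whose mean has moved noticeably. The column names, the sample values and the 0.5 standard deviation threshold are all assumptions made up for this example; real drift detection usually needs more careful statistics.

```python
from statistics import mean, pstdev


def profile(rows: list[dict], columns: list[str]) -> dict:
    """Summarise each numeric column as (mean, population standard deviation)."""
    return {
        col: (mean(row[col] for row in rows), pstdev(row[col] for row in rows))
        for col in columns
    }


def shifted_columns(baseline: dict, candidate: dict, threshold: float = 0.5) -> list[str]:
    """Return columns whose mean moved by more than `threshold` baseline std devs."""
    flagged = []
    for col, (base_mean, base_std) in baseline.items():
        cand_mean, _ = candidate[col]
        scale = base_std if base_std > 0 else 1.0  # avoid dividing by zero
        if abs(cand_mean - base_mean) / scale > threshold:
            flagged.append(col)
    return flagged


# Hypothetical training snapshots taken a month apart.
last_month = [
    {"age": 30, "income": 40_000},
    {"age": 40, "income": 50_000},
    {"age": 50, "income": 60_000},
]
this_month = [
    {"age": 31, "income": 70_000},
    {"age": 41, "income": 80_000},
    {"age": 49, "income": 90_000},
]

columns = ["age", "income"]
drifted = shifted_columns(profile(last_month, columns), profile(this_month, columns))
print(drifted)  # only 'income' is flagged for these sample values
```

A check like this does not fix the dependency, but it turns a silent change in the training data into something visible that can be reviewed.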

While there is no silver bullet for overcoming the difficulties of ML system deployment while simultaneously reducing the burden of technical debt, building a culture of MLOps in your organisation is a great starting point.

Like DevOps, MLOps is an ML engineering culture and practice that aims at unifying ML system development (Dev) and ML system operation (Ops). Unlike traditional software systems, however, ML systems present unique challenges to core DevOps principles like Continuous Integration and Continuous Delivery (CI/CD).

Key ways in which ML systems differ from other software systems:

- Continuous Integration (CI) is not only about testing and validating code and components, but also testing and validating data, data schemas, and models.

- Continuous Delivery (CD) is not only about a single software package or a service, but a system (an ML training pipeline) that should automatically deploy another service (model prediction service).

- Continuous Training (CT) is a new property, unique to ML systems, that’s concerned with automatically retraining candidate models for testing and serving.

- Continuous Monitoring (CM) is not only about catching errors in production systems, but also about monitoring production inference data and model performance metrics tied to business outcomes.
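To give a flavour of the CI point above, that data and schemas need testing as well as code, here is a minimal sketch of a schema check that could run as an ordinary test in a CI pipeline. `EXPECTED_SCHEMA`, its columns and `validate_batch` are hypothetical names invented for this example; in practice a dedicated data validation library would likely do this job.

```python
# Hypothetical schema for a training table: column name -> expected Python type.
EXPECTED_SCHEMA = {"age": int, "income": float, "churned": bool}


def validate_batch(rows: list[dict], schema: dict) -> list[str]:
    """Return a human-readable error for every schema violation in `rows`."""
    errors = []
    for index, row in enumerate(rows):
        for column in sorted(schema.keys() - row.keys()):
            errors.append(f"row {index}: missing column '{column}'")
        for column in sorted(row.keys() - schema.keys()):
            errors.append(f"row {index}: unexpected column '{column}'")
        for column, expected in schema.items():
            # Exact type match, so that True is not silently accepted as an int.
            if column in row and type(row[column]) is not expected:
                actual = type(row[column]).__name__
                errors.append(
                    f"row {index}: column '{column}' expected "
                    f"{expected.__name__}, got {actual}"
                )
    return errors


batch = [
    {"age": 42, "income": 55_000.0, "churned": False},
    {"age": "42", "income": 1.0},  # wrong type for age, churned is missing
]
for problem in validate_batch(batch, EXPECTED_SCHEMA):
    print(problem)
```

In a CI job, the build would simply fail whenever `validate_batch` returns a non-empty list, in the same way it fails on a broken unit test.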

Practicing MLOps means that you advocate for automation and monitoring at all steps of ML system construction, including integration, testing, releasing, deployment and infrastructure management.
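Continuous training and continuous monitoring fit together naturally: monitoring produces the signal, and that signal decides when retraining should be kicked off. The sketch below is one simple way that link could be automated, assuming a single quality metric (such as accuracy) is evaluated regularly on production data. The class name `RetrainingTrigger`, the baseline, the tolerance and the window size are all illustrative assumptions.

```python
from collections import deque


class RetrainingTrigger:
    """Signal retraining when the rolling mean of a metric drops too far."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 3):
        self.baseline = baseline    # metric value measured at deployment time
        self.tolerance = tolerance  # how much degradation is acceptable
        self.recent = deque(maxlen=window)  # keeps only the latest scores

    def observe(self, metric: float) -> bool:
        """Record a new evaluation score; return True when retraining is due."""
        self.recent.append(metric)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough evidence yet
        rolling_mean = sum(self.recent) / len(self.recent)
        return rolling_mean < self.baseline - self.tolerance


trigger = RetrainingTrigger(baseline=0.90, tolerance=0.05, window=3)
for score in [0.91, 0.88, 0.86, 0.80]:
    if trigger.observe(score):
        print(f"score {score}: retraining should be scheduled")
```

Using a rolling window rather than a single score is a deliberate choice here: one bad evaluation should not be enough to launch an expensive training run.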

The objective of this post is to explain the ‘why’ and ‘what’ of MLOps. If any of the challenges described here have resonated, you might be thinking about ‘how’ to deploy ML systems in your organisation. Here are some sensible jumping-off points:

- Seriously consider a cloud-first strategy for the training and deployment of ML models at scale. If you already have one, evaluate whether your current provider offers the right tooling and the best user experience to enable MLOps.

- Don’t reinvent the wheel. For example, running ML systems on Kubernetes requires intimate knowledge of containers, packaging, scaling, GPUs and so on. However, there are cloud-ready, open source solutions such as Kubeflow that leverage the benefits of Kubernetes but eliminate the headache of deployment, and that provide a framework for managing complex ML systems at scale.

You can find a wealth of information online, including many detailed articles and publications that delve further into how to move to a fully automated MLOps environment. I can highly recommend “Machine Learning Design Patterns: Solutions to Common Challenges in Data Preparation, Model Building, and MLOps”.

[1] VB Staff, Why Do 87% of Data Science Projects Never Make It Into Production? (2019), VentureBeat

[2] D. Sculley, Gary Holt, Daniel Golovin, Eugene Davydov, Todd Phillips, Dietmar Ebner, Vinay Chaudhary, Michael Young, Jean-François Crespo, Dan Dennison, Hidden Technical Debt in Machine Learning Systems (2015), Part of Advances in Neural Information Processing Systems 28 (NIPS 2015)

“Why Machine Learning Models Crash And Burn In Production”